Search Results for author: Xianglong Liu

Found 89 papers, 55 papers with code

IntraMix: Intra-Class Mixup Generation for Accurate Labels and Neighbors

no code implementations • 2 May 2024 • Shenghe Zheng, Hongzhi Wang, Xianglong Liu

Additionally, it establishes neighborhoods for the generated data by connecting them with data from the same class with high confidence, thereby enriching the neighborhoods of graphs.

How Good Are Low-bit Quantized LLaMA3 Models? An Empirical Study

1 code implementation • 22 Apr 2024 • Wei Huang, Xudong Ma, Haotong Qin, Xingyu Zheng, Chengtao Lv, Hong Chen, Jie Luo, Xiaojuan Qi, Xianglong Liu, Michele Magno

This exploration holds the potential to unveil new insights and challenges for low-bit quantization of LLaMA3 and other forthcoming LLMs, especially in addressing the performance degradation problems that LLM compression suffers from.

BinaryDM: Towards Accurate Binarization of Diffusion Model

1 code implementation • 8 Apr 2024 • Xingyu Zheng, Haotong Qin, Xudong Ma, Mingyuan Zhang, Haojie Hao, Jiakai Wang, Zixiang Zhao, Jinyang Guo, Xianglong Liu

With the advancement of diffusion models (DMs) and the substantially increased computational requirements, quantization emerges as a practical solution to obtain compact and efficient low-bit DMs.

2023 Low-Power Computer Vision Challenge (LPCVC) Summary

no code implementations • 11 Mar 2024 • Leo Chen, Benjamin Boardley, Ping Hu, Yiru Wang, Yifan Pu, Xin Jin, Yongqiang Yao, Ruihao Gong, Bo Li, Gao Huang, Xianglong Liu, Zifu Wan, Xinwang Chen, Ning Liu, Ziyi Zhang, Dongping Liu, Ruijie Shan, Zhengping Che, Fachao Zhang, Xiaofeng Mou, Jian Tang, Maxim Chuprov, Ivan Malofeev, Alexander Goncharenko, Andrey Shcherbin, Arseny Yanchenko, Sergey Alyamkin, Xiao Hu, George K. Thiruvathukal, Yung Hsiang Lu

This article describes the 2023 IEEE Low-Power Computer Vision Challenge (LPCVC).

DB-LLM: Accurate Dual-Binarization for Efficient LLMs

no code implementations • 19 Feb 2024 • Hong Chen, Chengtao Lv, Liang Ding, Haotong Qin, Xiabin Zhou, Yifu Ding, Xuebo Liu, Min Zhang, Jinyang Guo, Xianglong Liu, DaCheng Tao

Large language models (LLMs) have significantly advanced the field of natural language processing, while the expensive memory and computation consumption impede their practical deployment.

Accurate LoRA-Finetuning Quantization of LLMs via Information Retention

1 code implementation • 8 Feb 2024 • Haotong Qin, Xudong Ma, Xingyu Zheng, Xiaoyang Li, Yang Zhang, Shouda Liu, Jie Luo, Xianglong Liu, Michele Magno

This paper proposes a novel IR-QLoRA for pushing quantized LLMs with LoRA to be highly accurate through information retention.

BiLLM: Pushing the Limit of Post-Training Quantization for LLMs

1 code implementation • 6 Feb 2024 • Wei Huang, Yangdong Liu, Haotong Qin, Ying Li, Shiming Zhang, Xianglong Liu, Michele Magno, Xiaojuan Qi

Pretrained large language models (LLMs) exhibit exceptional general language processing capabilities but come with significant demands on memory and computational resources.

Pre-trained Trojan Attacks for Visual Recognition

no code implementations • 23 Dec 2023 • Aishan Liu, Xinwei Zhang, Yisong Xiao, Yuguang Zhou, Siyuan Liang, Jiakai Wang, Xianglong Liu, Xiaochun Cao, DaCheng Tao

This paper aims to raise awareness of the potential threats associated with applying PVMs in practical scenarios.

TFMQ-DM: Temporal Feature Maintenance Quantization for Diffusion Models

1 code implementation • 27 Nov 2023 • Yushi Huang, Ruihao Gong, Jing Liu, Tianlong Chen, Xianglong Liu

Remarkably, our quantization approach, for the first time, achieves model performance nearly on par with the full-precision model under 4-bit weight quantization.

Adversarial Examples in the Physical World: A Survey

1 code implementation • 1 Nov 2023 • Jiakai Wang, Donghua Wang, Jin Hu, Siyang Wu, Tingsong Jiang, Wen Yao, Aishan Liu, Xianglong Liu

However, current research on physical adversarial examples (PAEs) lacks a comprehensive understanding of their unique characteristics, leading to limited significance and understanding.

MIR2: Towards Provably Robust Multi-Agent Reinforcement Learning by Mutual Information Regularization

no code implementations • 15 Oct 2023 • Simin Li, Ruixiao Xu, Jun Guo, Pu Feng, Jiakai Wang, Aishan Liu, Yaodong Yang, Xianglong Liu, Weifeng Lv

Existing max-min optimization techniques in robust MARL seek to enhance resilience by training agents against worst-case adversaries, but this becomes intractable as the number of agents grows, leading to exponentially increasing worst-case scenarios.

OHQ: On-chip Hardware-aware Quantization

no code implementations • 5 Sep 2023 • Wei Huang, Haotong Qin, Yangdong Liu, Jingzhuo Liang, Yulun Zhang, Ying Li, Xianglong Liu

Mixed-precision quantization leverages multiple bit-width architectures to unleash the accuracy and efficiency potential of quantized models.

RobustMQ: Benchmarking Robustness of Quantized Models

no code implementations • 4 Aug 2023 • Yisong Xiao, Aishan Liu, Tianyuan Zhang, Haotong Qin, Jinyang Guo, Xianglong Liu

Quantization has emerged as an essential technique for deploying deep neural networks (DNNs) on devices with limited resources.

Isolation and Induction: Training Robust Deep Neural Networks against Model Stealing Attacks

1 code implementation • 2 Aug 2023 • Jun Guo, Aishan Liu, Xingyu Zheng, Siyuan Liang, Yisong Xiao, Yichao Wu, Xianglong Liu

However, these defenses are now suffering problems of high inference computational overheads and unfavorable trade-offs between benign accuracy and stealing robustness, which challenges the feasibility of deployed models in practice.

SysNoise: Exploring and Benchmarking Training-Deployment System Inconsistency

no code implementations • 1 Jul 2023 • Yan Wang, Yuhang Li, Ruihao Gong, Aishan Liu, Yanfei Wang, Jian Hu, Yongqiang Yao, Yunchen Zhang, Tianzi Xiao, Fengwei Yu, Xianglong Liu

Extensive studies have shown that deep learning models are vulnerable to adversarial and natural noises, yet little is known about model robustness on noises caused by different system implementations.

Towards Benchmarking and Assessing Visual Naturalness of Physical World Adversarial Attacks

1 code implementation • CVPR 2023 • Simin Li, Shuing Zhang, Gujun Chen, Dong Wang, Pu Feng, Jiakai Wang, Aishan Liu, Xin Yi, Xianglong Liu

First, to benchmark attack naturalness, we contribute the first Physical Attack Naturalness (PAN) dataset with human rating and gaze.

Latent Imitator: Generating Natural Individual Discriminatory Instances for Black-Box Fairness Testing

no code implementations • 19 May 2023 • Yisong Xiao, Aishan Liu, Tianlin Li, Xianglong Liu

Machine learning (ML) systems have achieved remarkable performance across a wide area of applications.

Outlier Suppression+: Accurate quantization of large language models by equivalent and optimal shifting and scaling

1 code implementation • 18 Apr 2023 • Xiuying Wei, Yunchen Zhang, Yuhang Li, Xiangguo Zhang, Ruihao Gong, Jinyang Guo, Xianglong Liu

The channel-wise shifting aligns the center of each channel for removal of outlier asymmetry.

Towards Accurate Post-Training Quantization for Vision Transformer

no code implementations • 25 Mar 2023 • Yifu Ding, Haotong Qin, Qinghua Yan, Zhenhua Chai, Junjie Liu, Xiaolin Wei, Xianglong Liu

We find the main reasons lie in (1) the existing calibration metric is inaccurate in measuring the quantization influence for extremely low-bit representation, and (2) the existing quantization paradigm is unfriendly to the power-law distribution of Softmax.

X-Adv: Physical Adversarial Object Attacks against X-ray Prohibited Item Detection

1 code implementation • 19 Feb 2023 • Aishan Liu, Jun Guo, Jiakai Wang, Siyuan Liang, Renshuai Tao, Wenbo Zhou, Cong Liu, Xianglong Liu, DaCheng Tao

In this paper, we take the first step toward the study of adversarial attacks targeted at X-ray prohibited item detection, and reveal the serious threats posed by such attacks in this safety-critical scenario.

Attacking Cooperative Multi-Agent Reinforcement Learning by Adversarial Minority Influence

1 code implementation • 7 Feb 2023 • Simin Li, Jun Guo, Jingqiao Xiu, Pu Feng, Xin Yu, Aishan Liu, Wenjun Wu, Xianglong Liu

To achieve maximum deviation in victim policies under complex agent-wise interactions, our unilateral attack aims to characterize and maximize the impact of the adversary on the victims.

BiBench: Benchmarking and Analyzing Network Binarization

1 code implementation • 26 Jan 2023 • Haotong Qin, Mingyuan Zhang, Yifu Ding, Aoyu Li, Zhongang Cai, Ziwei Liu, Fisher Yu, Xianglong Liu

Network binarization emerges as one of the most promising compression approaches offering extraordinary computation and memory savings by minimizing the bit-width.

Exploring the Relationship Between Architectural Design and Adversarially Robust Generalization

no code implementations • CVPR 2023 • Aishan Liu, Shiyu Tang, Siyuan Liang, Ruihao Gong, Boxi Wu, Xianglong Liu, DaCheng Tao

In particular, we comprehensively evaluated 20 most representative adversarially trained architectures on ImageNette and CIFAR-10 datasets towards multiple $\ell_p$-norm adversarial attacks.

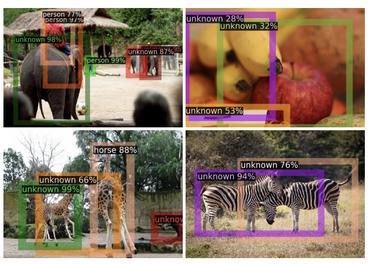

Annealing-Based Label-Transfer Learning for Open World Object Detection

1 code implementation • CVPR 2023 • Yuqing Ma, Hainan Li, Zhange Zhang, Jinyang Guo, Shanghang Zhang, Ruihao Gong, Xianglong Liu

To the best of our knowledge, this is the first OWOD work without manual unknown selection.

BiFSMNv2: Pushing Binary Neural Networks for Keyword Spotting to Real-Network Performance

1 code implementation • 13 Nov 2022 • Haotong Qin, Xudong Ma, Yifu Ding, Xiaoyang Li, Yang Zhang, Zejun Ma, Jiakai Wang, Jie Luo, Xianglong Liu

We highlight that benefiting from the compact architecture and optimized hardware kernel, BiFSMNv2 can achieve an impressive 25.1x speedup and 20.2x storage-saving on edge hardware.

Exploring the Relationship between Architecture and Adversarially Robust Generalization

no code implementations • 28 Sep 2022 • Aishan Liu, Shiyu Tang, Siyuan Liang, Ruihao Gong, Boxi Wu, Xianglong Liu, DaCheng Tao

In particular, we comprehensively evaluated 20 most representative adversarially trained architectures on ImageNette and CIFAR-10 datasets towards multiple $\ell_p$-norm adversarial attacks.

Outlier Suppression: Pushing the Limit of Low-bit Transformer Language Models

1 code implementation • 27 Sep 2022 • Xiuying Wei, Yunchen Zhang, Xiangguo Zhang, Ruihao Gong, Shanghang Zhang, Qi Zhang, Fengwei Yu, Xianglong Liu

With the trends of large NLP models, the increasing memory and computation costs hinder their efficient deployment on resource-limited devices.

Improving Robust Fairness via Balance Adversarial Training

no code implementations • 15 Sep 2022 • ChunYu Sun, Chenye Xu, Chengyuan Yao, Siyuan Liang, Yichao Wu, Ding Liang, Xianglong Liu, Aishan Liu

Adversarial training (AT) methods are effective against adversarial attacks, yet they introduce severe disparity of accuracy and robustness between different classes, known as the robust fairness problem.

Towards Accurate Binary Neural Networks via Modeling Contextual Dependencies

1 code implementation • 3 Sep 2022 • Xingrun Xing, Yangguang Li, Wei Li, Wenrui Ding, Yalong Jiang, Yufeng Wang, Jing Shao, Chunlei Liu, Xianglong Liu

Second, to improve the robustness of binary models with contextual dependencies, we compute the contextual dynamic embeddings to determine the binarization thresholds in general binary convolutional blocks.

Hierarchical Perceptual Noise Injection for Social Media Fingerprint Privacy Protection

1 code implementation • 23 Aug 2022 • Simin Li, Huangxinxin Xu, Jiakai Wang, Aishan Liu, Fazhi He, Xianglong Liu, DaCheng Tao

The threat of fingerprint leakage from social media raises a strong desire for anonymizing shared images while maintaining image qualities, since fingerprints act as a lifelong individual biometric password.

Frequency Domain Model Augmentation for Adversarial Attack

2 code implementations • 12 Jul 2022 • Yuyang Long, Qilong Zhang, Boheng Zeng, Lianli Gao, Xianglong Liu, Jian Zhang, Jingkuan Song

Specifically, we apply a spectrum transformation to the input and thus perform the model augmentation in the frequency domain.

Defensive Patches for Robust Recognition in the Physical World

1 code implementation • CVPR 2022 • Jiakai Wang, Zixin Yin, Pengfei Hu, Aishan Liu, Renshuai Tao, Haotong Qin, Xianglong Liu, DaCheng Tao

For the generalization against diverse noises, we inject class-specific identifiable patterns into a confined local patch prior, so that defensive patches could preserve more recognizable features towards specific classes, leading models for better recognition under noises.

Delving into the Estimation Shift of Batch Normalization in a Network

1 code implementation • CVPR 2022 • Lei Huang, Yi Zhou, Tian Wang, Jie Luo, Xianglong Liu

We define the estimation shift magnitude of BN to quantitatively measure the difference between its estimated population statistics and expected ones.

BiBERT: Accurate Fully Binarized BERT

1 code implementation • ICLR 2022 • Haotong Qin, Yifu Ding, Mingyuan Zhang, Qinghua Yan, Aishan Liu, Qingqing Dang, Ziwei Liu, Xianglong Liu

The large pre-trained BERT has achieved remarkable performance on Natural Language Processing (NLP) tasks but is also computation and memory expensive.

QDrop: Randomly Dropping Quantization for Extremely Low-bit Post-Training Quantization

2 code implementations • 11 Mar 2022 • Xiuying Wei, Ruihao Gong, Yuhang Li, Xianglong Liu, Fengwei Yu

With QDROP, the limit of PTQ is pushed to the 2-bit activation for the first time and the accuracy boost can be up to 51.49%.

Practical Evaluation of Adversarial Robustness via Adaptive Auto Attack

1 code implementation • CVPR 2022 • Ye Liu, Yaya Cheng, Lianli Gao, Xianglong Liu, Qilong Zhang, Jingkuan Song

Specifically, by observing that adversarial examples to a specific defense model follow some regularities in their starting points, we design an Adaptive Direction Initialization strategy to speed up the evaluation.

BiFSMN: Binary Neural Network for Keyword Spotting

1 code implementation • 14 Feb 2022 • Haotong Qin, Xudong Ma, Yifu Ding, Xiaoyang Li, Yang Zhang, Yao Tian, Zejun Ma, Jie Luo, Xianglong Liu

Then, to allow the instant and adaptive accuracy-efficiency trade-offs at runtime, we also propose a Thinnable Binarization Architecture to further liberate the acceleration potential of the binarized network from the topology perspective.

Excitement Surfeited Turns to Errors: Deep Learning Testing Framework Based on Excitable Neurons

1 code implementation • 12 Feb 2022 • Haibo Jin, Ruoxi Chen, Haibin Zheng, Jinyin Chen, Yao Cheng, Yue Yu, Xianglong Liu

By maximizing the number of excitable neurons concerning various wrong behaviors of models, DeepSensor can generate testing examples that effectively trigger more errors due to adversarial inputs, polluted data and incomplete training.

Revisiting Open World Object Detection

1 code implementation • 3 Jan 2022 • Xiaowei Zhao, Xianglong Liu, Yifan Shen, Yixuan Qiao, Yuqing Ma, Duorui Wang

Open World Object Detection (OWOD), simulating the real dynamic world where knowledge grows continuously, attempts to detect both known and unknown classes and incrementally learn the identified unknown ones.

Exploring Endogenous Shift for Cross-Domain Detection: A Large-Scale Benchmark and Perturbation Suppression Network

1 code implementation • CVPR 2022 • Renshuai Tao, Hainan Li, Tianbo Wang, Yanlu Wei, Yifu Ding, Bowei Jin, Hongping Zhi, Xianglong Liu, Aishan Liu

To handle the endogenous shift, we further introduce the Perturbation Suppression Network (PSN), motivated by the fact that this shift is mainly caused by two types of perturbations: category-dependent and category-independent ones.

Distribution-sensitive Information Retention for Accurate Binary Neural Network

no code implementations • 25 Sep 2021 • Haotong Qin, Xiangguo Zhang, Ruihao Gong, Yifu Ding, Yi Xu, Xianglong Liu

We present a novel Distribution-sensitive Information Retention Network (DIR-Net) that retains the information in the forward and backward propagation by improving internal propagation and introducing external representations.

Harnessing Perceptual Adversarial Patches for Crowd Counting

1 code implementation • 16 Sep 2021 • Shunchang Liu, Jiakai Wang, Aishan Liu, Yingwei Li, Yijie Gao, Xianglong Liu, DaCheng Tao

Crowd counting, which has been widely adopted for estimating the number of people in safety-critical scenes, is shown to be vulnerable to adversarial examples in the physical world (e.g., adversarial patches).

RobustART: Benchmarking Robustness on Architecture Design and Training Techniques

1 code implementation • 11 Sep 2021 • Shiyu Tang, Ruihao Gong, Yan Wang, Aishan Liu, Jiakai Wang, Xinyun Chen, Fengwei Yu, Xianglong Liu, Dawn Song, Alan Yuille, Philip H. S. Torr, DaCheng Tao

Thus, we propose RobustART, the first comprehensive Robustness investigation benchmark on ImageNet regarding ARchitecture design (49 human-designed off-the-shelf architectures and 1200+ networks from neural architecture search) and Training techniques (10+ techniques, e.g., data augmentation) towards diverse noises (adversarial, natural, and system noises).

Diverse Sample Generation: Pushing the Limit of Generative Data-free Quantization

1 code implementation • 1 Sep 2021 • Haotong Qin, Yifu Ding, Xiangguo Zhang, Jiakai Wang, Xianglong Liu, Jiwen Lu

We first give a theoretical analysis that the diversity of synthetic samples is crucial for the data-free quantization, while in existing approaches, the synthetic data completely constrained by BN statistics experimentally exhibit severe homogenization at distribution and sample levels.

Towards Real-world X-ray Security Inspection: A High-Quality Benchmark and Lateral Inhibition Module for Prohibited Items Detection

1 code implementation • ICCV 2021 • Renshuai Tao, Yanlu Wei, Xiangjian Jiang, Hainan Li, Haotong Qin, Jiakai Wang, Yuqing Ma, Libo Zhang, Xianglong Liu

In this work, we first present a High-quality X-ray (HiXray) security inspection image dataset, which contains 102,928 common prohibited items of 8 categories.

Towards Real-World Prohibited Item Detection: A Large-Scale X-ray Benchmark

1 code implementation • ICCV 2021 • Boying Wang, Libo Zhang, Longyin Wen, Xianglong Liu, Yanjun Wu

Towards real-world prohibited item detection, we collect a large-scale dataset, named as PIDray, which covers various cases in real-world scenarios for prohibited item detection, especially for deliberately hidden items.

Delving Deep into the Generalization of Vision Transformers under Distribution Shifts

1 code implementation • CVPR 2022 • Chongzhi Zhang, Mingyuan Zhang, Shanghang Zhang, Daisheng Jin, Qiang Zhou, Zhongang Cai, Haiyu Zhao, Xianglong Liu, Ziwei Liu

By comprehensively investigating these GE-ViTs and comparing with their corresponding CNN models, we observe: 1) For the enhanced model, larger ViTs still benefit more for the OOD generalization.

Multi-Pretext Attention Network for Few-shot Learning with Self-supervision

2 code implementations • 10 Mar 2021 • Hainan Li, Renshuai Tao, Jun Li, Haotong Qin, Yifu Ding, Shuo Wang, Xianglong Liu

Self-supervised learning has emerged as an efficient method to utilize unlabeled data.

Over-sampling De-occlusion Attention Network for Prohibited Items Detection in Noisy X-ray Images

1 code implementation • 1 Mar 2021 • Renshuai Tao, Yanlu Wei, Hainan Li, Aishan Liu, Yifu Ding, Haotong Qin, Xianglong Liu

The images are gathered from an airport and these prohibited items are annotated manually by professional inspectors, which can be used as a benchmark for model training and further facilitate future research.

Diversifying Sample Generation for Accurate Data-Free Quantization

no code implementations • CVPR 2021 • Xiangguo Zhang, Haotong Qin, Yifu Ding, Ruihao Gong, Qinghua Yan, Renshuai Tao, Yuhang Li, Fengwei Yu, Xianglong Liu

Unfortunately, we find that in practice, the synthetic data identically constrained by BN statistics suffers serious homogenization at both distribution level and sample level and further causes a significant performance drop of the quantized model.

Dual Attention Suppression Attack: Generate Adversarial Camouflage in Physical World

1 code implementation • CVPR 2021 • Jiakai Wang, Aishan Liu, Zixin Yin, Shunchang Liu, Shiyu Tang, Xianglong Liu

Deep learning models are vulnerable to adversarial examples.

A Comprehensive Evaluation Framework for Deep Model Robustness

no code implementations • 24 Jan 2021 • Jun Guo, Wei Bao, Jiakai Wang, Yuqing Ma, Xinghai Gao, Gang Xiao, Aishan Liu, Jian Dong, Xianglong Liu, Wenjun Wu

To mitigate this problem, we establish a model robustness evaluation framework containing 23 comprehensive and rigorous metrics, which consider two key perspectives of adversarial learning (i.e., data and model).

Towards Overcoming False Positives in Visual Relationship Detection

no code implementations • 23 Dec 2020 • Daisheng Jin, Xiao Ma, Chongzhi Zhang, Yizhuo Zhou, Jiashu Tao, Mingyuan Zhang, Haiyu Zhao, Shuai Yi, Zhoujun Li, Xianglong Liu, Hongsheng Li

We observe that during training, the relationship proposal distribution is highly imbalanced: most of the negative relationship proposals are easy to identify, e.g., the inaccurate object detection, which leads to the under-fitting of low-frequency difficult proposals.

Towards Defending Multiple $\ell_p$-norm Bounded Adversarial Perturbations via Gated Batch Normalization

1 code implementation • 3 Dec 2020 • Aishan Liu, Shiyu Tang, Xinyun Chen, Lei Huang, Haotong Qin, Xianglong Liu, DaCheng Tao

In this paper, we observe that different $\ell_p$ bounded adversarial perturbations induce different statistical properties that can be separated and characterized by the statistics of Batch Normalization (BN).

BiPointNet: Binary Neural Network for Point Clouds

1 code implementation • ICLR 2021 • Haotong Qin, Zhongang Cai, Mingyuan Zhang, Yifu Ding, Haiyu Zhao, Shuai Yi, Xianglong Liu, Hao Su

To alleviate the resource constraint for real-time point cloud applications that run on edge devices, in this paper we present BiPointNet, the first model binarization approach for efficient deep learning on point clouds.

Patch-wise Attack for Fooling Deep Neural Network

4 code implementations • ECCV 2020 • Lianli Gao, Qilong Zhang, Jingkuan Song, Xianglong Liu, Heng Tao Shen

By adding human-imperceptible noise to clean images, the resultant adversarial examples can fool other unknown models.

Spatiotemporal Attacks for Embodied Agents

1 code implementation • ECCV 2020 • Aishan Liu, Tairan Huang, Xianglong Liu, Yitao Xu, Yuqing Ma, Xinyun Chen, Stephen J. Maybank, DaCheng Tao

Adversarial attacks are valuable for providing insights into the blind-spots of deep learning models and help improve their robustness.

Bias-based Universal Adversarial Patch Attack for Automatic Check-out

1 code implementation • ECCV 2020 • Aishan Liu, Jiakai Wang, Xianglong Liu, Bowen Cao, Chongzhi Zhang, Hang Yu

To address the problem, this paper proposes a bias-based framework to generate class-agnostic universal adversarial patches with strong generalization ability, which exploits both the perceptual and semantic bias of models.

Occluded Prohibited Items Detection: an X-ray Security Inspection Benchmark and De-occlusion Attention Module

2 code implementations • 18 Apr 2020 • Yanlu Wei, Renshuai Tao, Zhangjie Wu, Yuqing Ma, Libo Zhang, Xianglong Liu

Furthermore, to deal with the occlusion in X-ray images detection, we propose the De-occlusion Attention Module (DOAM), a plug-and-play module that can be easily inserted into and thus promote most popular detectors.

Binary Neural Networks: A Survey

2 code implementations • 31 Mar 2020 • Haotong Qin, Ruihao Gong, Xianglong Liu, Xiao Bai, Jingkuan Song, Nicu Sebe

The binary neural network, largely saving the storage and computation, serves as a promising technique for deploying deep models on resource-limited devices.

Stein Variational Inference for Discrete Distributions

no code implementations • 1 Mar 2020 • Jun Han, Fan Ding, Xianglong Liu, Lorenzo Torresani, Jian Peng, Qiang Liu

In addition, such transform can be straightforwardly employed in gradient-free kernelized Stein discrepancy to perform goodness-of-fit (GOF) test on discrete distributions.

Stratified Rule-Aware Network for Abstract Visual Reasoning

2 code implementations • 17 Feb 2020 • Sheng Hu, Yuqing Ma, Xianglong Liu, Yanlu Wei, Shihao Bai

We further point out the severe defects existing in the popular RAVEN dataset for RPM test, which prevent the fair evaluation of the abstract reasoning ability.

Unbiased Scene Graph Generation via Rich and Fair Semantic Extraction

no code implementations • 1 Feb 2020 • Bin Wen, Jie Luo, Xianglong Liu, Lei Huang

Extracting graph representation of visual scenes in image is a challenging task in computer vision.

Towards Unified INT8 Training for Convolutional Neural Network

no code implementations • CVPR 2020 • Feng Zhu, Ruihao Gong, Fengwei Yu, Xianglong Liu, Yanfei Wang, Zhelong Li, Xiuqi Yang, Junjie Yan

In this paper, we attempt to build a unified 8-bit (INT8) training framework for common convolutional neural networks from the aspects of both accuracy and speed.

Fast and Incremental Loop Closure Detection Using Proximity Graphs

1 code implementation • 25 Nov 2019 • Shan An, Guangfu Che, Fangru Zhou, Xianglong Liu, Xin Ma, Yu Chen

Visual loop closure detection, which can be considered as an image retrieval task, is an important problem in SLAM (Simultaneous Localization and Mapping) systems.

Region-wise Generative Adversarial Image Inpainting for Large Missing Areas

1 code implementation • 27 Sep 2019 • Yuqing Ma, Xianglong Liu, Shihao Bai, Lei Wang, Aishan Liu, DaCheng Tao, Edwin Hancock

To address these problems, we propose a generic inpainting framework capable of handling incomplete images on both continuous and discontinuous large missing areas, in an adversarial manner.

Balanced Binary Neural Networks with Gated Residual

1 code implementation • 26 Sep 2019 • Mingzhu Shen, Xianglong Liu, Ruihao Gong, Kai Han

In this paper, we attempt to maintain the information propagated in the forward process and propose Balanced Binary Neural Networks with Gated Residual (BBG for short).

Ranked #972 on Image Classification on ImageNet

Attention Convolutional Binary Neural Tree for Fine-Grained Visual Categorization

2 code implementations • CVPR 2020 • Ruyi Ji, Longyin Wen, Libo Zhang, Dawei Du, Yanjun Wu, Chen Zhao, Xianglong Liu, Feiyue Huang

Specifically, we incorporate convolutional operations along edges of the tree structure, and use the routing functions in each node to determine the root-to-leaf computational paths within the tree.

Ranked #33 on Fine-Grained Image Classification on Stanford Cars

Forward and Backward Information Retention for Accurate Binary Neural Networks

2 code implementations • CVPR 2020 • Haotong Qin, Ruihao Gong, Xianglong Liu, Mingzhu Shen, Ziran Wei, Fengwei Yu, Jingkuan Song

Our empirical study indicates that the quantization brings information loss in both forward and backward propagation, which is the bottleneck of training accurate binary neural networks.

Training Robust Deep Neural Networks via Adversarial Noise Propagation

no code implementations • 19 Sep 2019 • Aishan Liu, Xianglong Liu, Chongzhi Zhang, Hang Yu, Qiang Liu, DaCheng Tao

Various adversarial defense methods have accordingly been developed to improve adversarial robustness for deep models.

Interpreting and Improving Adversarial Robustness of Deep Neural Networks with Neuron Sensitivity

no code implementations • 16 Sep 2019 • Chongzhi Zhang, Aishan Liu, Xianglong Liu, Yitao Xu, Hang Yu, Yuqing Ma, Tianlin Li

In this paper, we first draw the close connection between adversarial robustness and neuron sensitivities, as sensitive neurons make the most non-trivial contributions to model predictions in the adversarial setting.

PDA: Progressive Data Augmentation for General Robustness of Deep Neural Networks

no code implementations • 11 Sep 2019 • Hang Yu, Aishan Liu, Xianglong Liu, Gengchao Li, Ping Luo, Ran Cheng, Jichen Yang, Chongzhi Zhang

In other words, DNNs trained with PDA are able to obtain more robustness against both adversarial attacks and common corruptions than the recent state-of-the-art methods.

Differentiable Soft Quantization: Bridging Full-Precision and Low-Bit Neural Networks

2 code implementations • ICCV 2019 • Ruihao Gong, Xianglong Liu, Shenghu Jiang, Tianxiang Li, Peng Hu, Jiazhen Lin, Fengwei Yu, Junjie Yan

Hardware-friendly network quantization (e. g., binary/uniform quantization) can efficiently accelerate the inference and meanwhile reduce memory consumption of the deep neural networks, which is crucial for model deployment on resource-limited devices like mobile phones.

Query-Adaptive Hash Code Ranking for Large-Scale Multi-View Visual Search

no code implementations • 18 Apr 2019 • Xianglong Liu, Lei Huang, Cheng Deng, Bo Lang, DaCheng Tao

For each hash table, a query-adaptive bitwise weighting is introduced to alleviate the quantization loss by simultaneously exploiting the quality of hash functions and their complement for nearest neighbor search.

Active Multi-Kernel Domain Adaptation for Hyperspectral Image Classification

no code implementations • 10 Apr 2019 • Cheng Deng, Xianglong Liu, Chao Li, DaCheng Tao

Recent years have witnessed rapid progress in hyperspectral image (HSI) classification.

Triplet-Based Deep Hashing Network for Cross-Modal Retrieval

no code implementations • 4 Apr 2019 • Cheng Deng, Zhaojia Chen, Xianglong Liu, Xinbo Gao, DaCheng Tao

Given the benefits of its low storage requirements and high retrieval efficiency, hashing has recently received increasing attention.

Active Transfer Learning Network: A Unified Deep Joint Spectral-Spatial Feature Learning Model For Hyperspectral Image Classification

no code implementations • 4 Apr 2019 • Cheng Deng, Yumeng Xue, Xianglong Liu, Chao Li, DaCheng Tao

The advantages of our proposed method are threefold: 1) the network can be effectively trained using only limited labeled samples with the help of novel active learning strategies; 2) the network is flexible and scalable enough to function across various transfer situations, including cross-dataset and intra-image; 3) the learned deep joint spectral-spatial feature representation is more generic and robust than many joint spectral-spatial feature representations.

Coupled CycleGAN: Unsupervised Hashing Network for Cross-Modal Retrieval

no code implementations • 6 Mar 2019 • Chao Li, Cheng Deng, Lei Wang, De Xie, Xianglong Liu

In recent years, hashing has attracted more and more attention owing to its superior capacity of low storage cost and high query efficiency in large-scale cross-modal retrieval.

Direct Shape Regression Networks for End-to-End Face Alignment

no code implementations • CVPR 2018 • Xin Miao, Xian-Tong Zhen, Xianglong Liu, Cheng Deng, Vassilis Athitsos, Heng Huang

In this paper, we propose the direct shape regression network (DSRN) for end-to-end face alignment by jointly handling the aforementioned challenges in a unified framework.

Ranked #16 on Face Alignment on AFLW-19

Fast Subspace Clustering Based on the Kronecker Product

no code implementations • 15 Mar 2018 • Lei Zhou, Xiao Bai, Xianglong Liu, Jun Zhou, Edwin Hancock

Therefore, the efficiency and scalability of traditional spectral clustering methods cannot be guaranteed for large-scale datasets.

Orthogonal Weight Normalization: Solution to Optimization over Multiple Dependent Stiefel Manifolds in Deep Neural Networks

1 code implementation • The Thirty-Second AAAI Conference on Artificial Intelligence 2018 • Lei Huang, Xianglong Liu, Bo Lang, Adams Wei Yu, Yongliang Wang, Bo Li

In this paper, we generalize such square orthogonal matrices to orthogonal rectangular matrices and formulate this problem in feed-forward Neural Networks (FNNs) as Optimization over Multiple Dependent Stiefel Manifolds (OMDSM).

Projection Based Weight Normalization for Deep Neural Networks

1 code implementation • 6 Oct 2017 • Lei Huang, Xianglong Liu, Bo Lang, Bo Li

We conduct comprehensive experiments on several widely-used image datasets including CIFAR-10, CIFAR-100, SVHN and ImageNet for supervised learning over the state-of-the-art convolutional neural networks, such as Inception, VGG and residual networks.

Centered Weight Normalization in Accelerating Training of Deep Neural Networks

1 code implementation • ICCV 2017 • Lei Huang, Xianglong Liu, Yang Liu, Bo Lang, DaCheng Tao

Training deep neural networks is difficult due to the pathological curvature problem.

Orthogonal Weight Normalization: Solution to Optimization over Multiple Dependent Stiefel Manifolds in Deep Neural Networks

1 code implementation • 16 Sep 2017 • Lei Huang, Xianglong Liu, Bo Lang, Adams Wei Yu, Yongliang Wang, Bo Li

In this paper, we generalize such square orthogonal matrices to orthogonal rectangular matrices and formulate this problem in feed-forward Neural Networks (FNNs) as Optimization over Multiple Dependent Stiefel Manifolds (OMDSM).

Deep Sketch Hashing: Fast Free-hand Sketch-Based Image Retrieval

1 code implementation • CVPR 2017 • Li Liu, Fumin Shen, Yuming Shen, Xianglong Liu, Ling Shao

Free-hand sketch-based image retrieval (SBIR) is a specific cross-view retrieval task, in which queries are abstract and ambiguous sketches while the retrieval database is formed with natural images.

Multilinear Hyperplane Hashing

no code implementations • CVPR 2016 • Xianglong Liu, Xinjie Fan, Cheng Deng, Zhujin Li, Hao Su, DaCheng Tao

Despite its successful progress in classic point-to-point search, there are few studies regarding point-to-hyperplane search, which has strong practical capabilities of scaling up in many applications like active learning with SVMs.

Multi-View Complementary Hash Tables for Nearest Neighbor Search

no code implementations • ICCV 2015 • Xianglong Liu, Lei Huang, Cheng Deng, Jiwen Lu, Bo Lang

have enjoyed the benefits of complementary hash tables and information fusion over multiple views.

Collaborative Hashing

no code implementations • CVPR 2014 • Xianglong Liu, Junfeng He, Cheng Deng, Bo Lang

The hashing technique has become a promising approach for fast similarity search.

Hash Bit Selection: A Unified Solution for Selection Problems in Hashing

no code implementations • CVPR 2013 • Xianglong Liu, Junfeng He, Bo Lang, Shih-Fu Chang

We represent the bit pool as a vertex- and edge-weighted graph with the candidate bits as vertices.