Search Results for author: Thomas M. Moerland

Found 19 papers, 11 papers with code

Explicitly Disentangled Representations in Object-Centric Learning

1 code implementation • 18 Jan 2024 • Riccardo Majellaro, Jonathan Collu, Aske Plaat, Thomas M. Moerland

Extracting structured representations from raw visual data is an important and long-standing challenge in machine learning.

EduGym: An Environment and Notebook Suite for Reinforcement Learning Education

1 code implementation • 17 Nov 2023 • Thomas M. Moerland, Matthias Müller-Brockhausen, Zhao Yang, Andrius Bernatavicius, Koen Ponse, Tom Kouwenhoven, Andreas Sauter, Michiel van der Meer, Bram Renting, Aske Plaat

To solve this issue we introduce EduGym, a set of educational reinforcement learning environments and associated interactive notebooks tailored for education.

Are LSTMs Good Few-Shot Learners?

1 code implementation • 22 Oct 2023 • Mike Huisman, Thomas M. Moerland, Aske Plaat, Jan N. van Rijn

Meta-learning overcomes this limitation by learning how to learn.

What model does MuZero learn?

no code implementations • 1 Jun 2023 • Jinke He, Thomas M. Moerland, Frans A. Oliehoek

Model-based reinforcement learning has drawn considerable interest in recent years, given its promise to improve sample efficiency.

First Go, then Post-Explore: the Benefits of Post-Exploration in Intrinsic Motivation

no code implementations • 6 Dec 2022 • Zhao Yang, Thomas M. Moerland, Mike Preuss, Aske Plaat

In this paper, we present a clear ablation study of post-exploration in a general intrinsically motivated goal exploration process (IMGEP) framework, which the Go-Explore paper did not provide.

Continuous Episodic Control

no code implementations • 28 Nov 2022 • Zhao Yang, Thomas M. Moerland, Mike Preuss, Aske Plaat

Therefore, this paper introduces Continuous Episodic Control (CEC), a novel non-parametric episodic memory algorithm for sequential decision making in problems with a continuous action space.

When to Go, and When to Explore: The Benefit of Post-Exploration in Intrinsic Motivation

no code implementations • 29 Mar 2022 • Zhao Yang, Thomas M. Moerland, Mike Preuss, Aske Plaat

Go-Explore achieved breakthrough performance on challenging reinforcement learning (RL) tasks with sparse rewards.

On Credit Assignment in Hierarchical Reinforcement Learning

1 code implementation • 7 Mar 2022 • Joery A. de Vries, Thomas M. Moerland, Aske Plaat

To improve our fundamental understanding of HRL, we investigate hierarchical credit assignment from the perspective of conventional multistep reinforcement learning.

Hierarchical Reinforcement Learning

reinforcement-learning

Visualizing MuZero Models

1 code implementation • ICML Workshop URL 2021 • Joery A. de Vries, Ken S. Voskuil, Thomas M. Moerland, Aske Plaat

In contrast to standard forward dynamics models that predict a full next state, value equivalent models are trained to predict a future value, thereby emphasizing value relevant information in the representations.

Model-based Reinforcement Learning: A Survey

no code implementations • 30 Jun 2020 • Thomas M. Moerland, Joost Broekens, Aske Plaat, Catholijn M. Jonker

Two key approaches to this problem are reinforcement learning (RL) and planning.

A Unifying Framework for Reinforcement Learning and Planning

no code implementations • 26 Jun 2020 • Thomas M. Moerland, Joost Broekens, Aske Plaat, Catholijn M. Jonker

Therefore, this paper presents a unifying algorithmic framework for reinforcement learning and planning (FRAP), which identifies underlying dimensions on which MDP planning and learning algorithms have to decide.

The Second Type of Uncertainty in Monte Carlo Tree Search

1 code implementation • 19 May 2020 • Thomas M. Moerland, Joost Broekens, Aske Plaat, Catholijn M. Jonker

Monte Carlo Tree Search (MCTS) efficiently balances exploration and exploitation in tree search based on count-derived uncertainty.

Think Too Fast Nor Too Slow: The Computational Trade-off Between Planning And Reinforcement Learning

1 code implementation • 15 May 2020 • Thomas M. Moerland, Anna Deichler, Simone Baldi, Joost Broekens, Catholijn M. Jonker

Planning and reinforcement learning are two key approaches to sequential decision making.

The Potential of the Return Distribution for Exploration in RL

1 code implementation • 11 Jun 2018 • Thomas M. Moerland, Joost Broekens, Catholijn M. Jonker

This paper studies the potential of the return distribution for exploration in deterministic reinforcement learning (RL) environments.

A0C: Alpha Zero in Continuous Action Space

2 code implementations • 24 May 2018 • Thomas M. Moerland, Joost Broekens, Aske Plaat, Catholijn M. Jonker

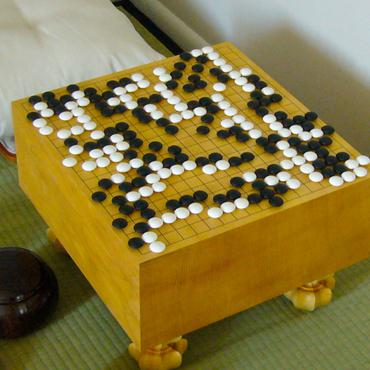

A core novelty of Alpha Zero is the interleaving of tree search and deep learning, which has proven very successful in board games like Chess, Shogi and Go.

Monte Carlo Tree Search for Asymmetric Trees

2 code implementations • 23 May 2018 • Thomas M. Moerland, Joost Broekens, Aske Plaat, Catholijn M. Jonker

Asymmetric termination of search trees introduces a type of uncertainty for which the standard upper confidence bound (UCB) formula does not account.

Efficient exploration with Double Uncertain Value Networks

no code implementations • 29 Nov 2017 • Thomas M. Moerland, Joost Broekens, Catholijn M. Jonker

This paper studies directed exploration for reinforcement learning agents by tracking uncertainty about the value of each available action.

Emotion in Reinforcement Learning Agents and Robots: A Survey

no code implementations • 15 May 2017 • Thomas M. Moerland, Joost Broekens, Catholijn M. Jonker

This article provides the first survey of computational models of emotion in reinforcement learning (RL) agents.

Learning Multimodal Transition Dynamics for Model-Based Reinforcement Learning

1 code implementation • 1 May 2017 • Thomas M. Moerland, Joost Broekens, Catholijn M. Jonker

In this paper we study how to learn stochastic, multimodal transition dynamics in reinforcement learning (RL) tasks.

Model-based Reinforcement Learning

reinforcement-learning