Search Results for author: Nicholas Watters

Found 8 papers, 6 papers with code

Modeling Human Eye Movements with Neural Networks in a Maze-Solving Task

1 code implementation • 20 Dec 2022 • Jason Li, Nicholas Watters, Yingting Wang, Hansem Sohn, Mehrdad Jazayeri

This not only provides a generative model of eye movements in this task but also suggests a computational theory for how humans solve the task, namely that humans use mental simulation.

Modular Object-Oriented Games: A Task Framework for Reinforcement Learning, Psychology, and Neuroscience

1 code implementation • 25 Feb 2021 • Nicholas Watters, Joshua Tenenbaum, Mehrdad Jazayeri

In recent years, trends towards studying simulated games have gained momentum in the fields of artificial intelligence, cognitive science, psychology, and neuroscience.

Unsupervised Model Selection for Variational Disentangled Representation Learning

no code implementations • ICLR 2020 • Sunny Duan, Loic Matthey, Andre Saraiva, Nicholas Watters, Christopher P. Burgess, Alexander Lerchner, Irina Higgins

Disentangled representations have recently been shown to improve fairness, data efficiency and generalisation in simple supervised and reinforcement learning tasks.

COBRA: Data-Efficient Model-Based RL through Unsupervised Object Discovery and Curiosity-Driven Exploration

1 code implementation • 22 May 2019 • Nicholas Watters, Loic Matthey, Matko Bosnjak, Christopher P. Burgess, Alexander Lerchner

Data efficiency and robustness to task-irrelevant perturbations are long-standing challenges for deep reinforcement learning algorithms.

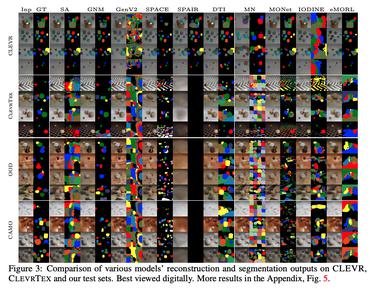

MONet: Unsupervised Scene Decomposition and Representation

5 code implementations • 22 Jan 2019 • Christopher P. Burgess, Loic Matthey, Nicholas Watters, Rishabh Kabra, Irina Higgins, Matt Botvinick, Alexander Lerchner

The ability to decompose scenes in terms of abstract building blocks is crucial for general intelligence.

Spatial Broadcast Decoder: A Simple Architecture for Learning Disentangled Representations in VAEs

2 code implementations • 21 Jan 2019 • Nicholas Watters, Loic Matthey, Christopher P. Burgess, Alexander Lerchner

We present a simple neural rendering architecture that helps variational autoencoders (VAEs) learn disentangled representations.

Visual Interaction Networks: Learning a Physics Simulator from Video

no code implementations • NeurIPS 2017 • Nicholas Watters, Daniel Zoran, Theophane Weber, Peter Battaglia, Razvan Pascanu, Andrea Tacchetti

We introduce the Visual Interaction Network, a general-purpose model for learning the dynamics of a physical system from raw visual observations.

Visual Interaction Networks

3 code implementations • 5 Jun 2017 • Nicholas Watters, Andrea Tacchetti, Theophane Weber, Razvan Pascanu, Peter Battaglia, Daniel Zoran

We found that from just six input video frames the Visual Interaction Network can generate accurate future trajectories of hundreds of time steps on a wide range of physical systems.