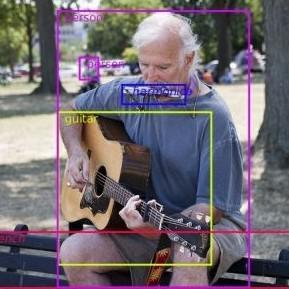

Zero-Shot Object Detection

26 papers with code • 7 benchmarks • 6 datasets

Zero-shot object detection (ZSD) is the task of object detection where no visual training data is available for some of the target object classes.

(Image credit: Zero-Shot Object Detection: Learning to Simultaneously Recognize and Localize Novel Concepts)
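One common way to make the definition above concrete is to score each detected region against semantic embeddings of the class names, so that a class with no visual training data can still be predicted. The sketch below is only an illustration under that assumption, not the method of any paper listed here: the class names, the three-dimensional vectors, and the `label_region` helper are all hypothetical, and a real system would obtain both region and class embeddings from trained models.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


# Hypothetical semantic embeddings of class names (made-up values).
# "zebra" stands in for an unseen class: it has an embedding,
# but the detector was never trained on zebra boxes.
CLASS_EMBEDDINGS = {
    "cat": [0.9, 0.1, 0.0],    # seen class
    "car": [0.0, 0.2, 0.9],    # seen class
    "zebra": [0.6, 0.7, 0.1],  # unseen class
}


def label_region(region_embedding, class_embeddings):
    """Return the best-matching class name and its similarity score."""
    scores = {
        name: cosine(region_embedding, vec)
        for name, vec in class_embeddings.items()
    }
    best = max(scores, key=scores.get)
    return best, scores[best]


# Hypothetical embedding of one region proposal, already projected
# into the same space as the class embeddings.
region = [0.55, 0.75, 0.05]
label, score = label_region(region, CLASS_EMBEDDINGS)
print(label, round(score, 3))
```

Because the unseen class competes with the seen classes in the same embedding space, the region can be assigned to it even though no box annotations for it were available at training time.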

Libraries

Use these libraries to find Zero-Shot Object Detection models and implementations.

Latest papers

T-Rex2: Towards Generic Object Detection via Text-Visual Prompt Synergy

Recognizing the complementary strengths and weaknesses of both text and visual prompts, we introduce T-Rex2 that synergizes both prompts within a single model through contrastive learning.

SeeDS: Semantic Separable Diffusion Synthesizer for Zero-shot Food Detection

To tackle this, we propose the Semantic Separable Diffusion Synthesizer (SeeDS) framework for Zero-Shot Food Detection (ZSFD).

ViLLA: Fine-Grained Vision-Language Representation Learning from Real-World Data

The first key contribution of this work is to demonstrate through systematic evaluations that as the pairwise complexity of the training dataset increases, standard VLMs struggle to learn region-attribute relationships, exhibiting performance degradations of up to 37% on retrieval tasks.

Scaling Open-Vocabulary Object Detection

However, with OWL-ST, we can scale to over 1B examples, yielding further large improvement: With an L/14 architecture, OWL-ST improves AP on LVIS rare classes, for which the model has seen no human box annotations, from 31.2% to 44.6% (43% relative improvement).
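The 43% figure quoted above is a relative gain, not a difference in AP points. A minimal check of the arithmetic, using only the two AP values from the excerpt (the `relative_improvement` helper is a name introduced here for illustration):

```python
def relative_improvement(before, after):
    """Fractional gain of `after` over `before`."""
    return (after - before) / before


ap_before = 31.2  # AP on LVIS rare classes before, from the excerpt
ap_after = 44.6   # AP on LVIS rare classes with OWL-ST, from the excerpt

gain = relative_improvement(ap_before, ap_after)
print(f"absolute gain: {ap_after - ap_before:.1f} AP points")
print(f"relative gain: {gain:.1%}")
```

The absolute gain is 13.4 AP points, and the relative gain rounds to the 43% reported.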

Multi-modal Queried Object Detection in the Wild

To address the learning inertia problem brought by the frozen detector, a vision conditioned masked language prediction strategy is proposed.

DoUnseen: Tuning-Free Class-Adaptive Object Detection of Unseen Objects for Robotic Grasping

In this work, we are interested in open sets where the number of classes is unknown, varying, and without pre-knowledge about the objects' types.

ZBS: Zero-shot Background Subtraction via Instance-level Background Modeling and Foreground Selection

However, previous unsupervised deep learning BGS algorithms perform poorly in sophisticated scenarios such as shadows or night lights, and they cannot detect objects outside the pre-defined categories.

Efficient Feature Distillation for Zero-shot Annotation Object Detection

We propose a new setting for detecting unseen objects called Zero-shot Annotation object Detection (ZAD).

Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection

To effectively fuse language and vision modalities, we conceptually divide a closed-set detector into three phases and propose a tight fusion solution, which includes a feature enhancer, a language-guided query selection, and a cross-modality decoder for cross-modality fusion.

Resolving Semantic Confusions for Improved Zero-Shot Detection

Zero-shot detection (ZSD) is a challenging task where we aim to recognize and localize objects simultaneously, even when our model has not been trained with visual samples of a few target ("unseen") classes.

MS COCO

LVIS

PASCAL VOC 2007

MSCOCO

RF100
