Scene Graph Generation

110 papers with code • 5 benchmarks • 7 datasets

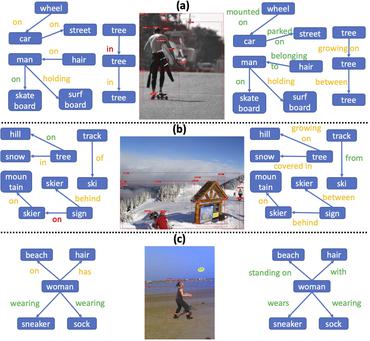

A scene graph is a structured representation of an image in which nodes correspond to object bounding boxes labeled with their object categories, and edges correspond to pairwise relationships between those objects. The task of Scene Graph Generation (SGG) is to generate a visually grounded scene graph that represents an image as accurately as possible.
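To make the definition concrete, here is a minimal Python sketch of one possible in-memory representation: object nodes carry a category and a bounding box, and relation edges point from a subject node to an object node with a predicate label. The class names (`ObjectNode`, `RelationEdge`, `SceneGraph`), the `(x1, y1, x2, y2)` box convention, and the example labels are assumptions for illustration, not the format of any particular dataset. The `triplet_recall` helper is a simplified form of triplet matching: a predicted relation counts as correct if its subject, predicate, and object labels all match and both boxes overlap the ground truth at IoU ≥ 0.5. Benchmark Recall@K additionally ranks predictions by confidence and keeps only the top K, which this sketch omits.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Assumed box convention: (x1, y1, x2, y2) in pixels.
Box = Tuple[float, float, float, float]

@dataclass
class ObjectNode:
    category: str  # object class label, e.g. "man"
    box: Box       # visual grounding of the node

@dataclass
class RelationEdge:
    subject: int    # index into SceneGraph.objects
    predicate: str  # relationship label, e.g. "riding"
    obj: int        # index into SceneGraph.objects

@dataclass
class SceneGraph:
    objects: List[ObjectNode] = field(default_factory=list)
    relations: List[RelationEdge] = field(default_factory=list)

    def triplets(self) -> List[Tuple[str, str, str]]:
        """Return the <subject, predicate, object> category triplets."""
        return [(self.objects[r.subject].category, r.predicate,
                 self.objects[r.obj].category) for r in self.relations]

def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter = (max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
             * max(0.0, min(a[3], b[3]) - max(a[1], b[1])))
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def triplet_recall(pred: SceneGraph, gt: SceneGraph, iou_thr: float = 0.5) -> float:
    """Fraction of ground-truth relations matched by at least one predicted
    relation with identical labels and both boxes at IoU >= iou_thr."""
    if not gt.relations:
        return 0.0
    hits = 0
    for g in gt.relations:
        gs, go = gt.objects[g.subject], gt.objects[g.obj]
        for p in pred.relations:
            ps, po = pred.objects[p.subject], pred.objects[p.obj]
            if (p.predicate == g.predicate
                    and ps.category == gs.category and po.category == go.category
                    and iou(ps.box, gs.box) >= iou_thr
                    and iou(po.box, go.box) >= iou_thr):
                hits += 1
                break  # each ground-truth relation is counted at most once
    return hits / len(gt.relations)

# Hypothetical example: one annotated relation and a close prediction.
gt = SceneGraph(
    objects=[ObjectNode("man", (10, 10, 60, 120)), ObjectNode("horse", (40, 50, 200, 160))],
    relations=[RelationEdge(0, "riding", 1)],
)
pred = SceneGraph(
    objects=[ObjectNode("man", (12, 8, 62, 118)), ObjectNode("horse", (42, 52, 198, 158))],
    relations=[RelationEdge(0, "riding", 1)],
)
print(gt.triplets())              # [('man', 'riding', 'horse')]
print(triplet_recall(pred, gt))   # 1.0
```

Storing edges as indices into the node list keeps each object's box and label in one place, so several relations can share the same grounded node.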

Latest papers

Adaptive Visual Scene Understanding: Incremental Scene Graph Generation

To address the lack of continual learning methodologies in SGG, we introduce the comprehensive Continual ScenE Graph Generation (CSEGG) dataset along with 3 learning scenarios and 8 evaluation metrics.

Less is More: Toward Zero-Shot Local Scene Graph Generation via Foundation Models

To fill this gap, we present a new task called Local Scene Graph Generation.

Spatial-Temporal Knowledge-Embedded Transformer for Video Scene Graph Generation

In this work, we propose a spatial-temporal knowledge-embedded transformer (STKET) that incorporates prior spatial-temporal knowledge into the multi-head cross-attention mechanism to learn more representative relationship representations.

Zero-Shot Scene Graph Generation via Triplet Calibration and Reduction

In our framework, a triplet calibration loss is first presented to regularize the representations of diverse triplets and, simultaneously, to mine unseen triplets in incompletely annotated training scene graphs.

Towards Addressing the Misalignment of Object Proposal Evaluation for Vision-Language Tasks via Semantic Grounding

Object proposal generation serves as a standard pre-processing step in Vision-Language (VL) tasks (image captioning, visual question answering, etc.).

Head-Tail Cooperative Learning Network for Unbiased Scene Graph Generation

We also propose a self-supervised learning approach to enhance the prediction ability of the tail-prefer feature representation branch by constraining tail-prefer predicate features.

RLIPv2: Fast Scaling of Relational Language-Image Pre-training

In this paper, we propose RLIPv2, a fast converging model that enables the scaling of relational pre-training to large-scale pseudo-labelled scene graph data.

Vision Relation Transformer for Unbiased Scene Graph Generation

Recent years have seen a growing interest in Scene Graph Generation (SGG), a comprehensive visual scene understanding task that aims to predict entity relationships using a relation encoder-decoder pipeline stacked on top of an object encoder-decoder backbone.

Compositional Feature Augmentation for Unbiased Scene Graph Generation

Specifically, we first decompose each relation triplet feature into two components: intrinsic feature and extrinsic feature, which correspond to the intrinsic characteristics and extrinsic contexts of a relation triplet, respectively.

Environment-Invariant Curriculum Relation Learning for Fine-Grained Scene Graph Generation

Then, we construct a class-balanced curriculum learning strategy to balance the different environments to remove the predicate imbalance.
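Several of the papers above, for example the Vision Relation Transformer entry, describe SGG as a relation stage stacked on top of an object stage. The simplest form of that idea can be sketched in Python as follows: the object stage supplies one feature vector per detected object, and the relation stage scores every ordered (subject, object) pair over a predicate vocabulary. This is a minimal illustration under stated assumptions, not any listed paper's method: the random feature vectors stand in for detector outputs, the untrained random weights stand in for a learned classifier, a concatenation followed by one linear layer replaces a real relation encoder-decoder, and `PREDICATES` is a hypothetical vocabulary.

```python
import itertools
import math
import random
from typing import List, Sequence, Tuple

# Hypothetical predicate vocabulary; datasets such as Visual Genome define their own.
PREDICATES = ["on", "holding", "riding", "no_relation"]

def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def pair_logits(subj: Sequence[float], obj: Sequence[float],
                weights: Sequence[Sequence[float]]) -> List[float]:
    """Linear relation head: concatenate subject and object features,
    then take one dot product per predicate (one weight row each)."""
    pair = list(subj) + list(obj)
    return [sum(w * x for w, x in zip(row, pair)) for row in weights]

def predict_relations(object_feats: Sequence[Sequence[float]],
                      weights: Sequence[Sequence[float]]) -> List[Tuple[int, str, int, float]]:
    """Score every ordered pair (i, j) with i != j and return
    (subject index, predicate, object index, probability), most confident first."""
    out = []
    for i, j in itertools.permutations(range(len(object_feats)), 2):
        probs = softmax(pair_logits(object_feats[i], object_feats[j], weights))
        k = max(range(len(probs)), key=probs.__getitem__)  # argmax predicate
        out.append((i, PREDICATES[k], j, probs[k]))
    # Rank by confidence so a caller can keep only the top-K triplets.
    return sorted(out, key=lambda t: t[3], reverse=True)

# Stand-ins for the object stage: 3 detected objects with 4-d features.
rng = random.Random(0)
DIM = 4
object_feats = [[rng.uniform(-1, 1) for _ in range(DIM)] for _ in range(3)]
weights = [[rng.uniform(-1, 1) for _ in range(2 * DIM)] for _ in PREDICATES]

for subj, pred, obj, p in predict_relations(object_feats, weights):
    print(f"object {subj} --{pred}--> object {obj}  (p={p:.2f})")
```

Because the relation stage considers every ordered pair, n detected objects produce n(n - 1) candidates (6 for the 3 objects here), so the cost of the relation stage grows quadratically with the number of detections.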

Datasets

MS COCO

Visual Genome

VRD

3DSSG

3RScan

PSG Dataset

4D-OR