Scene Graph Generation

110 papers with code • 5 benchmarks • 7 datasets

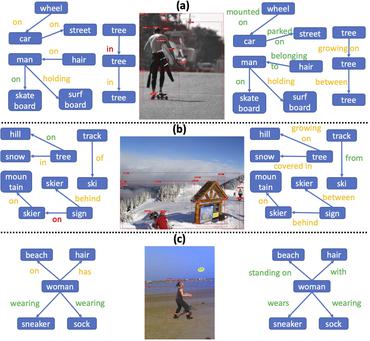

A scene graph is a structured representation of an image in which nodes correspond to object bounding boxes with their object categories, and edges correspond to the pairwise relationships between those objects. The task of Scene Graph Generation is to generate a visually grounded scene graph that most accurately corresponds to an image.
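The structure described above can be sketched as a small data type. This is a minimal illustration, not the format of any particular dataset or library: the class names (`SceneObject`, `Relation`, `SceneGraph`), the `(x_min, y_min, x_max, y_max)` box convention, and the example labels are all assumptions made for the sketch.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Assumed box convention for this sketch: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


@dataclass(frozen=True)
class SceneObject:
    """A node: one object with its category label and bounding box."""
    category: str
    box: Box


@dataclass(frozen=True)
class Relation:
    """A directed edge between two nodes, labeled with a predicate."""
    subject: int    # index into SceneGraph.objects
    predicate: str
    obj: int        # index into SceneGraph.objects


@dataclass
class SceneGraph:
    """Hypothetical container holding the nodes and edges for one image."""
    objects: List[SceneObject] = field(default_factory=list)
    relations: List[Relation] = field(default_factory=list)

    def add_object(self, category: str, box: Box) -> int:
        """Append a node and return its index."""
        self.objects.append(SceneObject(category, box))
        return len(self.objects) - 1

    def add_relation(self, subject: int, predicate: str, obj: int) -> None:
        """Append an edge; both endpoints must be existing nodes."""
        for idx in (subject, obj):
            if not 0 <= idx < len(self.objects):
                raise IndexError(f"no object with index {idx}")
        self.relations.append(Relation(subject, predicate, obj))

    def triples(self) -> List[Tuple[str, str, str]]:
        """Return edges as (subject category, predicate, object category)."""
        return [
            (self.objects[r.subject].category, r.predicate, self.objects[r.obj].category)
            for r in self.relations
        ]


# Made-up example: two objects and one relationship between them.
graph = SceneGraph()
man = graph.add_object("man", (10.0, 20.0, 110.0, 220.0))
horse = graph.add_object("horse", (60.0, 80.0, 300.0, 260.0))
graph.add_relation(man, "riding", horse)
print(graph.triples())
```

Storing edges as index pairs rather than category names keeps two objects of the same category distinct, which matters because each node is tied to its own bounding box.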

Latest papers with no code

A Review and Efficient Implementation of Scene Graph Generation Metrics

Scene graph generation has emerged as a prominent research field in computer vision, witnessing significant advancements in recent years.

Tri-modal Confluence with Temporal Dynamics for Scene Graph Generation in Operating Rooms

A comprehensive understanding of surgical scenes allows for monitoring of the surgical process, reducing the occurrence of accidents and enhancing efficiency for medical professionals.

AUG: A New Dataset and An Efficient Model for Aerial Image Urban Scene Graph Generation

To fill in the gap of the overhead view dataset, this paper constructs and releases an aerial image urban scene graph generation (AUG) dataset.

ORacle: Large Vision-Language Models for Knowledge-Guided Holistic OR Domain Modeling

This demonstrates ORacle's potential to significantly enhance the scalability and affordability of OR domain modeling and opens a pathway for future advancements in surgical data science.

SportsHHI: A Dataset for Human-Human Interaction Detection in Sports Videos

We hope that SportsHHI can stimulate research on human interaction understanding in videos and promote the development of spatio-temporal context modeling techniques in video visual relation detection.

Weakly-Supervised 3D Scene Graph Generation via Visual-Linguistic Assisted Pseudo-labeling

However, previous 3D scene graph generation methods utilize a fully supervised learning manner and require a large amount of entity-level annotation data of objects and relations, which is extremely resource-consuming and tedious to obtain.

From Pixels to Graphs: Open-Vocabulary Scene Graph Generation with Vision-Language Models

Scene graph generation (SGG) aims to parse a visual scene into an intermediate graph representation for downstream reasoning tasks.

Improving Scene Graph Generation with Relation Words' Debiasing in Vision-Language Models

After that, we ensemble VLMs with SGG models to enhance representation.

DSGG: Dense Relation Transformer for an End-to-end Scene Graph Generation

Scene graph generation aims to capture detailed spatial and semantic relationships between objects in an image, which is challenging due to incomplete labelling, long-tailed relationship categories, and relational semantic overlap.

Mapping High-level Semantic Regions in Indoor Environments without Object Recognition

Robots require a semantic understanding of their surroundings to operate in an efficient and explainable way in human environments.

Datasets

MS COCO

Visual Genome

VRD

3DSSG

3RScan

PSG Dataset

4D-OR