Transformers Generalize DeepSets and Can be Extended to Graphs and Hypergraphs

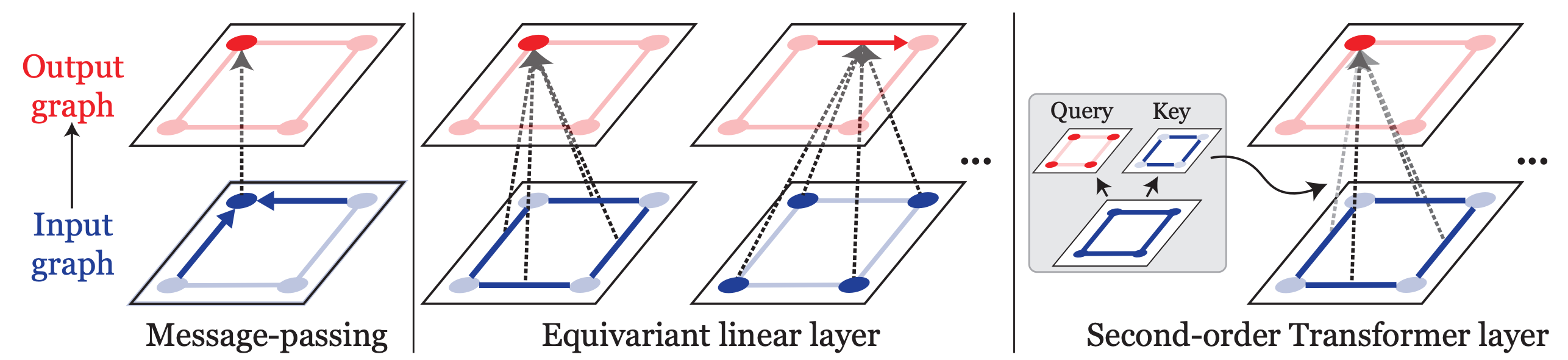

We present a generalization of Transformers to any-order permutation invariant data (sets, graphs, and hypergraphs). We begin by observing that Transformers generalize DeepSets, or first-order (set-input) permutation invariant MLPs. Then, based on recently characterized higher-order invariant MLPs, we extend the concept of self-attention to higher orders and propose higher-order Transformers for order-$k$ data ($k=2$ for graphs and $k>2$ for hypergraphs). Unfortunately, higher-order Transformers turn out to have a prohibitive complexity of $\mathcal{O}(n^{2k})$ in the number of input nodes $n$. To address this problem, we present sparse higher-order Transformers whose complexity is quadratic in the number of input hyperedges, and further adopt the kernel attention approach to reduce the complexity to linear. In particular, we show that sparse second-order Transformers with kernel attention are theoretically more expressive than message-passing operations while having asymptotically identical complexity. Our models achieve significant performance improvements over invariant MLPs and message-passing graph neural networks in large-scale graph regression and set-to-(hyper)graph prediction tasks. Our implementation is available at https://github.com/jw9730/hot.
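Two claims in the abstract can be checked numerically on a toy set input. The NumPy sketch below is illustrative only and is not taken from the authors' repository: the function names, the mean-pooling form of the DeepSets layer, and the `elu(x) + 1` feature map are all assumptions chosen for the example. It shows (1) that softmax self-attention with zero query and key weights collapses to uniform pooling, which is the pooling term of a DeepSets-style layer, and (2) that kernel attention gives the same output whether it is computed with the explicit $n \times n$ attention matrix or with the reordered product that is linear in $n$.

```python
import numpy as np

def softmax(z, axis=-1):
    # Numerically stable softmax.
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, Wq, Wk, Wv):
    # Standard single-head softmax self-attention over n set elements.
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    A = softmax(Q @ K.T / np.sqrt(Wq.shape[1]))  # (n, n) attention matrix
    return A @ V

def deepsets_layer(X, W_self, W_pool):
    # Assumed mean-pooling variant of a DeepSets equivariant linear layer:
    # a per-element term plus a pooled term broadcast to every element.
    pooled = X.mean(axis=0, keepdims=True)
    return X @ W_self + pooled @ W_pool

def feature_map(x):
    # elu(x) + 1, a positive feature map (an assumption for this sketch).
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))

def kernel_attention_quadratic(X, Wq, Wk, Wv):
    # Builds the full (n, n) kernel matrix: O(n^2) time and memory.
    Q, K, V = feature_map(X @ Wq), feature_map(X @ Wk), X @ Wv
    A = Q @ K.T
    return (A / A.sum(axis=1, keepdims=True)) @ V

def kernel_attention_linear(X, Wq, Wk, Wv):
    # Same output via associativity: Q (K^T V), never forming (n, n).
    Q, K, V = feature_map(X @ Wq), feature_map(X @ Wk), X @ Wv
    KV = K.T @ V              # (d, d) summary of keys and values
    norm = Q @ K.sum(axis=0)  # (n,) row normalizers
    return (Q @ KV) / norm[:, None]

rng = np.random.default_rng(0)
n, d = 6, 4
X = rng.normal(size=(n, d))
Wq, Wk, Wv = (rng.normal(size=(d, d)) for _ in range(3))
zeros = np.zeros((d, d))

# (1) Zero query/key weights give uniform attention, i.e. mean pooling.
uniform_attn = self_attention(X, zeros, zeros, Wv)
pooling_only = deepsets_layer(X, zeros, Wv)
print("attention == DeepSets pooling term:", np.allclose(uniform_attn, pooling_only))

# (2) Quadratic and linear kernel attention agree.
out_quad = kernel_attention_quadratic(X, Wq, Wk, Wv)
out_lin = kernel_attention_linear(X, Wq, Wk, Wv)
print("quadratic == linear kernel attention:", np.allclose(out_quad, out_lin))
```

The first check covers only the first-order (set) case; the higher-order and sparse variants described in the abstract operate on order-$k$ tensors and hyperedges and are not reproduced here.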

NeurIPS 2021

Results from the Paper

Ranked #5 on Graph Regression on PCQM4M-LSC (Validation MAE metric)


MovieLens


OGB-LSC
