Neural Network Gaussian Processes by Increasing Depth

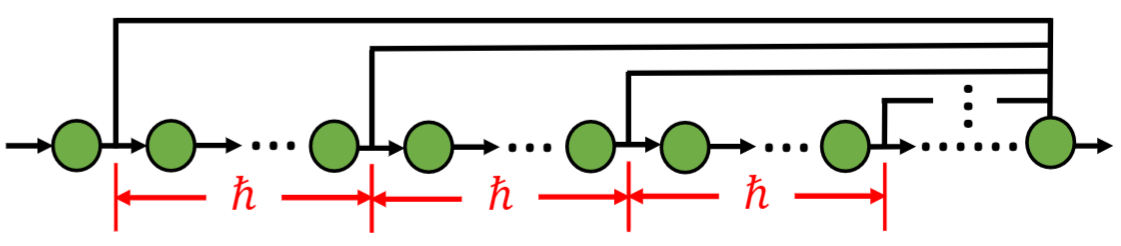

Recent years have witnessed growing interest in the correspondence between infinitely wide networks and Gaussian processes. Despite the effectiveness and elegance of the current neural network Gaussian process theory, to the best of our knowledge, all existing neural network Gaussian processes are essentially induced by increasing width. However, in the era of deep learning, what concerns us more about a neural network is its depth and how depth affects the network's behavior. Inspired by a width-depth symmetry consideration, we use a shortcut network to show that increasing the depth of a neural network can also give rise to a Gaussian process, which is a valuable addition to the existing theory and contributes to a more complete picture of deep learning. Beyond the proposed Gaussian process by depth, we theoretically characterize its uniform tightness property and the smallest eigenvalue of the Gaussian process kernel. These characterizations not only enhance our understanding of the proposed depth-induced Gaussian process but also pave the way for future applications. Lastly, we examine the performance of the proposed Gaussian process through regression experiments on two benchmark data sets.
