Bird's-Eye-View Panoptic Segmentation Using Monocular Frontal View Images

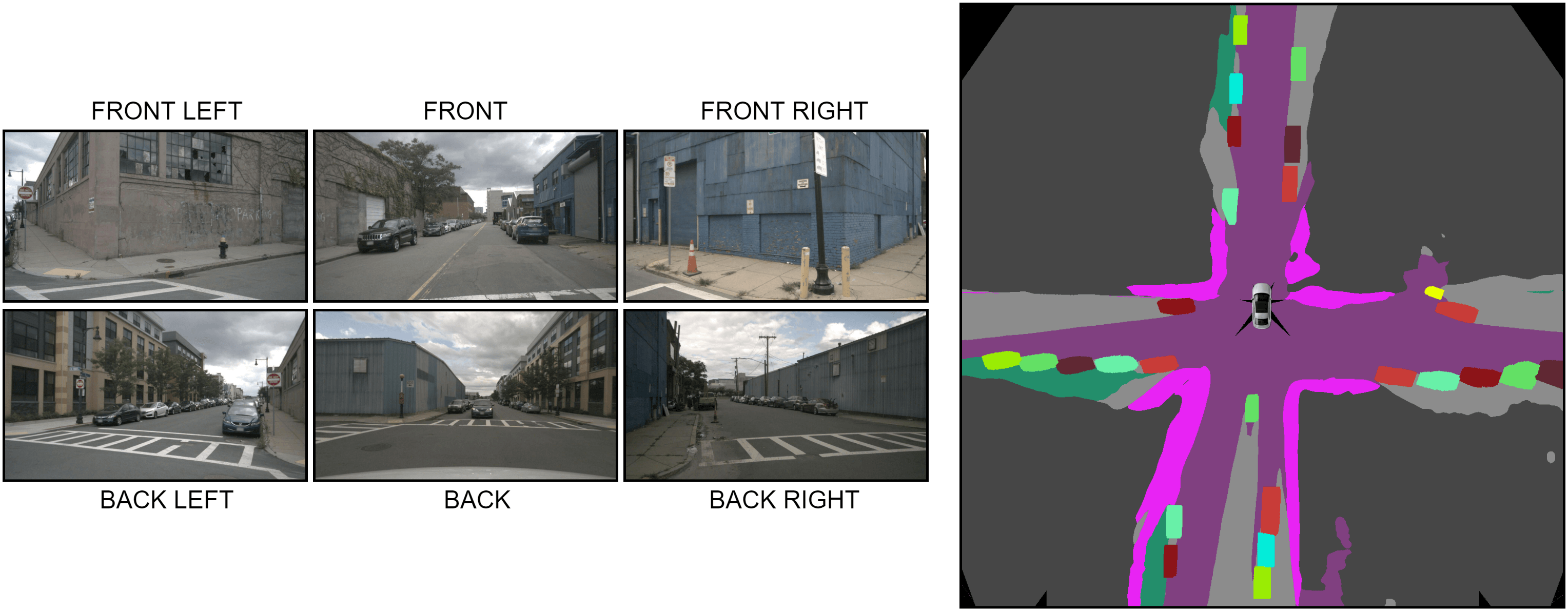

Bird's-Eye-View (BEV) maps have emerged as one of the most powerful representations for scene understanding due to their ability to provide rich spatial context while being easy to interpret and process. Such maps have found use in many real-world tasks that rely extensively on accurate scene segmentation as well as object instance identification in the BEV space. However, existing segmentation algorithms only predict the semantics in the BEV space, which limits their use in applications where the notion of object instances is also critical. In this work, we present the first BEV panoptic segmentation approach for directly predicting dense panoptic segmentation maps in the BEV, given a single monocular image in the frontal view (FV). Our architecture follows the top-down paradigm and incorporates a novel dense transformer module consisting of two distinct transformers that learn to independently map vertical and flat regions in the input image from the FV to the BEV. Additionally, we derive a mathematical formulation for the sensitivity of the FV-BEV transformation, which allows us to intelligently weight pixels in the BEV space to account for the varying descriptiveness across the FV image. Extensive evaluations on the KITTI-360 and nuScenes datasets demonstrate that our approach exceeds the state of the art in the panoptic quality (PQ) metric by 3.61 and 4.93 percentage points (pp), respectively.
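For readers unfamiliar with the PQ metric reported above, the following is a minimal sketch of how panoptic quality is commonly computed for a single class: the sum of IoUs over matched (true-positive) segments divided by |TP| + 0.5·|FP| + 0.5·|FN|. The function name, its arguments, and the sample values are illustrative assumptions and are not taken from the paper or its evaluation code.

```python
def panoptic_quality(candidate_ious, num_pred, num_gt, iou_threshold=0.5):
    """Illustrative PQ for one class (hypothetical helper, not the authors' code).

    candidate_ious: IoU of each candidate (prediction, ground truth) pair,
                    assuming each segment appears in at most one pair.
    num_pred:       total number of predicted segments of this class.
    num_gt:         total number of ground-truth segments of this class.
    """
    # A pair counts as a true positive only if its IoU exceeds the threshold.
    tp_ious = [iou for iou in candidate_ious if iou > iou_threshold]
    tp = len(tp_ious)
    fp = num_pred - tp  # predicted segments left unmatched
    fn = num_gt - tp    # ground-truth segments left unmatched
    denom = tp + 0.5 * fp + 0.5 * fn
    if denom == 0:
        return 0.0  # no segments at all; returning 0 is a convention chosen here
    return sum(tp_ious) / denom


# Made-up example: three candidate pairs, four predictions, three ground truths.
pq = panoptic_quality([0.8, 0.6, 0.4], num_pred=4, num_gt=3)
print(f"PQ = {pq:.3f}")
```

In this made-up example, two pairs pass the 0.5 threshold, leaving two false positives and one false negative, so the score is 1.4 / 3.5. Benchmark PQ values are typically averaged over classes and reported in percent, which is why improvements are stated in percentage points.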


nuScenes
