Search Results for author: Yao Zhu

Found 19 papers, 7 papers with code

Advancing Out-of-Distribution Detection through Data Purification and Dynamic Activation Function Design

no code implementations • 6 Mar 2024 • Yingrui Ji, Yao Zhu, Zhigang Li, Jiansheng Chen, Yunlong Kong, Jingbo Chen

Our work addresses this challenge by enhancing the detection and management of OOD samples in neural networks.

Enhancing Few-shot CLIP with Semantic-Aware Fine-Tuning

no code implementations • 8 Nov 2023 • Yao Zhu, Yuefeng Chen, Wei Wang, Xiaofeng Mao, Xiu Yan, Yue Wang, Zhigang Li, Wang Lu, Jindong Wang, Xiangyang Ji

Hence, we propose fine-tuning the parameters of the attention pooling layer during the training process to encourage the model to focus on task-specific semantics.

COCO-O: A Benchmark for Object Detectors under Natural Distribution Shifts

1 code implementation • ICCV 2023 • Xiaofeng Mao, Yuefeng Chen, Yao Zhu, Da Chen, Hang Su, Rong Zhang, Hui Xue

To give a more comprehensive robustness assessment, we introduce COCO-O(ut-of-distribution), a test dataset based on COCO with 6 types of natural distribution shifts.

Green Steganalyzer: A Green Learning Approach to Image Steganalysis

no code implementations • 6 Jun 2023 • Yao Zhu, Xinyu Wang, Hong-Shuo Chen, Ronald Salloum, C.-C. Jay Kuo

A novel learning solution to image steganalysis based on the green learning paradigm, called Green Steganalyzer (GS), is proposed in this work.

SR-OOD: Out-of-Distribution Detection via Sample Repairing

no code implementations • 26 May 2023 • Rui Sun, Andi Zhang, Haiming Zhang, Jinke Ren, Yao Zhu, Ruimao Zhang, Shuguang Cui, Zhen Li

Specifically, our framework consists of two components: a sample repairing module and a detection module.

Generative Adversarial Network

Out-of-Distribution Detection

+1

ImageNet-E: Benchmarking Neural Network Robustness via Attribute Editing

2 code implementations • CVPR 2023 • Xiaodan Li, Yuefeng Chen, Yao Zhu, Shuhui Wang, Rong Zhang, Hui Xue

We also evaluate some robust models including both adversarially trained models and other robust trained models and find that some models show worse robustness against attribute changes than vanilla models.

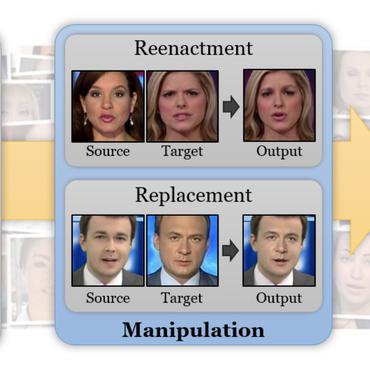

Information-containing Adversarial Perturbation for Combating Facial Manipulation Systems

no code implementations • 21 Mar 2023 • Yao Zhu, Yuefeng Chen, Xiaodan Li, Rong Zhang, Xiang Tian, Bolun Zheng, Yaowu Chen

We use an encoder to map a facial image and its identity message to a cross-model adversarial example which can disrupt multiple facial manipulation systems to achieve initiative protection.

Rethinking Out-of-Distribution Detection From a Human-Centric Perspective

no code implementations • 30 Nov 2022 • Yao Zhu, Yuefeng Chen, Xiaodan Li, Rong Zhang, Hui Xue, Xiang Tian, Rongxin Jiang, Bolun Zheng, Yaowu Chen

Additionally, our experiments demonstrate that model selection is non-trivial for OOD detection and should be considered as an integral of the proposed method, which differs from the claim in existing works that proposed methods are universal across different models.

Boosting Out-of-distribution Detection with Typical Features

no code implementations • 9 Oct 2022 • Yao Zhu, Yuefeng Chen, Chuanlong Xie, Xiaodan Li, Rong Zhang, Hui Xue, Xiang Tian, Bolun Zheng, Yaowu Chen

Out-of-distribution (OOD) detection is a critical task for ensuring the reliability and safety of deep neural networks in real-world scenarios.

Towards Understanding and Boosting Adversarial Transferability from a Distribution Perspective

2 code implementations • 9 Oct 2022 • Yao Zhu, Yuefeng Chen, Xiaodan Li, Kejiang Chen, Yuan He, Xiang Tian, Bolun Zheng, Yaowu Chen, Qingming Huang

We conduct comprehensive transferable attacks against multiple DNNs to demonstrate the effectiveness of the proposed method.

Enhance the Visual Representation via Discrete Adversarial Training

1 code implementation • 16 Sep 2022 • Xiaofeng Mao, Yuefeng Chen, Ranjie Duan, Yao Zhu, Gege Qi, Shaokai Ye, Xiaodan Li, Rong Zhang, Hui Xue

For borrowing the advantage from NLP-style AT, we propose Discrete Adversarial Training (DAT).

Ranked #1 on Domain Generalization on Stylized-ImageNet

A-PixelHop: A Green, Robust and Explainable Fake-Image Detector

no code implementations • 7 Nov 2021 • Yao Zhu, Xinyu Wang, Hong-Shuo Chen, Ronald Salloum, C.-C. Jay Kuo

A novel method for detecting CNN-generated images, called Attentive PixelHop (or A-PixelHop), is proposed in this work.

Rethinking Adversarial Transferability from a Data Distribution Perspective

no code implementations • ICLR 2022 • Yao Zhu, Jiacheng Sun, Zhenguo Li

Adversarial transferability enables attackers to generate adversarial examples from the source model to attack the target model, which has raised security concerns about the deployment of DNNs in practice.

Towards Understanding the Generative Capability of Adversarially Robust Classifiers

no code implementations • ICCV 2021 • Yao Zhu, Jiacheng Ma, Jiacheng Sun, Zewei Chen, Rongxin Jiang, Zhenguo Li

We find that adversarial training contributes to obtaining an energy function that is flat and has low energy around the real data, which is the key for generative capability.

SAD: Saliency Adversarial Defense without Adversarial Training

no code implementations • 1 Jan 2021 • Yao Zhu, Jiacheng Sun, Zewei Chen, Zhenguo Li

We justify the algorithm with a linear model that the added saliency maps pull data away from its closest decision boundary.

Relation-Aware Neighborhood Matching Model for Entity Alignment

1 code implementation • 15 Dec 2020 • Yao Zhu, Hongzhi Liu, Zhonghai Wu, Yingpeng Du

Besides comparing neighbor nodes when matching neighborhood, we also try to explore useful information from the connected relations.

Representation Learning with Ordered Relation Paths for Knowledge Graph Completion

1 code implementation • IJCNLP 2019 • Yao Zhu, Hongzhi Liu, Zhonghai Wu, Yang Song, Tao Zhang

Recently, a few methods take relation paths into consideration but pay less attention to the order of relations in paths which is important for reasoning.

Ranked #3 on Link Prediction on FB15k (MR metric)

An Interpretable Generative Model for Handwritten Digit Image Synthesis

no code implementations • 11 Nov 2018 • Yao Zhu, Saksham Suri, Pranav Kulkarni, Yueru Chen, Jiali Duan, C.-C. Jay Kuo

An interpretable generative model for handwritten digits synthesis is proposed in this work.

A Parallel Min-Cut Algorithm using Iteratively Reweighted Least Squares

1 code implementation • 13 Jan 2015 • Yao Zhu, David F. Gleich

We present a parallel algorithm for the undirected $s, t$-mincut problem with floating-point valued weights.

Distributed, Parallel, and Cluster Computing • Data Structures and Algorithms • Numerical Analysis