Search Results for author: Gautham Vasan

Found 6 papers, 4 papers with code

MaDi: Learning to Mask Distractions for Generalization in Visual Deep Reinforcement Learning

1 code implementation • 23 Dec 2023 • Bram Grooten, Tristan Tomilin, Gautham Vasan, Matthew E. Taylor, A. Rupam Mahmood, Meng Fang, Mykola Pechenizkiy, Decebal Constantin Mocanu

Our algorithm improves the agent's focus with useful masks, while its efficient Masker network only adds 0.2% more parameters to the original structure, in contrast to previous work.

Correcting discount-factor mismatch in on-policy policy gradient methods

no code implementations • 23 Jun 2023 • Fengdi Che, Gautham Vasan, A. Rupam Mahmood

The policy gradient theorem gives a convenient form of the policy gradient in terms of three factors: an action value, a gradient of the action likelihood, and a state distribution involving discounting called the discounted stationary distribution.

Real-Time Reinforcement Learning for Vision-Based Robotics Utilizing Local and Remote Computers

2 code implementations • 5 Oct 2022 • Yan Wang, Gautham Vasan, A. Rupam Mahmood

A common setup for a robotic agent is to have two different computers simultaneously: a resource-limited local computer tethered to the robot and a powerful remote computer connected wirelessly.

Autoregressive Policies for Continuous Control Deep Reinforcement Learning

1 code implementation • 27 Mar 2019 • Dmytro Korenkevych, A. Rupam Mahmood, Gautham Vasan, James Bergstra

We introduce a family of stationary autoregressive (AR) stochastic processes to facilitate exploration in continuous control domains.

Benchmarking Reinforcement Learning Algorithms on Real-World Robots

2 code implementations • 20 Sep 2018 • A. Rupam Mahmood, Dmytro Korenkevych, Gautham Vasan, William Ma, James Bergstra

The research community is now able to reproduce, analyze and build quickly on these results due to open source implementations of learning algorithms and simulated benchmark tasks.

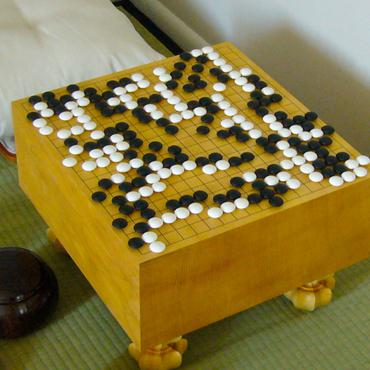

Neurohex: A Deep Q-learning Hex Agent

no code implementations • 24 Apr 2016 • Kenny Young, Ryan Hayward, Gautham Vasan

DeepMind's recent spectacular success in using deep convolutional neural nets and machine learning to build superhuman level agents (e.g., for Atari games via deep Q-learning and for the game of Go via reinforcement learning) raises many questions, including to what extent these methods will succeed in other domains.