Search Results for author: Chengli Tan

Found 5 papers, 2 papers with code

Stabilizing Sharpness-aware Minimization Through A Simple Renormalization Strategy

no code implementations • 14 Jan 2024 • Chengli Tan, Jiangshe Zhang, Junmin Liu, Yicheng Wang, Yunda Hao

Recently, sharpness-aware minimization (SAM) has attracted considerable attention because of its surprising effectiveness in improving generalization performance. However, training neural networks with SAM can be highly unstable, since the loss is not minimized along the exact gradient at the current point but along a surrogate gradient evaluated at a nearby point.
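To make the surrogate-gradient issue concrete, here is a minimal NumPy sketch of the standard SAM update on a toy quadratic loss. It is the plain SAM step, not the renormalization strategy proposed in the paper. The helper name `sam_step`, the quadratic loss, and the values of `lr` and `rho` are illustrative assumptions.

```python
import numpy as np

def sam_step(w, grad_fn, lr=0.1, rho=0.05):
    """One plain SAM update (hypothetical helper, not the paper's renormalized variant)."""
    g = grad_fn(w)                    # exact gradient at the current point
    norm = np.linalg.norm(g)
    if norm == 0.0:
        return w - lr * g             # zero gradient: no ascent direction, w is unchanged
    eps = rho * g / norm              # ascent step of radius rho to a nearby point
    g_sam = grad_fn(w + eps)          # surrogate gradient evaluated at w + eps
    return w - lr * g_sam             # descend along the surrogate, not the exact gradient

# Toy ill-conditioned quadratic L(w) = 0.5 * w^T A w, so grad L(w) = A w (assumed for illustration).
A = np.diag([1.0, 10.0])
grad = lambda v: A @ v

w = np.array([1.0, 1.0])
for _ in range(200):
    w = sam_step(w, grad, lr=0.05, rho=0.01)
print(w)
```

On this quadratic the iterate does not settle exactly at the minimum: because every step uses the gradient at `w + eps`, it keeps bouncing inside a small neighborhood whose size is governed by `rho`.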

Seismic Data Interpolation based on Denoising Diffusion Implicit Models with Resampling

no code implementations • 9 Jul 2023 • Xiaoli Wei, Chunxia Zhang, Hongtao Wang, Chengli Tan, Deng Xiong, Baisong Jiang, Jiangshe Zhang, Sang-Woon Kim

Model training is built on the denoising diffusion probabilistic model (DDPM), in which a U-Net equipped with multi-head self-attention is trained to match the noise at each step.
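The noise-matching objective described above can be sketched as follows, assuming a linear beta schedule and a placeholder predictor in place of the attention U-Net. The schedule values, the helper names (`make_schedule`, `q_sample`, `noise_matching_loss`), and the `zero_model` stand-in are all hypothetical. The sketch also omits the DDIM sampling and resampling steps named in the title.

```python
import numpy as np

def make_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    # Linear beta schedule, a common DDPM choice (assumed here, not taken from the paper).
    betas = np.linspace(beta_start, beta_end, T)
    alpha_bars = np.cumprod(1.0 - betas)   # alpha_bar_t = prod_{s<=t} (1 - beta_s)
    return betas, alpha_bars

def q_sample(x0, t, alpha_bars, noise):
    # Closed-form forward diffusion: x_t = sqrt(alpha_bar_t) x0 + sqrt(1 - alpha_bar_t) eps.
    ab = alpha_bars[t]
    return np.sqrt(ab) * x0 + np.sqrt(1.0 - ab) * noise

def noise_matching_loss(eps_model, x0, t, alpha_bars, rng):
    # The network is trained to predict the injected noise eps from (x_t, t).
    noise = rng.standard_normal(x0.shape)
    xt = q_sample(x0, t, alpha_bars, noise)
    return np.mean((eps_model(xt, t) - noise) ** 2)

rng = np.random.default_rng(0)
_, alpha_bars = make_schedule()
x0 = rng.standard_normal((8, 8))                 # stand-in for a patch of seismic traces
zero_model = lambda xt, t: np.zeros_like(xt)     # hypothetical placeholder for the U-Net
print(noise_matching_loss(zero_model, x0, 500, alpha_bars, rng))
```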

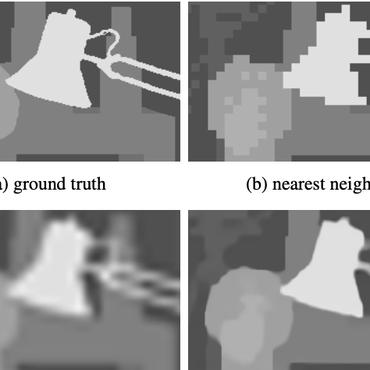

Spherical Space Feature Decomposition for Guided Depth Map Super-Resolution

no code implementations • ICCV 2023 • Zixiang Zhao, Jiangshe Zhang, Xiang Gu, Chengli Tan, Shuang Xu, Yulun Zhang, Radu Timofte, Luc van Gool

Then, the extracted features are mapped to the spherical space to separate private features and align shared features.
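One generic way to picture "mapping to the spherical space" is L2 normalization onto the unit hypersphere, with alignment and separation measured by cosine similarity. The following is a sketch under that assumption only. The loss definitions and function names are hypothetical illustrations and are not the paper's actual objectives.

```python
import numpy as np

def to_sphere(feats, eps=1e-8):
    # Project each feature vector (row) onto the unit hypersphere via L2 normalization.
    norms = np.linalg.norm(feats, axis=-1, keepdims=True)
    return feats / np.maximum(norms, eps)   # eps guard keeps all-zero rows at zero

def alignment_loss(shared_a, shared_b):
    # Hypothetical: shared features should align, so penalize 1 - mean cosine similarity.
    a, b = to_sphere(shared_a), to_sphere(shared_b)
    return 1.0 - np.mean(np.sum(a * b, axis=-1))

def separation_loss(private_a, private_b):
    # Hypothetical: private features should separate, so penalize squared cosine similarity
    # (zero when the paired vectors are orthogonal).
    a, b = to_sphere(private_a), to_sphere(private_b)
    return np.mean(np.sum(a * b, axis=-1) ** 2)

rng = np.random.default_rng(0)
f = rng.standard_normal((4, 16))            # stand-in for 4 feature vectors of width 16
print(alignment_loss(f, f), separation_loss(f, rng.standard_normal((4, 16))))
```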

Trajectory-dependent Generalization Bounds for Deep Neural Networks via Fractional Brownian Motion

1 code implementation • 9 Jun 2022 • Chengli Tan, Jiangshe Zhang, Junmin Liu

In this study, we argue that the hypothesis set explored by SGD is trajectory-dependent and thus may yield a tighter bound on its Rademacher complexity.
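The intuition that a trajectory-dependent hypothesis set can only shrink the complexity term is visible in the definition of empirical Rademacher complexity, $\hat{R}_S(H) = \mathbb{E}_\sigma[\sup_{h \in H} \frac{1}{m}\sum_i \sigma_i h(x_i)]$. The sketch below is a generic Monte Carlo estimate for a finite set of hypotheses given as a prediction matrix. It does not reproduce the paper's fractional-Brownian-motion bound. The random ±1 classifiers and the "visited" subset are hypothetical stand-ins.

```python
import numpy as np

def empirical_rademacher(preds, n_draws=2000, seed=0):
    """Monte Carlo estimate of the empirical Rademacher complexity of a finite
    hypothesis set, where preds[k, i] = h_k(x_i)."""
    rng = np.random.default_rng(seed)
    _, m = preds.shape
    sigma = rng.choice([-1.0, 1.0], size=(n_draws, m))   # Rademacher sign vectors
    correlations = sigma @ preds.T / m                    # (1/m) sum_i sigma_i h(x_i), per draw and h
    return correlations.max(axis=1).mean()                # average over draws of the sup over h

rng = np.random.default_rng(1)
full = np.sign(rng.standard_normal((200, 50)))   # 200 hypothetical +/-1 classifiers on 50 points
visited = full[:10]                              # hypothetical subset visited along a trajectory
print(empirical_rademacher(visited), empirical_rademacher(full))
```

With the same sign draws (same `seed`), the supremum over a subset can never exceed the supremum over the full set, so the subset's estimate is always the smaller one.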

Understanding Short-Range Memory Effects in Deep Neural Networks

1 code implementation • 5 May 2021 • Chengli Tan, Jiangshe Zhang, Junmin Liu

Instead, inspired by the short-range correlation emerging in the stochastic gradient noise (SGN) series, we propose that SGD can be viewed as a discretization of an SDE driven by fractional Brownian motion (FBM).
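The SDE view can be sketched with a simple Euler scheme driven by exact fractional Gaussian noise (the increments of FBM), generated here by a Cholesky factorization of their covariance. This is a generic illustration under stated assumptions: a scalar state, a quadratic loss with gradient `v`, a hand-picked noise scale `sigma`, and an arbitrary Hurst value. The helper names are hypothetical. With `hurst=0.5` the increments are independent and the scheme reduces to ordinary Brownian-driven Euler-Maruyama. Other values correlate successive increments.

```python
import numpy as np

def fgn_increments(n, hurst, dt, rng):
    # Exact fractional Gaussian noise via Cholesky of its Toeplitz covariance
    # gamma(k) = 0.5 * (|k+1|^{2H} - 2|k|^{2H} + |k-1|^{2H}).
    k = np.arange(n)
    gamma = 0.5 * (np.abs(k + 1) ** (2 * hurst) - 2 * np.abs(k) ** (2 * hurst)
                   + np.abs(k - 1) ** (2 * hurst))
    cov = gamma[np.abs(k[:, None] - k[None, :])]          # Toeplitz matrix gamma(|i - j|)
    L = np.linalg.cholesky(cov)
    return (dt ** hurst) * (L @ rng.standard_normal(n))   # self-similarity scaling by dt^H

def euler_fbm_sde(grad_fn, x0, n_steps, dt, sigma, hurst, seed=0):
    # Euler scheme for dX_t = -grad f(X_t) dt + sigma dB^H_t (scalar state for simplicity).
    rng = np.random.default_rng(seed)
    dB = fgn_increments(n_steps, hurst, dt, rng)
    x = np.empty(n_steps + 1)
    x[0] = x0
    for i in range(n_steps):
        x[i + 1] = x[i] - grad_fn(x[i]) * dt + sigma * dB[i]
    return x

# Quadratic loss f(v) = v^2 / 2, so grad f(v) = v (assumed for illustration).
path = euler_fbm_sde(lambda v: v, x0=1.0, n_steps=500, dt=0.01, sigma=0.1, hurst=0.3)
print(path[-1])
```

The Cholesky step costs O(n^3), which is fine for a few hundred steps. Longer paths would typically use a circulant-embedding (Davies-Harte) generator instead.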