Sound Classification

46 papers with code • 0 benchmarks • 2 datasets

Benchmarks

These leaderboards are used to track progress in Sound Classification

Most implemented papers

Urban Sound Tagging using Convolutional Neural Networks

The proposed model uses a log-scaled Mel-spectrogram as the input representation of the audio data.
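For readers unfamiliar with the representation, here is a minimal NumPy sketch of how a log-scaled Mel-spectrogram can be computed. It is not the paper's pipeline: the frame size, hop length, number of Mel bands, the HTK-style Mel formula, and all function names are assumptions chosen for illustration.

```python
import numpy as np


def hz_to_mel(hz):
    """Convert frequency in Hz to the mel scale (HTK-style formula, an assumption)."""
    return 2595.0 * np.log10(1.0 + np.asarray(hz, dtype=float) / 700.0)


def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (np.asarray(mel, dtype=float) / 2595.0) - 1.0)


def mel_filterbank(sr, n_fft, n_mels):
    """Triangular filters evenly spaced on the mel scale, shape (n_mels, n_fft // 2 + 1)."""
    fft_freqs = np.fft.rfftfreq(n_fft, d=1.0 / sr)
    hz_points = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_mels + 2))
    fb = np.zeros((n_mels, fft_freqs.size))
    for m in range(n_mels):
        left, center, right = hz_points[m], hz_points[m + 1], hz_points[m + 2]
        rising = (fft_freqs - left) / (center - left)
        falling = (right - fft_freqs) / (right - center)
        fb[m] = np.maximum(0.0, np.minimum(rising, falling))
    return fb


def log_mel_spectrogram(signal, sr, n_fft=512, hop=256, n_mels=40, eps=1e-10):
    """Return a (n_mels, n_frames) log-scaled mel spectrogram in dB."""
    signal = np.asarray(signal, dtype=float)
    n_frames = 1 + (signal.size - n_fft) // hop
    window = np.hanning(n_fft)
    # Slice the signal into overlapping, windowed frames.
    frames = np.stack(
        [signal[i * hop : i * hop + n_fft] * window for i in range(n_frames)]
    )
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2  # (n_frames, n_fft // 2 + 1)
    mel_power = mel_filterbank(sr, n_fft, n_mels) @ power.T  # (n_mels, n_frames)
    return 10.0 * np.log10(mel_power + eps)  # eps avoids log(0)


# Usage: one second of a 1 kHz tone sampled at 16 kHz.
sr = 16000
t = np.arange(sr) / sr
S = log_mel_spectrogram(np.sin(2 * np.pi * 1000.0 * t), sr)
```

The resulting 2-D array can be fed to a convolutional network like an image, with Mel bands on one axis and time frames on the other.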

Spectrogram-frame linear network and continuous frame sequence for bird sound classification

Motivated by the fact that bird sounds have varied frequency distributions and continuous time-varying properties, a novel method is proposed for bird sound classification based on continuous frame sequences and a spectrogram-frame linear network (SFLN).

LungBRN: A Smart Digital Stethoscope for Detecting Respiratory Disease Using bi-ResNet Deep Learning Algorithm

Improving access to health care services for the medically under-served population is vital to ensure that critical illness can be addressed immediately.

ESResNet: Environmental Sound Classification Based on Visual Domain Models

Environmental Sound Classification (ESC) is an active research area in the audio domain and has seen a lot of progress in the past years.

Deep Neural Network for Respiratory Sound Classification in Wearable Devices Enabled by Patient Specific Model Tuning

We also implement a patient-specific model tuning strategy that first screens respiratory patients and then builds patient-specific classification models using limited patient data for reliable anomaly detection.

Coswara -- A Database of Breathing, Cough, and Voice Sounds for COVID-19 Diagnosis

We believe that insights from the analysis of Coswara can be effective in enabling sound-based technology solutions for point-of-care diagnosis of respiratory infection, and in the near future this can help to diagnose COVID-19.

Urban Sound Classification : striving towards a fair comparison

Sometimes authors copy-paste the results from the original papers, which does not help reproducibility.

Comparison of semi-supervised deep learning algorithms for audio classification

In all but one case, MixMatch (MM), ReMixMatch (RMM), and FixMatch (FM) significantly outperformed Mean Teacher (MT) and Deep Co-Training (DCT), with MM and RMM being the best methods in most experiments.

SoundCLR: Contrastive Learning of Representations For Improved Environmental Sound Classification

Our extensive benchmark experiments show that our hybrid deep network models trained with combined contrastive and cross-entropy loss achieved state-of-the-art performance on three benchmark datasets, ESC-10, ESC-50, and US8K, with validation accuracies of 99.75%, 93.4%, and 86.49%, respectively.
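As a rough illustration of what a combined contrastive and cross-entropy objective can look like, the NumPy sketch below mixes a supervised contrastive term with a standard cross-entropy term. The excerpt does not give the paper's exact formulation, so the weighting parameter `alpha`, the `temperature` value, the particular supervised contrastive form, and all function names are assumptions.

```python
import numpy as np


def cross_entropy(logits, labels):
    """Mean softmax cross-entropy for integer class labels."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()


def supervised_contrastive(embeddings, labels, temperature=0.1):
    """Supervised contrastive loss: same-label samples in the batch are positives."""
    z = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    sim = z @ z.T / temperature
    not_self = ~np.eye(len(labels), dtype=bool)
    # Log-softmax of each anchor's similarities over all other samples.
    sim = sim - sim.max(axis=1, keepdims=True)
    denom = (np.exp(sim) * not_self).sum(axis=1, keepdims=True)
    log_prob = sim - np.log(denom)
    positives = (labels[:, None] == labels[None, :]) & not_self
    n_pos = positives.sum(axis=1)
    has_pos = n_pos > 0
    if not has_pos.any():
        return 0.0  # no anchor has a positive partner in this batch
    # Average the negative log-probability over each anchor's positives.
    per_anchor = -(log_prob * positives).sum(axis=1)[has_pos] / n_pos[has_pos]
    return per_anchor.mean()


def hybrid_loss(embeddings, logits, labels, alpha=0.5, temperature=0.1):
    """Weighted sum of the two terms; alpha is a hypothetical mixing weight."""
    contrastive = supervised_contrastive(embeddings, labels, temperature)
    return alpha * contrastive + (1.0 - alpha) * cross_entropy(logits, labels)


# Usage: four samples, two classes, embeddings clustered by class.
emb = np.array([[1.0, 0.0], [1.0, 0.01], [0.0, 1.0], [0.01, 1.0]])
logits = np.array([[2.0, 0.0], [2.0, 0.0], [0.0, 2.0], [0.0, 2.0]])
labels = np.array([0, 0, 1, 1])
loss = hybrid_loss(emb, logits, labels)
```

The contrastive term shapes the embedding space so that clips of the same class lie close together, while the cross-entropy term trains the classifier head directly.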

Environmental Sound Classification on the Edge: A Pipeline for Deep Acoustic Networks on Extremely Resource-Constrained Devices

Significant efforts are being invested to bring state-of-the-art classification and recognition to edge devices with extreme resource constraints (memory, speed, and lack of GPU support).

InfantMarmosetsVox

InfantMarmosetsVox

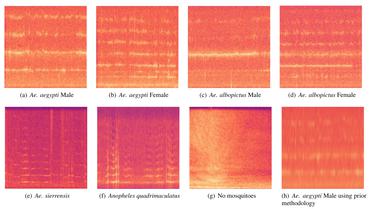

Audio de mosquitos Aedes Aegypti
