Scene Graph Generation

110 papers with code • 5 benchmarks • 7 datasets

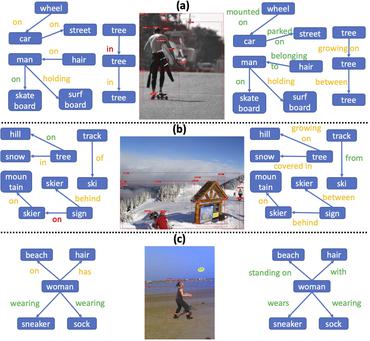

A scene graph is a structured representation of an image in which nodes correspond to object bounding boxes with their object categories, and edges correspond to pairwise relationships between those objects. The task of Scene Graph Generation is to generate a visually grounded scene graph that most accurately correlates with an image.
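The definition above can be sketched as a small data structure. This is a minimal illustration, not the representation used by any particular paper or library listed on this page. It assumes boxes are stored as `(x_min, y_min, x_max, y_max)` in pixels, and the class and method names (`ObjectNode`, `RelationEdge`, `SceneGraph`, `triplets`) are hypothetical. Each edge is directed from a subject node to an object node and labelled with a predicate, so the graph can be read back as `(subject, predicate, object)` triplets.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical convention: (x_min, y_min, x_max, y_max) in pixel coordinates.
BBox = Tuple[float, float, float, float]


@dataclass
class ObjectNode:
    """A node: one object bounding box together with its object category."""
    category: str
    bbox: BBox


@dataclass
class RelationEdge:
    """A directed edge from a subject node to an object node, labelled with a predicate."""
    subject: int  # index into SceneGraph.nodes
    predicate: str
    obj: int      # index into SceneGraph.nodes


@dataclass
class SceneGraph:
    nodes: List[ObjectNode] = field(default_factory=list)
    edges: List[RelationEdge] = field(default_factory=list)

    def add_object(self, category: str, bbox: BBox) -> int:
        """Add a node and return its index."""
        self.nodes.append(ObjectNode(category, bbox))
        return len(self.nodes) - 1

    def add_relation(self, subject: int, predicate: str, obj: int) -> None:
        """Add a pairwise relationship between two existing nodes."""
        for idx in (subject, obj):
            if not 0 <= idx < len(self.nodes):
                raise IndexError(f"no node with index {idx}")
        self.edges.append(RelationEdge(subject, predicate, obj))

    def triplets(self) -> List[Tuple[str, str, str]]:
        """Read every edge back as a (subject, predicate, object) category triplet."""
        return [
            (self.nodes[e.subject].category, e.predicate, self.nodes[e.obj].category)
            for e in self.edges
        ]


if __name__ == "__main__":
    g = SceneGraph()
    man = g.add_object("man", (40, 20, 120, 300))
    horse = g.add_object("horse", (10, 150, 260, 380))
    g.add_relation(man, "riding", horse)
    print(g.triplets())  # [('man', 'riding', 'horse')]
```

Edges refer to nodes by index rather than by category, so two objects of the same category (for example, two people) remain distinct nodes in the graph.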

Most implemented papers

RLIPv2: Fast Scaling of Relational Language-Image Pre-training

In this paper, we propose RLIPv2, a fast-converging model that enables the scaling of relational pre-training to large-scale pseudo-labelled scene graph data.

Panoptic Video Scene Graph Generation

Panoptic video scene graph generation (PVSG) relates to the existing video scene graph generation (VidSGG) problem, which focuses on temporal interactions between humans and objects grounded with bounding boxes in videos.

Visual Graphs from Motion (VGfM): Scene understanding with object geometry reasoning

Recent approaches to visual scene understanding attempt to build a scene graph: a computational representation of objects and their pairwise relationships.

Relation Transformer Network

In this work, we propose a novel transformer formulation for scene graph generation and relation prediction.

Learning Visual Commonsense for Robust Scene Graph Generation

Scene graph generation models understand the scene through object and predicate recognition, but are prone to mistakes due to the challenges of perception in the wild.

Learning and Reasoning with the Graph Structure Representation in Robotic Surgery

Learning to infer graph representations and to perform spatial reasoning in a complex surgical environment can play a vital role in surgical scene understanding in robotic surgery.

SceneGraphFusion: Incremental 3D Scene Graph Prediction from RGB-D Sequences

Scene graphs are a compact and explicit representation successfully used in a variety of 2D scene understanding tasks.

Fine-Grained Scene Graph Generation with Data Transfer

Scene graph generation (SGG) is designed to extract (subject, predicate, object) triplets from images.

Scene Graph Generation from Objects, Phrases and Region Captions

Object detection, scene graph generation and region captioning, which are three scene understanding tasks at different semantic levels, are tied together: scene graphs are generated on top of objects detected in an image with their pairwise relationship predicted, while region captioning gives a language description of the objects, their attributes, relations, and other context information.
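The two-stage idea described above (detect objects first, then predict a relationship for each pair of detected objects) can be sketched as follows. This is a minimal sketch under stated assumptions, not the method of the paper above: detections are assumed to arrive as `(category, box)` tuples from some object detector, and `toy_predicate_scores` is a hypothetical geometric rule standing in for a learned predicate classifier. The function and parameter names (`predict_relations`, `threshold`) are also illustrative.

```python
from itertools import permutations
from typing import Callable, Dict, List, Tuple

BBox = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max), assumed convention
Detection = Tuple[str, BBox]               # (category, bounding box) from an object detector
ScoreFn = Callable[[BBox, BBox], Dict[str, float]]


def toy_predicate_scores(subj: BBox, obj: BBox) -> Dict[str, float]:
    """Hypothetical stand-in for a learned relation classifier.

    Scores four spatial predicates from the offset between box centres.
    Image y grows downward, so a smaller y means "higher" in the image.
    """
    dx = (obj[0] + obj[2]) / 2 - (subj[0] + subj[2]) / 2  # > 0: subject is left of object
    dy = (obj[1] + obj[3]) / 2 - (subj[1] + subj[3]) / 2  # > 0: subject is above object
    total = abs(dx) + abs(dy)
    if total == 0:
        return {}  # identical centres: no spatial predicate applies
    return {
        "above": dy / total,
        "below": -dy / total,
        "left of": dx / total,
        "right of": -dx / total,
    }


def predict_relations(
    detections: List[Detection],
    score_fn: ScoreFn,
    threshold: float = 0.5,
) -> List[Tuple[int, str, int]]:
    """Score every ordered pair of detections and keep confident predicates.

    Returns (subject_index, predicate, object_index) triplets.
    """
    triplets = []
    # Relationships are directed, so (i, j) and (j, i) are scored separately.
    for i, j in permutations(range(len(detections)), 2):
        scores = score_fn(detections[i][1], detections[j][1])
        if not scores:
            continue
        predicate, score = max(scores.items(), key=lambda kv: kv[1])
        if score >= threshold:
            triplets.append((i, predicate, j))
    return triplets


if __name__ == "__main__":
    dets = [("lamp", (100, 0, 140, 40)), ("table", (80, 100, 220, 160))]
    rels = predict_relations(dets, toy_predicate_scores)
    print([(dets[s][0], p, dets[o][0]) for s, p, o in rels])
    # [('lamp', 'above', 'table'), ('table', 'below', 'lamp')]
```

Because every ordered pair is scored, the number of classifier calls grows quadratically with the number of detected objects (n·(n−1) pairs for n objects).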

Mapping Images to Scene Graphs with Permutation-Invariant Structured Prediction

Machine understanding of complex images is a key goal of artificial intelligence.

Datasets

MS COCO

Visual Genome

VRD

3DSSG

3RScan

PSG Dataset

4D-OR