RGB Salient Object Detection

97 papers with code • 13 benchmarks • 17 datasets

RGB salient object detection is a task based on a visual attention mechanism, in which algorithms aim to identify the objects or regions in a scene or RGB image that attract more attention than their surroundings.

( Image credit: Attentive Feedback Network for Boundary-Aware Salient Object Detection )
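As a rough illustration of the task definition above, the following toy sketch scores each pixel by how far its colour lies from the image's mean colour, then thresholds the result into a binary mask. It is a simple global-contrast heuristic, not the method of any paper listed on this page (those are learned deep networks); the function names `global_contrast_saliency` and `binarize`, the 0.5 threshold, and the sample image are assumptions made for the example.

```python
def global_contrast_saliency(image):
    """Toy saliency: distance of each pixel's colour from the image's mean colour.

    `image` is a list of rows, each row a list of (r, g, b) tuples in 0..255.
    Returns a map of the same shape with values scaled to [0, 1].
    """
    pixels = [p for row in image for p in row]
    n = len(pixels)
    mean = tuple(sum(p[c] for p in pixels) / n for c in range(3))

    # Euclidean distance from the mean colour: pixels unlike the rest score high.
    raw = [[sum((p[c] - mean[c]) ** 2 for c in range(3)) ** 0.5 for p in row]
           for row in image]

    peak = max(max(row) for row in raw)
    if peak == 0:  # perfectly uniform image: nothing stands out
        return [[0.0 for _ in row] for row in raw]
    return [[v / peak for v in row] for row in raw]


def binarize(saliency, threshold=0.5):
    """Turn a saliency map into a binary mask (1 = salient). Threshold is an assumption."""
    return [[1 if v >= threshold else 0 for v in row] for row in saliency]


# Hypothetical input: a 4x4 grey background with a 2x2 red patch in the middle.
grey, red = (120, 120, 120), (220, 30, 30)
image = [[grey] * 4 for _ in range(4)]
for y in (1, 2):
    for x in (1, 2):
        image[y][x] = red

mask = binarize(global_contrast_saliency(image))
for row in mask:
    print(row)
```

On this sample image the red patch is marked salient and the grey background is not. A heuristic like this breaks down as soon as the background is cluttered or the object shares colours with it, which is the kind of case the learned models on this page are meant to handle.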

Libraries

Use these libraries to find RGB Salient Object Detection models and implementations.

Latest papers with no code

C$^{4}$Net: Contextual Compression and Complementary Combination Network for Salient Object Detection

Deep learning solutions of the salient object detection problem have achieved great results in recent years.

Saliency Detection via Global Context Enhanced Feature Fusion and Edge Weighted Loss

Indiscriminately integrating the encoder feature, which contains spatial information for multiple objects, and the decoder feature, which contains global information of the salient object, is likely to convey unnecessary details of non-salient objects to the decoder, hindering saliency detection.

DyStaB: Unsupervised Object Segmentation via Dynamic-Static Bootstrapping

Then, it uses the segments to learn object models that can be used for detection in a static image.

Rethinking of the Image Salient Object Detection: Object-level Semantic Saliency Re-ranking First, Pixel-wise Saliency Refinement Latter

In sharp contrast to the state-of-the-art (SOTA) methods that focus on learning pixel-wise saliency in "single image" using perceptual clues mainly, our method has investigated the "object-level semantic ranks between multiple images", of which the methodology is more consistent with the real human attention mechanism.

A Deeper Look at Salient Object Detection: Bi-stream Network with a Small Training Dataset

Compared with the conventional hand-crafted approaches, the deep learning based methods have achieved tremendous performance improvements by training exquisitely crafted fancy networks over large-scale training sets.

Learning Discriminative Feature with CRF for Unsupervised Video Object Segmentation

In this paper, we introduce a novel network, called discriminative feature network (DFNet), to address the unsupervised video object segmentation task.

Exploring Image Enhancement for Salient Object Detection in Low Light Images

Low light images captured in a non-uniform illumination environment usually are degraded with the scene depth and the corresponding environment lights.

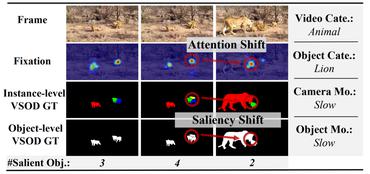

TENet: Triple Excitation Network for Video Salient Object Detection

In this paper, we propose a simple yet effective approach, named Triple Excitation Network, to reinforce the training of video salient object detection (VSOD) from three aspects, spatial, temporal, and online excitations.

Multi-level Cross-modal Interaction Network for RGB-D Salient Object Detection

Our MCI-Net includes two key components: 1) a cross-modal feature learning network, which is used to learn the high-level features for the RGB images and depth cues, effectively enabling the correlations between the two sources to be exploited; and 2) a multi-level interactive integration network, which integrates multi-level cross-modal features to boost the SOD performance.

Salient Object Detection Combining a Self-attention Module and a Feature Pyramid Network

Salient object detection has achieved great improvement by using the Fully Convolution Network (FCN).

PASCAL-S

DUTS

HKU-IS

DUT-OMRON

ISTD

SBU

ECSSD

SOC

VT5000

HRSOD