RGB Salient Object Detection

97 papers with code • 13 benchmarks • 17 datasets

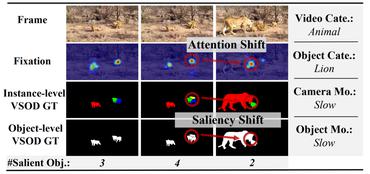

RGB salient object detection is a task based on a visual attention mechanism, in which algorithms aim to identify the objects or regions in a scene or RGB image that attract more attention than their surroundings.

(Image credit: Attentive Feedback Network for Boundary-Aware Salient Object Detection)

Libraries

Use these libraries to find RGB Salient Object Detection models and implementations

Most implemented papers

Res2Net: A New Multi-scale Backbone Architecture

We evaluate the Res2Net block on all these models and demonstrate consistent performance gains over baseline models on widely-used datasets, e.g., CIFAR-100 and ImageNet.

U$^2$-Net: Going Deeper with Nested U-Structure for Salient Object Detection

In this paper, we design a simple yet powerful deep network architecture, U$^2$-Net, for salient object detection (SOD).

RGB-D Salient Object Detection: A Survey

Further, considering that the light field can also provide depth maps, we review SOD models and popular benchmark datasets from this domain as well.

EGNet: Edge Guidance Network for Salient Object Detection

In the second step, we integrate the local edge information and global location information to obtain the salient edge features.

A Simple Pooling-Based Design for Real-Time Salient Object Detection

We further design a feature aggregation module (FAM) to make the coarse-level semantic information well fused with the fine-level features from the top-down pathway.

Deeply supervised salient object detection with short connections

Recent progress on saliency detection is substantial, benefiting mostly from the explosive development of Convolutional Neural Networks (CNNs).

F3Net: Fusion, Feedback and Focus for Salient Object Detection

Furthermore, different from binary cross entropy, the proposed PPA loss doesn't treat pixels equally, which can synthesize the local structure information of a pixel to guide the network to focus more on local details.
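The idea of not treating pixels equally can be illustrated with a weighted binary cross entropy in which a pixel's weight grows with the difference between its label and the average label of its neighbourhood, so pixels near object boundaries count more. The following is a minimal pure-Python sketch under stated assumptions: the function names `local_mean`, `pixel_weights`, and `weighted_bce`, the window radius `r`, and the scale factor `gamma` are illustrative choices and are not taken from the F3Net paper or its code.

```python
import math

def local_mean(mask, r):
    """Mean label in a (2r+1)x(2r+1) window, clipped at the image borders."""
    h, w = len(mask), len(mask[0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            vals = [mask[y][x]
                    for y in range(max(0, i - r), min(h, i + r + 1))
                    for x in range(max(0, j - r), min(w, j + r + 1))]
            out[i][j] = sum(vals) / len(vals)
    return out

def pixel_weights(mask, r=1, gamma=5.0):
    """Weight 1 + gamma * |local mean - label| (assumed form): larger near boundaries."""
    avg = local_mean(mask, r)
    return [[1.0 + gamma * abs(avg[i][j] - mask[i][j])
             for j in range(len(mask[0]))]
            for i in range(len(mask))]

def weighted_bce(pred, mask, r=1, gamma=5.0, eps=1e-7):
    """Weighted average of per-pixel binary cross entropy; pred holds probabilities."""
    wts = pixel_weights(mask, r, gamma)
    num = den = 0.0
    for i in range(len(mask)):
        for j in range(len(mask[0])):
            p = min(max(pred[i][j], eps), 1.0 - eps)  # clamp to avoid log(0)
            y = mask[i][j]
            bce = -(y * math.log(p) + (1 - y) * math.log(1 - p))
            num += wts[i][j] * bce
            den += wts[i][j]
    return num / den

# Toy 4x4 mask: left half background, right half salient.
mask = [[0, 0, 1, 1] for _ in range(4)]
weights = pixel_weights(mask)
```

In this toy mask, pixels in the columns next to the 0/1 boundary receive weights above 1, while pixels whose whole neighbourhood shares their label keep a weight of exactly 1. The sketch covers only a weighted cross-entropy term and should not be read as the complete loss proposed in the paper.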

Uncertainty Inspired RGB-D Saliency Detection

Our framework includes two main models: 1) a generator model, which maps the input image and latent variable to stochastic saliency prediction, and 2) an inference model, which gradually updates the latent variable by sampling it from the true or approximate posterior distribution.

P2T: Pyramid Pooling Transformer for Scene Understanding

A popular solution to this problem is to use a single pooling operation to reduce the sequence length.
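As a rough illustration of the single-pooling approach mentioned above, the sketch below average-pools a token sequence in non-overlapping windows, which shortens the sequence before any further processing. The function name `pool_tokens` and the window size are hypothetical, and this is not the P2T implementation.

```python
def pool_tokens(tokens, window):
    """Average-pool a list of equal-length feature vectors in non-overlapping windows."""
    if window < 1:
        raise ValueError("window must be >= 1")
    pooled = []
    for start in range(0, len(tokens), window):
        group = tokens[start:start + window]  # the last group may be shorter
        dim = len(group[0])
        pooled.append([sum(vec[d] for vec in group) / len(group)
                       for d in range(dim)])
    return pooled

# Eight 2-dimensional tokens reduced to two pooled tokens.
tokens = [[float(i), float(2 * i)] for i in range(8)]
short = pool_tokens(tokens, window=4)
print(len(tokens), "->", len(short))
```

With a window of 4, the sequence length drops by a factor of four, at the cost of representing each window by a single averaged vector.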

Reverse Attention for Salient Object Detection

Benefiting from the rapid development of deep learning techniques, salient object detection has achieved remarkable progress recently.

Datasets

PASCAL-S

DUTS

HKU-IS

DUT-OMRON

ISTD

SBU

ECSSD

SOC

VT5000

HRSOD