Multimodal Unsupervised Image-To-Image Translation

14 papers with code • 6 benchmarks • 4 datasets

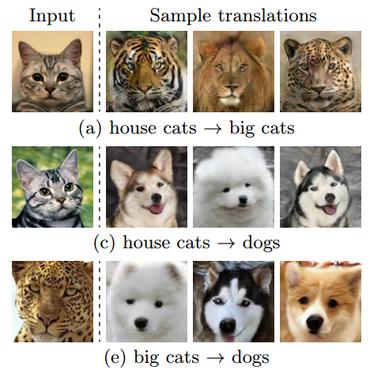

Multimodal unsupervised image-to-image translation is the task of producing multiple distinct translations in a target domain from a single image in a source domain, without paired training examples.

(Image credit: MUNIT: Multimodal UNsupervised Image-to-image Translation)

Latest papers

Multimodal Unsupervised Image-to-Image Translation

To translate an image to another domain, we recombine its content code with a random style code sampled from the style space of the target domain.
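The recombination step described above can be illustrated with a toy sketch. This is not the authors' implementation: the encoder and decoder here are hypothetical stand-ins operating on flat lists of pixel values, the standard Gaussian prior over style codes is an assumption made for this sketch, and `STYLE_DIM` is an arbitrary choice. The point is only the data flow: one content code, many sampled style codes, many outputs.

```python
import random

STYLE_DIM = 4  # hypothetical size of the style code (assumption)


def encode_content(image):
    """Toy content encoder: keep the mean-centred pixel values as 'structure'."""
    mean = sum(image) / len(image)
    return [p - mean for p in image]


def sample_style(rng, dim=STYLE_DIM):
    """Sample a random style code; a standard Gaussian prior is assumed here."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def decode(content, style):
    """Toy target-domain decoder: the style code sets a global scale and shift
    that are applied to the content code (only the first two entries are used)."""
    scale = 1.0 + 0.1 * style[0]
    shift = style[1]
    return [scale * c + shift for c in content]


def translate(image, rng, n_outputs=3):
    """Encode the content once, then decode it with several random style codes."""
    content = encode_content(image)
    return [decode(content, sample_style(rng)) for _ in range(n_outputs)]


rng = random.Random(0)
source_image = [0.1, 0.5, 0.9, 0.3]  # made-up pixel values for illustration
translations = translate(source_image, rng)
```

Each entry of `translations` shares the same content code but differs in the sampled style, which is what makes the translation multimodal. In a real model the encoders and decoder would be learned networks rather than these fixed functions.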

In2I : Unsupervised Multi-Image-to-Image Translation Using Generative Adversarial Networks

In unsupervised image-to-image translation, the goal is to learn the mapping between an input image and an output image using a set of unpaired training images.

Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks

Image-to-image translation is a class of vision and graphics problems where the goal is to learn the mapping between an input image and an output image using a training set of aligned image pairs.

Unsupervised Image-to-Image Translation Networks

Unsupervised image-to-image translation aims at learning a joint distribution of images in different domains by using images from the marginal distributions in individual domains.
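The constraint in that sentence can be written out explicitly. Using $x_1$ and $x_2$ for images from the two domains (notation assumed here, not taken from the listing), any learned joint distribution must reproduce both observed marginals:

```latex
p_{X_1}(x_1) = \int p_{X_1,X_2}(x_1, x_2)\,\mathrm{d}x_2,
\qquad
p_{X_2}(x_2) = \int p_{X_1,X_2}(x_1, x_2)\,\mathrm{d}x_1
```

Many different joint distributions satisfy these two equations, so the marginals alone do not determine the joint; a method has to add further assumptions to pick one.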

Datasets

CelebA-HQ

AFHQ

CATS

FFHQ-Aging