Multimodal Unsupervised Image-To-Image Translation

14 papers with code • 6 benchmarks • 4 datasets

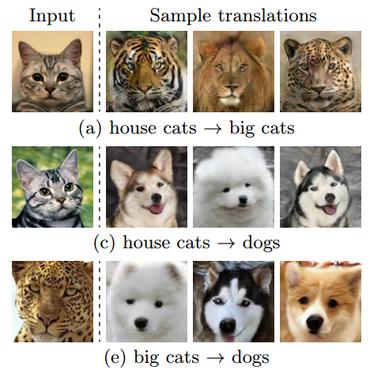

Multimodal unsupervised image-to-image translation is the task of producing multiple translations to one domain from a single image in another domain.

( Image credit: MUNIT: Multimodal UNsupervised Image-to-image Translation )

Latest papers

Wavelet-based Unsupervised Label-to-Image Translation

Semantic Image Synthesis (SIS) is a subclass of image-to-image translation where a semantic layout is used to generate a photorealistic image.

A Style-aware Discriminator for Controllable Image Translation

Current image-to-image translation methods do not control the output domain beyond the classes used during training, nor do they interpolate well between different domains, which leads to implausible results.

Image-to-image Translation via Hierarchical Style Disentanglement

Recently, image-to-image translation has made significant progress in achieving both multi-label (i.e., translation conditioned on different labels) and multi-style (i.e., generation with diverse styles) tasks.

Breaking the Cycle - Colleagues Are All You Need

Since the proposed model does not need to satisfy the cycle constraint, no irrelevant traces of the input are left on the generated image.

Lifespan Age Transformation Synthesis

Most existing aging methods are limited to changing the texture, overlooking transformations in head shape that occur during the human aging and growth process.

High-Resolution Daytime Translation Without Domain Labels

We present the high-resolution daytime translation (HiDT) model for this task.

StarGAN v2: Diverse Image Synthesis for Multiple Domains

A good image-to-image translation model should learn a mapping between different visual domains while satisfying the following properties: 1) diversity of generated images and 2) scalability over multiple domains.
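
The toy sketch below illustrates one way StarGAN v2 targets both properties. It mimics a mapping network: a shared trunk followed by one output head per domain, which turns a random latent code z and a target domain index y into a style code. Several parts are hypothetical: the class name `MappingNetwork`, the layer sizes, the single hidden layer and the untrained random weights. The real model is a deeper, trained network.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def linear(w: Matrix, x: Vector) -> Vector:
    # Dense layer without bias: one dot product per output unit.
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def rand_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    # Untrained random weights, for illustration only.
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


class MappingNetwork:
    """Hypothetical toy mapping network: shared trunk plus one head per domain."""

    def __init__(self, latent_dim: int, hidden_dim: int, style_dim: int,
                 num_domains: int, seed: int = 0):
        rng = random.Random(seed)
        self.shared = rand_matrix(hidden_dim, latent_dim, rng)
        # One output branch per domain; adding a domain adds one head only.
        self.heads = [rand_matrix(style_dim, hidden_dim, rng)
                      for _ in range(num_domains)]

    def __call__(self, z: Vector, y: int) -> Vector:
        h = relu(linear(self.shared, z))  # domain-agnostic features of z
        return linear(self.heads[y], h)   # style code for target domain y
```

If you sample a new z for the same y, you get a different style code, which provides diversity. Supporting another domain only adds one head rather than a separate generator, which provides scalability.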

Mode Seeking Generative Adversarial Networks for Diverse Image Synthesis

In this work, we propose a simple yet effective regularization term to address the mode collapse issue for cGANs.
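
Below is a minimal sketch of a mode-seeking term. It assumes flattened outputs and uses the mean absolute difference as both the image distance d_I and the latent distance d_z. The term rewards the generator when two latent codes z1 and z2, under the same condition c, produce images that differ in proportion to how much the codes differ. Returning the reciprocal of that ratio, so that it can be minimized alongside the usual cGAN loss, is an assumption here. The function names and `eps` are also hypothetical.

```python
from typing import Sequence


def mean_abs_diff(a: Sequence[float], b: Sequence[float]) -> float:
    # Mean absolute (L1) distance between two equally sized vectors.
    assert len(a) == len(b) and len(a) > 0
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def mode_seeking_loss(img1: Sequence[float], img2: Sequence[float],
                      z1: Sequence[float], z2: Sequence[float],
                      eps: float = 1e-5) -> float:
    """Term to MINIMIZE; it grows when distinct codes collapse to similar images.

    ratio = d_I(G(c, z1), G(c, z2)) / d_z(z1, z2)
    """
    ratio = mean_abs_diff(img1, img2) / mean_abs_diff(z1, z2)
    # eps guards against division by zero when the two outputs are identical.
    return 1.0 / (ratio + eps)
```

In training, img1 and img2 would be G(c, z1) and G(c, z2). The term would be added to the original cGAN objective with a weighting factor, so outputs that ignore z are penalized most heavily.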

Diverse Image-to-Image Translation via Disentangled Representations

Our model takes the encoded content features extracted from a given input and the attribute vectors sampled from the attribute space to produce diverse outputs at test time.
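
The test-time sampling described above can be sketched as follows, with hypothetical stand-ins throughout. `encode_content` is 2x average pooling rather than a learned encoder. Attribute vectors are assumed to come from a standard Gaussian prior. `generate` combines content and attribute with an ad hoc scale-and-shift rule instead of a trained generator. The sketch only illustrates the control flow: the content features are computed once and reused, while each freshly sampled attribute vector yields a different output.

```python
import random
from typing import List


def encode_content(image: List[float]) -> List[float]:
    # Hypothetical content encoder: 2x average pooling over a flat "image".
    return [(image[i] + image[i + 1]) / 2 for i in range(0, len(image) - 1, 2)]


def sample_attribute(dim: int, rng: random.Random) -> List[float]:
    # Assumed prior: attribute vectors drawn from a standard Gaussian.
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]


def generate(content: List[float], attribute: List[float]) -> List[float]:
    # Hypothetical generator: scale and shift the content features with
    # values derived from the attribute vector.
    scale = 1.0 + 0.1 * attribute[0]
    shift = 0.1 * attribute[1]
    return [scale * c + shift for c in content]


def diverse_translations(image: List[float], num_samples: int,
                         attr_dim: int = 8, seed: int = 0) -> List[List[float]]:
    rng = random.Random(seed)
    content = encode_content(image)  # computed once, shared by all samples
    return [generate(content, sample_attribute(attr_dim, rng))
            for _ in range(num_samples)]
```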

Datasets

CelebA-HQ

AFHQ

CATS

FFHQ-Aging