What makes fake images detectable? Understanding properties that generalize

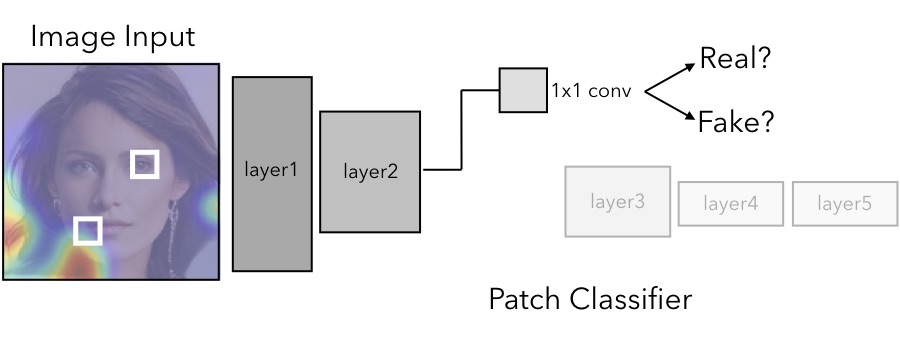

The quality of image generation and manipulation is reaching impressive levels, making it increasingly difficult for a human to distinguish between what is real and what is fake. However, deep networks can still pick up on the subtle artifacts in these doctored images. We seek to understand what properties of fake images make them detectable and identify what generalizes across different model architectures, datasets, and variations in training. We use a patch-based classifier with limited receptive fields to visualize which regions of fake images are more easily detectable. We further show a technique to exaggerate these detectable properties and demonstrate that, even when the image generator is adversarially finetuned against a fake image classifier, it is still imperfect and leaves detectable artifacts in certain image patches. Code is available at https://chail.github.io/patch-forensics/.
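The idea of scoring small regions independently and then visualizing which regions look most fake can be sketched in a few lines. This is a minimal illustration and not the authors' implementation: the sliding window stands in for a classifier with a limited receptive field, and `toy_score`, `patch_scores`, `image_score`, and `most_detectable_patch` are hypothetical names introduced here. A real detector would use a learned network in place of `toy_score`, and averaging is only one simple way to combine patch scores.

```python
from typing import Callable, List, Tuple

Image = List[List[float]]  # grayscale image as rows of pixel values


def patch_scores(image: Image, patch: int, stride: int,
                 score_fn: Callable[[Image], float]) -> List[List[float]]:
    """Score each patch x patch window on its own, producing a heatmap."""
    height, width = len(image), len(image[0])
    heatmap = []
    for top in range(0, height - patch + 1, stride):
        row = []
        for left in range(0, width - patch + 1, stride):
            window = [r[left:left + patch] for r in image[top:top + patch]]
            row.append(score_fn(window))
        heatmap.append(row)
    return heatmap


def toy_score(window: Image) -> float:
    """Hypothetical stand-in for a learned patch classifier.

    Uses mean absolute horizontal pixel difference as a crude
    'artifact energy', squashed into the range [0, 1).
    """
    diffs = [abs(r[i + 1] - r[i]) for r in window for i in range(len(r) - 1)]
    energy = sum(diffs) / len(diffs)
    return energy / (1.0 + energy)


def image_score(heatmap: List[List[float]]) -> float:
    """Combine patch scores into one image-level score by averaging."""
    flat = [s for row in heatmap for s in row]
    return sum(flat) / len(flat)


def most_detectable_patch(heatmap: List[List[float]]) -> Tuple[int, int]:
    """Return the (row, col) heatmap index of the highest-scoring patch."""
    _, index = max((s, (i, j))
                   for i, row in enumerate(heatmap)
                   for j, s in enumerate(row))
    return index


# Toy input: a flat 8x8 image with a checkerboard "artifact" in one corner.
image = [[0.0] * 8 for _ in range(8)]
for y in range(4, 8):
    for x in range(4, 8):
        image[y][x] = float((x + y) % 2)

heatmap = patch_scores(image, patch=4, stride=4, score_fn=toy_score)
print(heatmap)
print(most_detectable_patch(heatmap))
print(image_score(heatmap))
```

Because each window is scored without seeing the rest of the image, the heatmap localizes where the detectable signal sits, which is the property the patch-based visualization relies on.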

ECCV 2020

CelebA

FFHQ

CelebA-HQ

FaceForensics++
