VRMN-bD: A Multi-modal Natural Behavior Dataset of Immersive Human Fear Responses in VR Stand-up Interactive Games

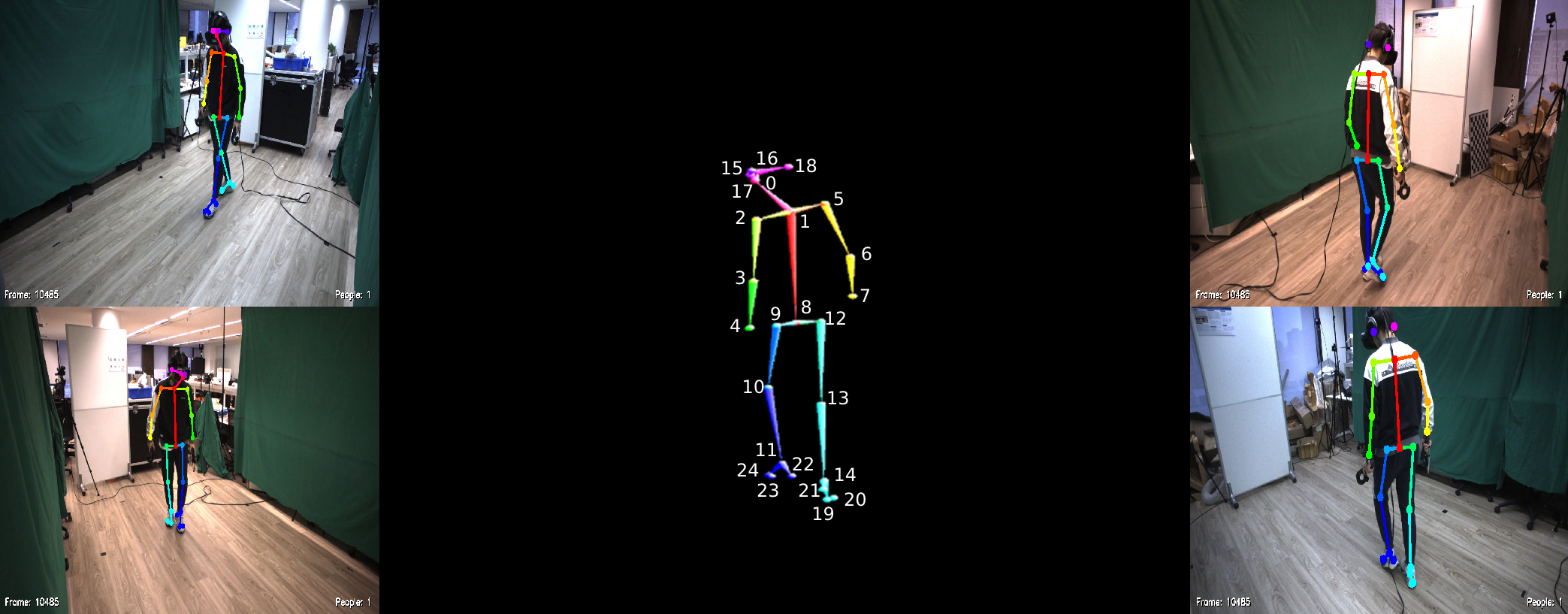

Understanding and recognizing emotions are important and challenging issues in the metaverse era. Understanding, identifying, and predicting fear, which is one of the fundamental human emotions, in virtual reality (VR) environments plays an essential role in immersive game development, scene development, and next-generation virtual human-computer interaction applications. In this article, we used VR horror games as a medium to analyze fear emotions by collecting multi-modal data (posture, audio, and physiological signals) from 23 players. We used an LSTM-based model to predict fear with accuracies of 65.31% and 90.47% under 6-level classification (no fear and five different levels of fear) and 2-level classification (no fear and fear), respectively. We constructed a multi-modal natural behavior dataset of immersive human fear responses (VRMN-bD) and compared it with existing relevant advanced datasets. The results show that our dataset has fewer limitations in terms of collection method, data scale and audience scope. We are unique and advanced in targeting multi-modal datasets of fear and behavior in VR stand-up interactive environments. Moreover, we discussed the implications of this work for communities and applications. The dataset and pre-trained model are available at https://github.com/KindOPSTAR/VRMN-bD.

PDF Abstract