The Mind's Eye: Visualizing Class-Agnostic Features of CNNs

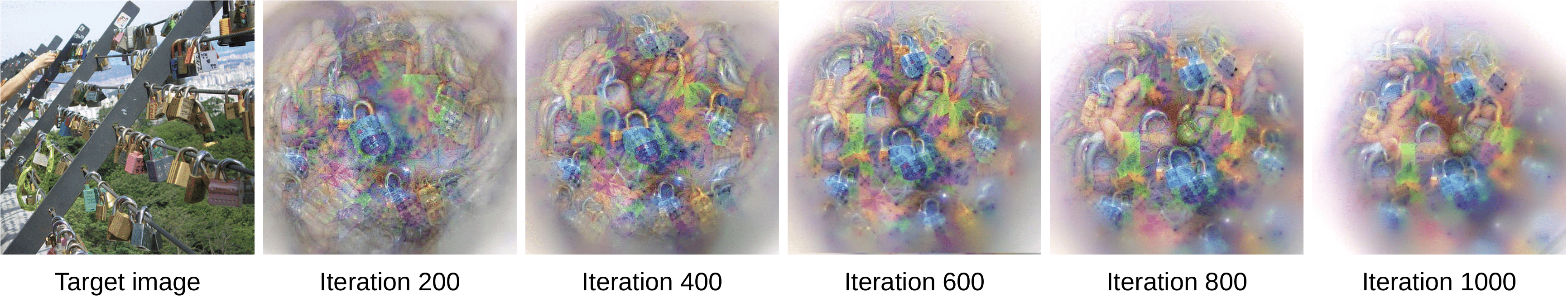

Visual interpretability of Convolutional Neural Networks (CNNs) has gained significant popularity because of the challenges that CNN complexity poses for understanding their inner workings. Although many techniques have been proposed to visualize class features of CNNs, most of them do not provide a correspondence between inputs and the extracted features in specific layers. This prevents the discovery of the stimuli to which each layer responds best. We propose an approach to visually interpret CNN features given a set of images by creating corresponding images that depict the most informative features of a specific layer. Exploring features in this class-agnostic manner allows for a greater focus on the feature extractor of CNNs. Our method uses a dual-objective activation maximization and distance minimization loss, without requiring a generator network or modifications to the original model. This limits the number of FLOPs to that of the original network. We demonstrate the visualization quality on widely used architectures.
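
The abstract does not spell out the exact form of the dual-objective loss, so the following is only a minimal sketch of one plausible reading: maximize the mean activation of a chosen layer while keeping the layer's features close to those of a reference image. Everything here is an assumption made for illustration: the toy softplus layer standing in for a CNN layer, the weighting `lam`, the squared-distance term, the finite-difference gradient used in place of backpropagation, and the names `layer`, `dual_loss`, and `visualize`.

```python
import math
import random

def layer(x, W):
    """Toy stand-in for one CNN layer (assumption): softplus(W @ x)."""
    return [math.log1p(math.exp(sum(w * xi for w, xi in zip(row, x)))) for row in W]

def dual_loss(x, ref_feats, W, lam=0.1):
    """Assumed dual objective: -mean activation + lam * squared feature distance."""
    feats = layer(x, W)
    activation = sum(feats) / len(feats)                            # term to maximize
    distance = sum((f - r) ** 2 for f, r in zip(feats, ref_feats))  # term to minimize
    return -activation + lam * distance

def numeric_grad(f, x, eps=1e-5):
    """Central finite differences; a real setup would use autograd instead."""
    grad = []
    for i in range(len(x)):
        xp, xm = x[:], x[:]
        xp[i] += eps
        xm[i] -= eps
        grad.append((f(xp) - f(xm)) / (2 * eps))
    return grad

def visualize(ref_input, W, steps=200, lr=0.05, lam=0.1, seed=0):
    """Optimize a synthetic input for the layer; the model weights W are never changed."""
    rng = random.Random(seed)
    ref_feats = layer(ref_input, W)          # features of the reference image
    x = [rng.uniform(-0.1, 0.1) for _ in ref_input]
    initial = dual_loss(x, ref_feats, W, lam)
    for _ in range(steps):
        g = numeric_grad(lambda v: dual_loss(v, ref_feats, W, lam), x)
        x = [xi - lr * gi for xi, gi in zip(x, g)]
    return x, initial, dual_loss(x, ref_feats, W, lam)

# Hypothetical 2-unit layer over a 3-value "image".
W = [[1.0, -0.5, 0.2], [0.3, 0.8, -0.1]]
x_opt, loss_before, loss_after = visualize([1.0, 0.5, -0.2], W)
```

Only the input is updated in this sketch, which mirrors the abstract's claim that neither a generator network nor changes to the original model are needed; how the authors actually balance or define the two terms may differ.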


ImageNet
