TedEval: A Fair Evaluation Metric for Scene Text Detectors

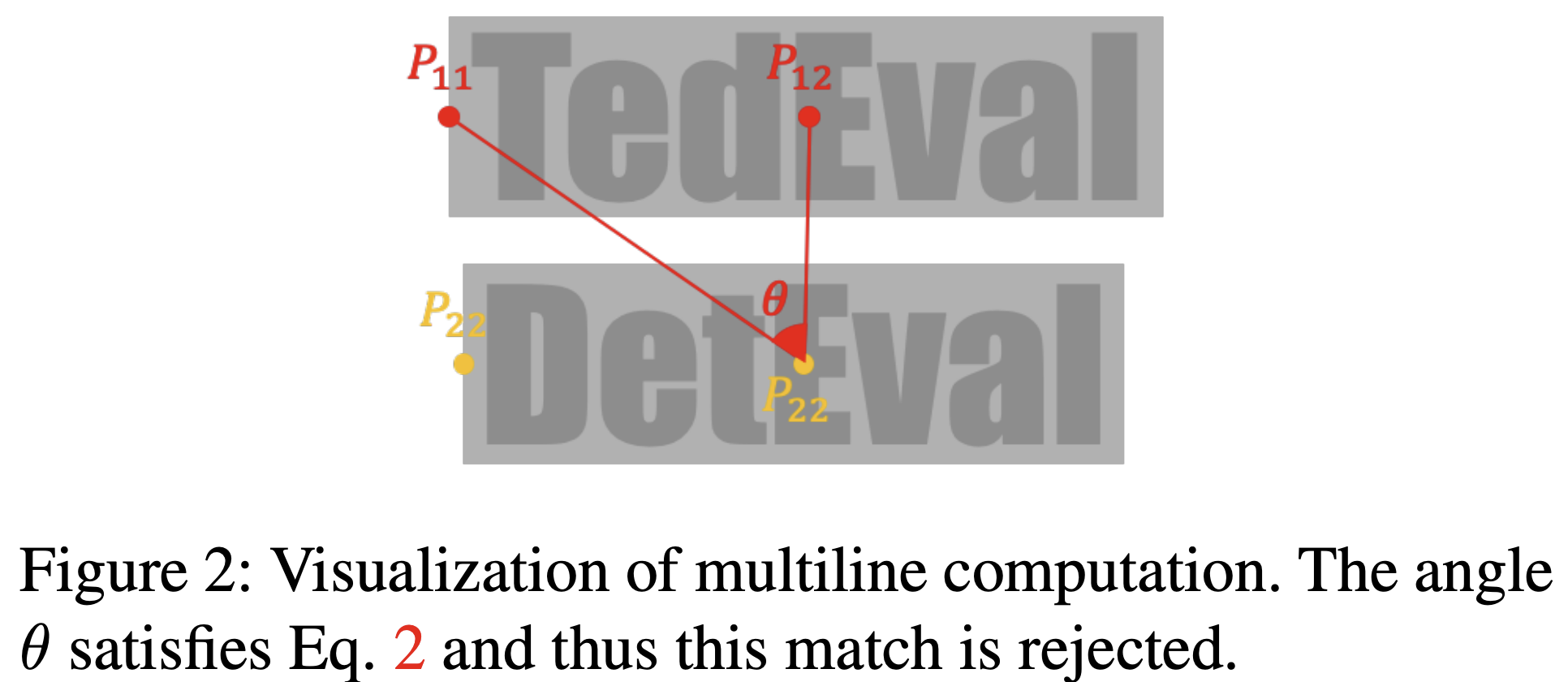

Despite the recent success of scene text detection methods, common evaluation metrics fail to provide a fair and reliable comparison among detectors. They fall short in reflecting the inherent characteristics of text detection tasks and cannot address issues such as granularity, multiline, and character incompleteness. In this paper, we propose a novel evaluation protocol called TedEval (Text detector Evaluation), which evaluates text detections through instance-level matching and character-level scoring. Built on a firm standard that rewards behaviors leading to successful recognition, TedEval can serve as a reliable standard for comparing and quantifying detection quality across all difficulty levels. In this regard, we believe that TedEval can play a key role in developing state-of-the-art scene text detectors. The code is publicly available at https://github.com/clovaai/TedEval.
