TEA: Test-time Energy Adaptation

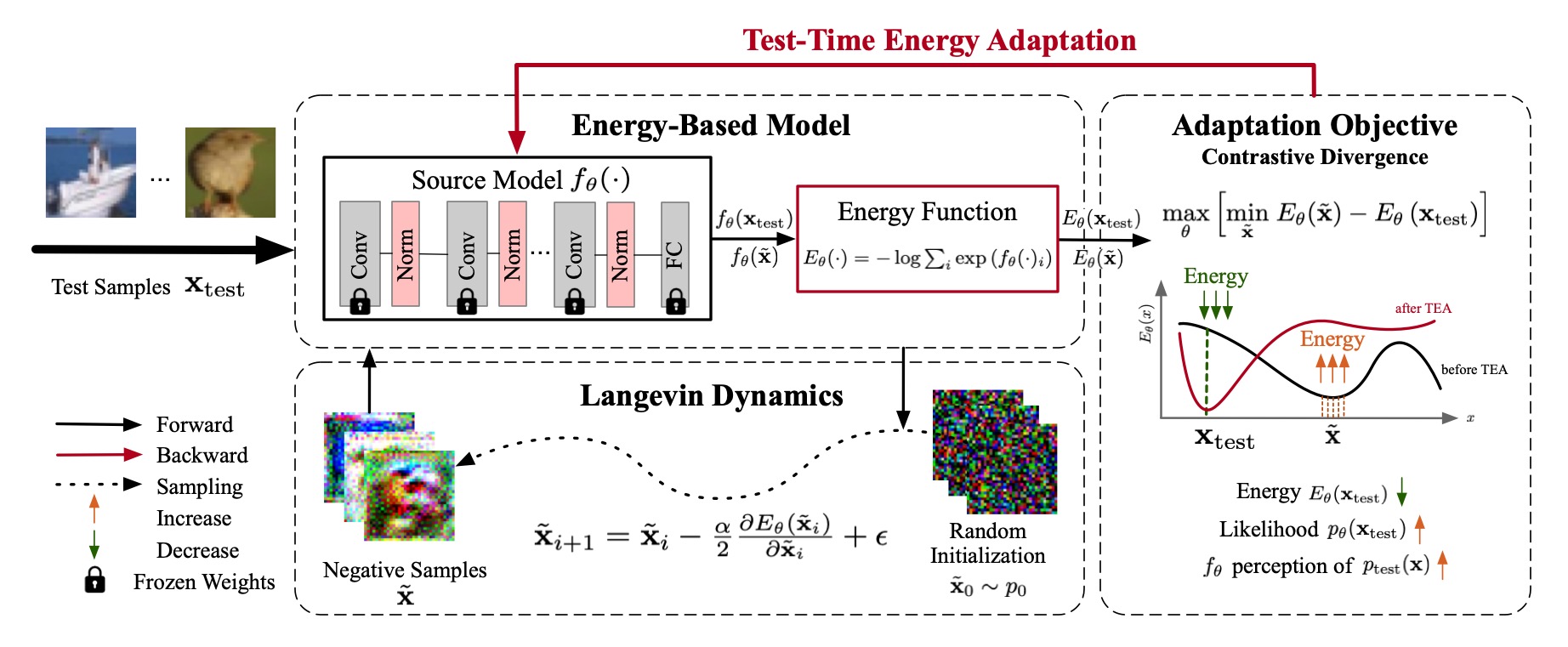

Test-time adaptation (TTA) aims to improve model generalizability when test data diverges from training distribution, offering the distinct advantage of not requiring access to training data and processes, especially valuable in the context of large pre-trained models. However, current TTA methods fail to address the fundamental issue: covariate shift, i.e., the decreased generalizability can be attributed to the model's reliance on the marginal distribution of the training data, which may impair model calibration and introduce confirmation bias. To address this, we propose a novel energy-based perspective, enhancing the model's perception of target data distributions without requiring access to training data or processes. Building on this perspective, we introduce $\textbf{T}$est-time $\textbf{E}$nergy $\textbf{A}$daptation ($\textbf{TEA}$), which transforms the trained classifier into an energy-based model and aligns the model's distribution with the test data's, enhancing its ability to perceive test distributions and thus improving overall generalizability. Extensive experiments across multiple tasks, benchmarks and architectures demonstrate TEA's superior generalization performance against state-of-the-art methods. Further in-depth analyses reveal that TEA can equip the model with a comprehensive perception of test distribution, ultimately paving the way toward improved generalization and calibration.

PDF Abstract

CIFAR-10

CIFAR-10

CIFAR-100

CIFAR-100

Tiny ImageNet

Tiny ImageNet

PACS

PACS

ImageNet-C

ImageNet-C