Task-Aware Machine Unlearning and Its Application in Load Forecasting

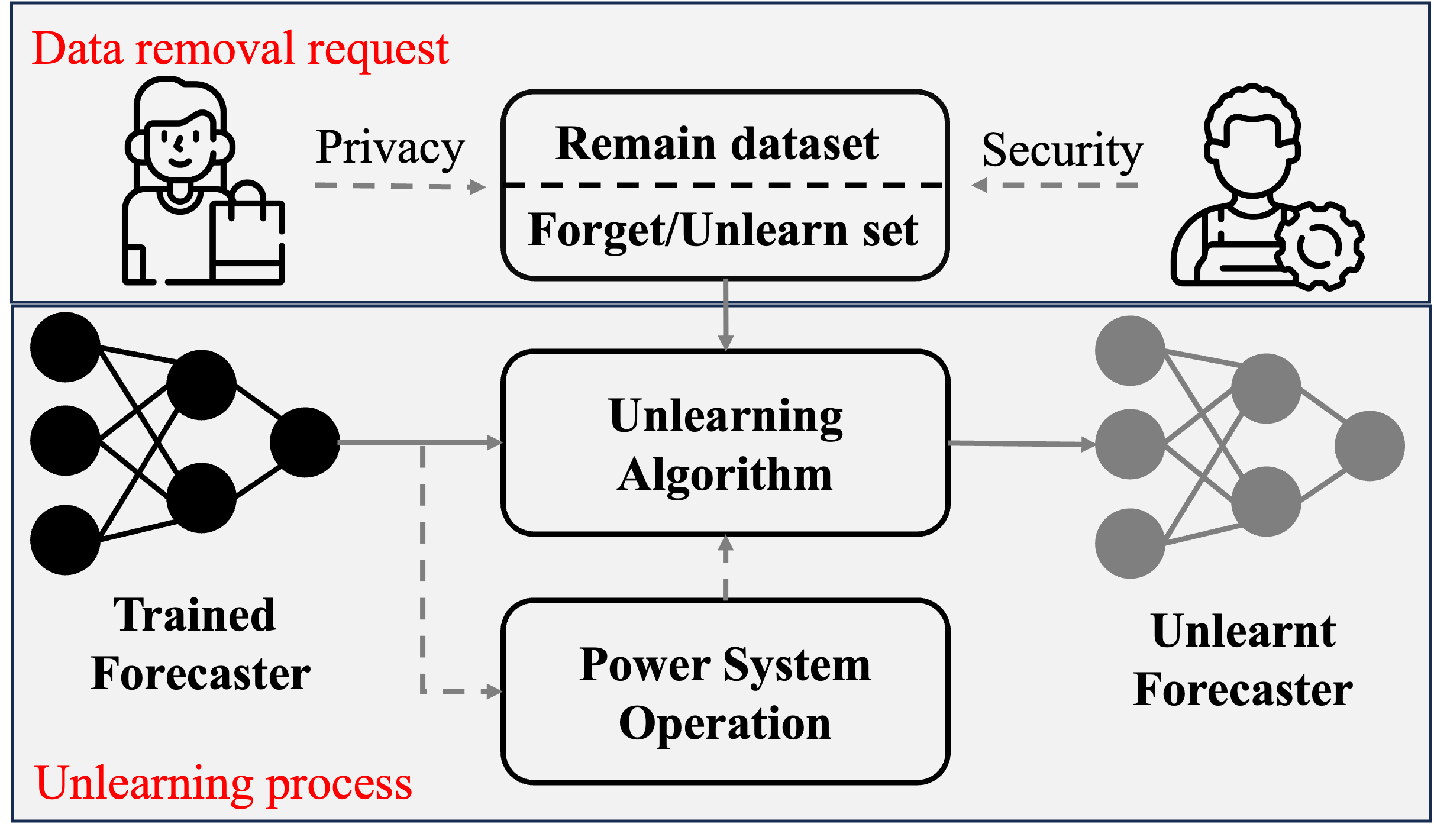

Data privacy and security have become a non-negligible factor in load forecasting. Previous researches mainly focus on training stage enhancement. However, once the model is trained and deployed, it may need to `forget' (i.e., remove the impact of) part of training data if the these data are found to be malicious or as requested by the data owner. This paper introduces the concept of machine unlearning which is specifically designed to remove the influence of part of the dataset on an already trained forecaster. However, direct unlearning inevitably degrades the model generalization ability. To balance between unlearning completeness and model performance, a performance-aware algorithm is proposed by evaluating the sensitivity of local model parameter change using influence function and sample re-weighting. Furthermore, we observe that the statistical criterion such as mean squared error, cannot fully reflect the operation cost of the downstream tasks in power system. Therefore, a task-aware machine unlearning is proposed whose objective is a trilevel optimization with dispatch and redispatch problems considered. We theoretically prove the existence of the gradient of such an objective, which is key to re-weighting the remaining samples. We tested the unlearning algorithms on linear, CNN, and MLP-Mixer based load forecasters with a realistic load dataset. The simulation demonstrates the balance between unlearning completeness and operational cost. All codes can be found at https://github.com/xuwkk/task_aware_machine_unlearning.

PDF Abstract