Switchable Self-attention Module

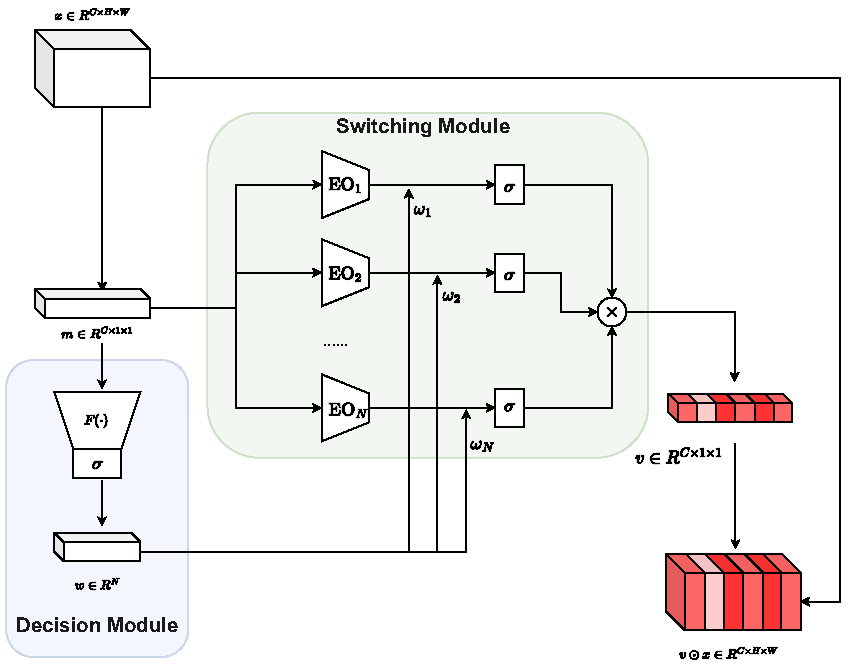

Attention mechanisms have achieved great success in visual recognition. Many works aim to improve their effectiveness by carefully designing the structure of the attention operator. Such designs require extensive experiments to find the optimal settings whenever the scenario changes, which consumes considerable time and computational resources. In addition, a neural network usually contains many layers, yet most studies apply the same attention module to all of them, which limits further performance gains from the self-attention mechanism. To address these problems, we propose a switchable self-attention module, SEM. Based on the input to the attention module and a set of alternative attention operators, SEM automatically decides which operators to select and how to integrate them when computing attention maps. Extensive experiments on widely used benchmark datasets and popular self-attention networks demonstrate the effectiveness of SEM.
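To make the select-and-integrate idea concrete, the following is a minimal sketch in plain Python, not the authors' implementation. It assumes channel attention over a pooled descriptor, a softmax decision layer, and three candidate operators (a fully connected bottleneck, a 1D convolution across channels, and a per-channel affine scale). None of these choices is stated in the abstract, and every name in the code is hypothetical.

```python
import math
import random


def sigmoid(v):
    return [1.0 / (1.0 + math.exp(-x)) for x in v]


def softmax(v):
    m = max(v)
    e = [math.exp(x - m) for x in v]
    s = sum(e)
    return [x / s for x in e]


def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def global_avg_pool(fmap):
    # fmap: list of C channels, each a flat list of H*W activations.
    return [sum(ch) / len(ch) for ch in fmap]


# Candidate operators (assumed for illustration): each maps the pooled
# descriptor of length C to C attention logits.
def fc_operator(W1, W2):
    def op(z):
        hidden = [max(0.0, h) for h in matvec(W1, z)]  # ReLU bottleneck
        return matvec(W2, hidden)
    return op


def conv1d_operator(kernel):
    # Odd-length kernel slid across the channel axis with zero padding.
    def op(z):
        k, pad = len(kernel), len(kernel) // 2
        padded = [0.0] * pad + z + [0.0] * pad
        return [sum(kernel[j] * padded[i + j] for j in range(k))
                for i in range(len(z))]
    return op


def scale_operator(gamma, beta):
    def op(z):
        return [g * x + b for g, x, b in zip(gamma, z, beta)]
    return op


def switchable_attention(fmap, operators, W_decide):
    z = global_avg_pool(fmap)
    # Decision step (assumed form): one softmax weight per operator,
    # computed from the module's own input.
    weights = softmax(matvec(W_decide, z))
    # Each operator proposes its own attention map in (0, 1).
    candidates = [sigmoid(op(z)) for op in operators]
    # Integration step: weighted combination of the candidate maps.
    attn = [sum(w * m[c] for w, m in zip(weights, candidates))
            for c in range(len(z))]
    # Apply the attention map by rescaling each channel.
    out = [[a * x for x in ch] for a, ch in zip(attn, fmap)]
    return out, attn, weights


def rand_matrix(rows, cols, std=0.5):
    return [[random.gauss(0.0, std) for _ in range(cols)] for _ in range(rows)]


random.seed(0)
C, HW, R = 8, 16, 2  # channels, spatial size, bottleneck width
fmap = [[random.gauss(0.0, 1.0) for _ in range(HW)] for _ in range(C)]
operators = [
    fc_operator(rand_matrix(R, C), rand_matrix(C, R)),
    conv1d_operator([0.25, 0.5, 0.25]),
    scale_operator([1.0] * C, [0.0] * C),
]
out, attn, weights = switchable_attention(
    fmap, operators, rand_matrix(len(operators), C))
```

Because the decision weights depend on the input of each module instance, different layers can favor different operators without hand-tuning. In a trained network, the random matrices here would be learned parameters.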

CIFAR-10

CIFAR-100
