SHOT-VAE: Semi-supervised Deep Generative Models With Label-aware ELBO Approximations

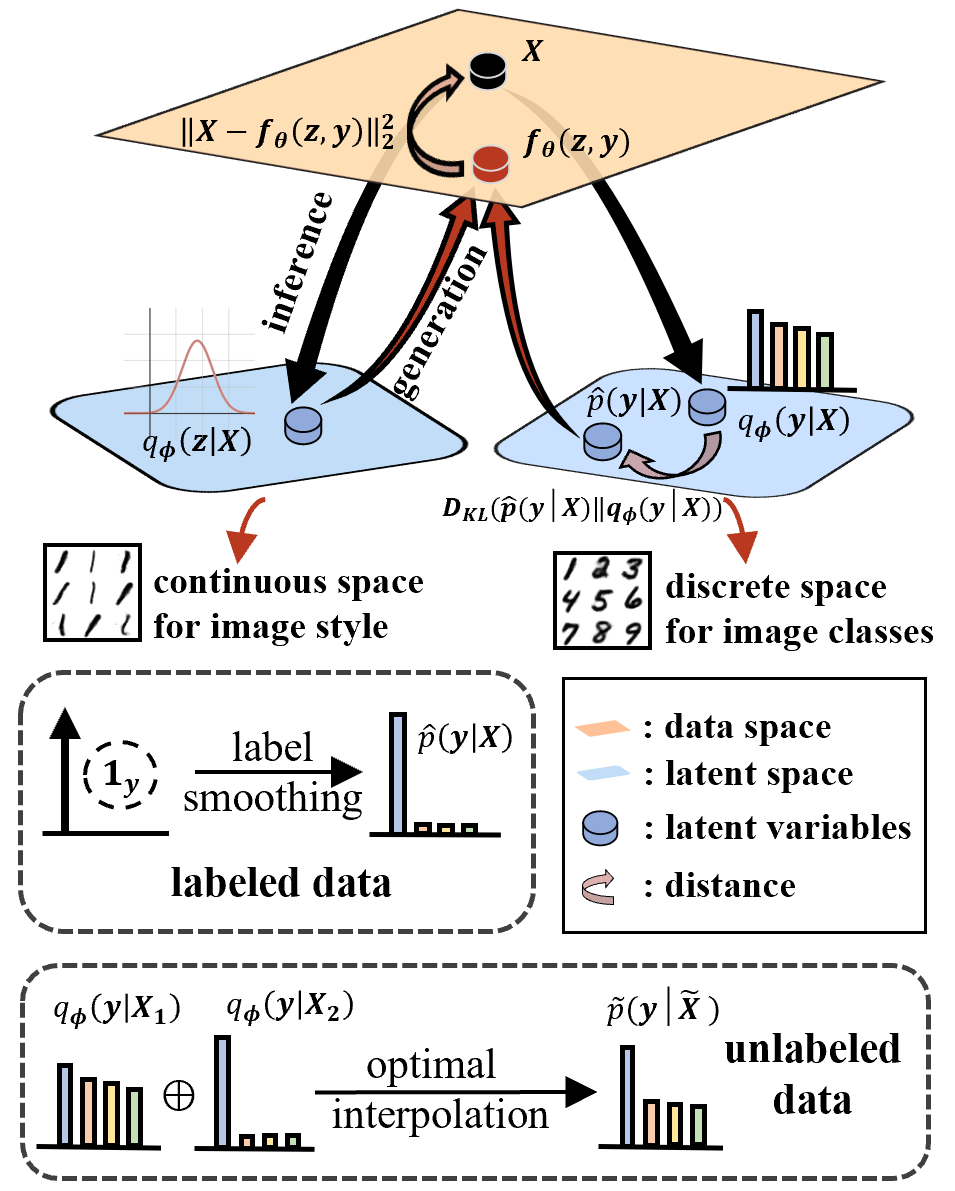

Semi-supervised variational autoencoders (VAEs) have obtained strong results, but have also encountered the challenge that good ELBO values do not always imply accurate inference results. In this paper, we investigate and propose two causes of this problem: (1) The ELBO objective cannot utilize the label information directly. (2) A bottleneck value exists and continuing to optimize ELBO after this value will not improve inference accuracy. On the basis of the experiment results, we propose SHOT-VAE to address these problems without introducing additional prior knowledge. The SHOT-VAE offers two contributions: (1) A new ELBO approximation named smooth-ELBO that integrates the label predictive loss into ELBO. (2) An approximation based on optimal interpolation that breaks the ELBO value bottleneck by reducing the margin between ELBO and the data likelihood. The SHOT-VAE achieves good performance with a 25.30% error rate on CIFAR-100 with 10k labels and reduces the error rate to 6.11% on CIFAR-10 with 4k labels.

PDF Abstract

CIFAR-10

CIFAR-10

CIFAR-100

CIFAR-100