Self-Supervised Multimodal Learning: A Survey

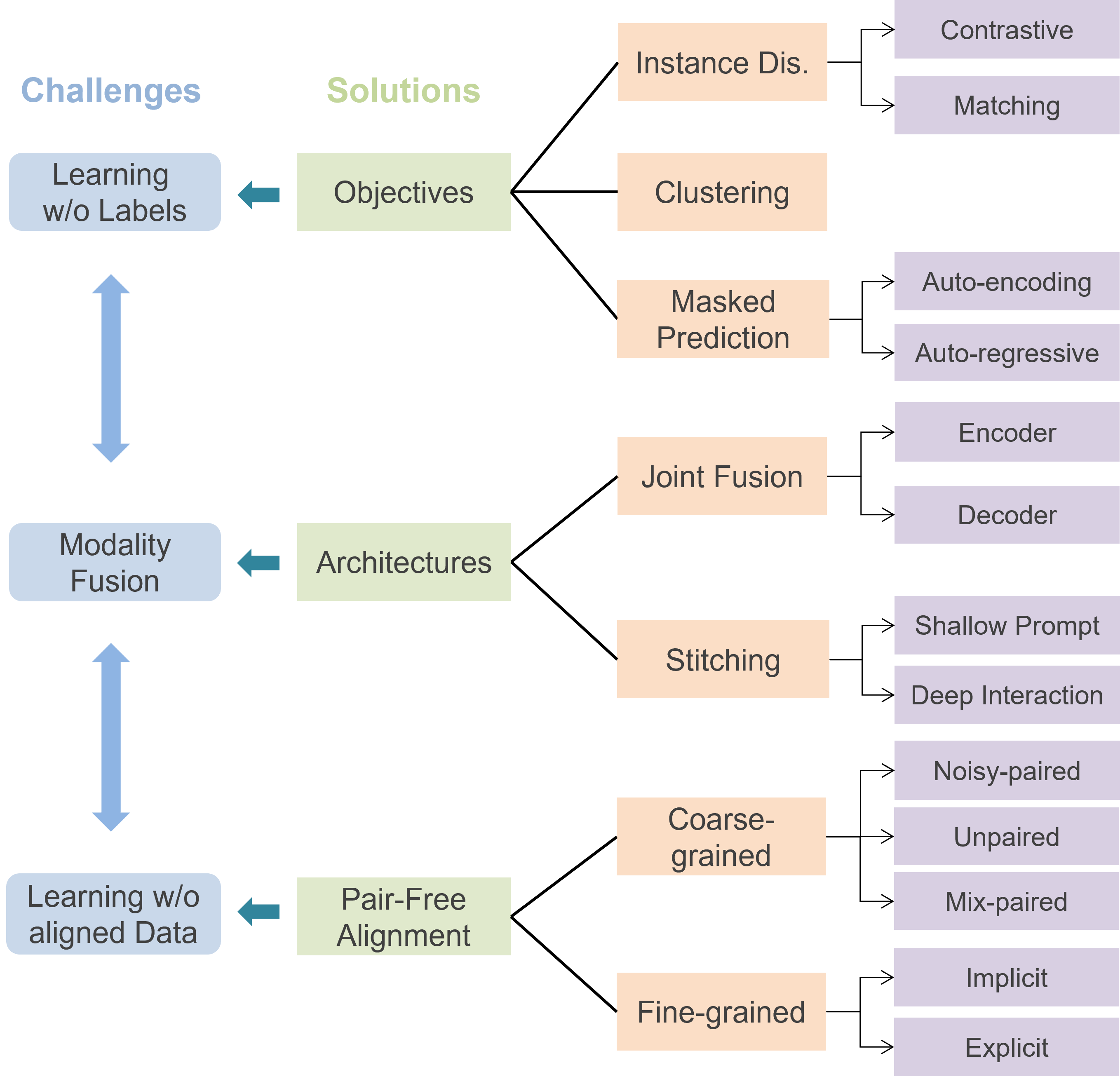

Multimodal learning, which aims to understand and analyze information from multiple modalities, has achieved substantial progress in the supervised regime in recent years. However, the heavy dependence on data paired with expensive human annotations impedes scaling up models. Meanwhile, given the availability of large-scale unannotated data in the wild, self-supervised learning has become an attractive strategy to alleviate the annotation bottleneck. Building on these two directions, self-supervised multimodal learning (SSML) provides ways to learn from raw multimodal data. In this survey, we provide a comprehensive review of the state-of-the-art in SSML, in which we elucidate three major challenges intrinsic to self-supervised learning with multimodal data: (1) learning representations from multimodal data without labels, (2) fusion of different modalities, and (3) learning with unaligned data. We then detail existing solutions to these challenges. Specifically, we consider (1) objectives for learning from multimodal unlabeled data via self-supervision, (2) model architectures from the perspective of different multimodal fusion strategies, and (3) pair-free learning strategies for coarse-grained and fine-grained alignment. We also review real-world applications of SSML algorithms in diverse fields such as healthcare, remote sensing, and machine translation. Finally, we discuss challenges and future directions for SSML. A collection of related resources can be found at: https://github.com/ys-zong/awesome-self-supervised-multimodal-learning.

PDF Abstract

MS COCO

MS COCO

Visual Question Answering

Visual Question Answering