Segment Any Anomaly without Training via Hybrid Prompt Regularization

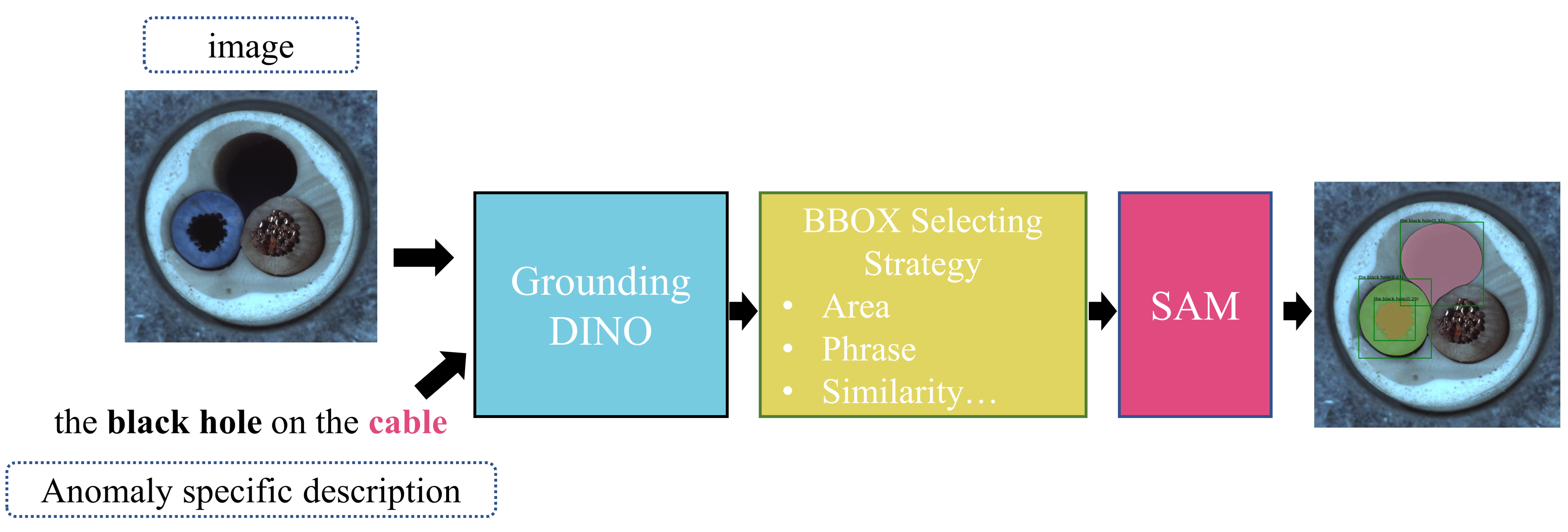

We present Segment Any Anomaly + (SAA+), a novel framework for zero-shot anomaly segmentation that uses hybrid prompt regularization to improve the adaptability of modern foundation models. Existing anomaly segmentation models typically rely on domain-specific fine-tuning, which limits their generalization across the countless possible anomaly patterns. Inspired by the strong zero-shot generalization of foundation models such as Segment Anything, we first explore assembling them to leverage diverse multi-modal prior knowledge for anomaly localization. To adapt the foundation models to anomaly segmentation without training any parameters, we further introduce hybrid prompts, derived from domain expert knowledge and target image context, as regularization. SAA+ achieves state-of-the-art performance in the zero-shot setting on several anomaly segmentation benchmarks, including VisA, MVTec-AD, MTD, and KSDD2. We will release the code at https://github.com/caoyunkang/Segment-Any-Anomaly.
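To make the idea of prompts acting as training-free regularization more concrete, the following is a minimal, purely illustrative sketch. It is not the SAA+ implementation: the function name `regularize_candidates`, the area-fraction rule, the top-k rule, and both thresholds are assumptions introduced here for illustration. It assumes some upstream foundation models have already produced candidate binary masks with confidence scores, and shows how simple hand-specified rules could filter those candidates and fuse them into an anomaly map without updating any model parameters.

```python
def regularize_candidates(masks, scores, max_area_frac=0.3, top_k=2):
    """Filter candidate masks with hand-written rules and fuse the survivors.

    masks:  non-empty list of binary masks, each a list of rows of 0/1 values
    scores: one confidence score per mask
    Both rules below are hypothetical stand-ins for prompt-style priors.
    """
    kept = []
    for idx, mask in enumerate(masks):
        n_pixels = sum(len(row) for row in mask)
        area_frac = sum(sum(row) for row in mask) / n_pixels
        # Assumed expert-style rule: an anomaly rarely covers most of the image.
        if area_frac <= max_area_frac:
            kept.append(idx)

    # Assumed context-style rule: keep only the k most confident candidates.
    kept.sort(key=lambda i: scores[i], reverse=True)
    kept = kept[:top_k]

    # Fuse surviving masks into a per-pixel anomaly map (max score per pixel).
    height, width = len(masks[0]), len(masks[0][0])
    anomaly_map = [[0.0] * width for _ in range(height)]
    for i in kept:
        for y in range(height):
            for x in range(width):
                if masks[i][y][x]:
                    anomaly_map[y][x] = max(anomaly_map[y][x], scores[i])
    return anomaly_map, kept
```

In this sketch, a candidate covering the whole image is discarded regardless of its score, which illustrates how a prior expressed as a rule can suppress false alarms that a purely score-based selection would keep.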

MVTecAD

VisA
