Riemannian stochastic variance reduced gradient algorithm with retraction and vector transport

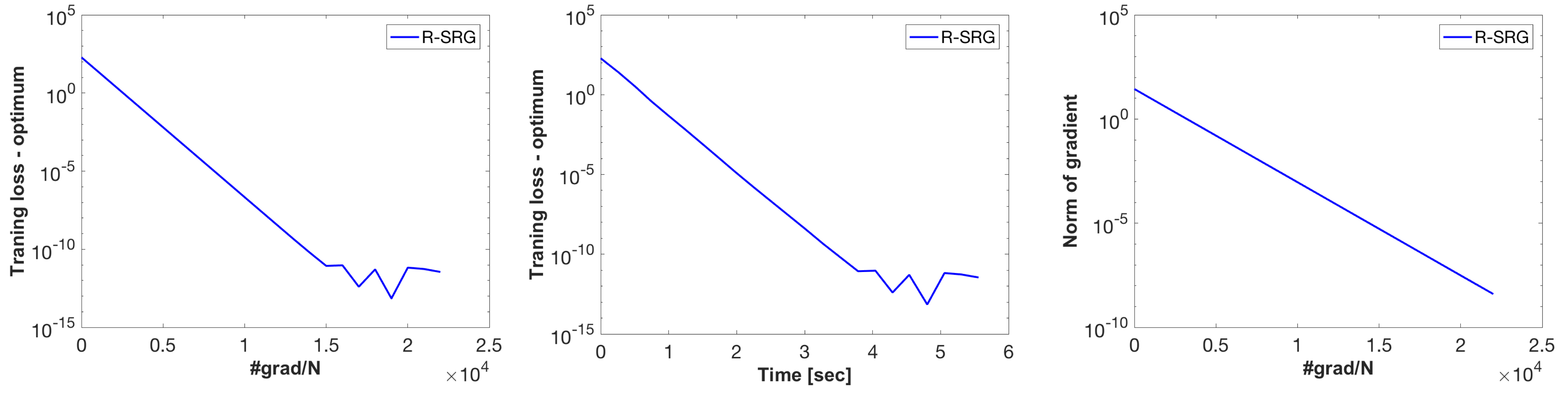

In recent years, stochastic variance reduction algorithms have attracted considerable attention for minimizing the average of a large but finite number of loss functions. This paper proposes a novel Riemannian extension of the Euclidean stochastic variance reduced gradient (R-SVRG) algorithm to a manifold search space. The key challenges of averaging, adding, and subtracting multiple gradients are addressed with retraction and vector transport. For the proposed algorithm, we present a global convergence analysis with a decaying step size as well as a local convergence rate analysis with a fixed step size under some natural assumptions. In addition, the proposed algorithm is applied to the computation problem of the Riemannian centroid on the symmetric positive definite (SPD) manifold as well as the principal component analysis and low-rank matrix completion problems on the Grassmann manifold. The results show that the proposed algorithm outperforms the standard Riemannian stochastic gradient descent algorithm in each case.

PDF Abstract