PromptKD: Unsupervised Prompt Distillation for Vision-Language Models

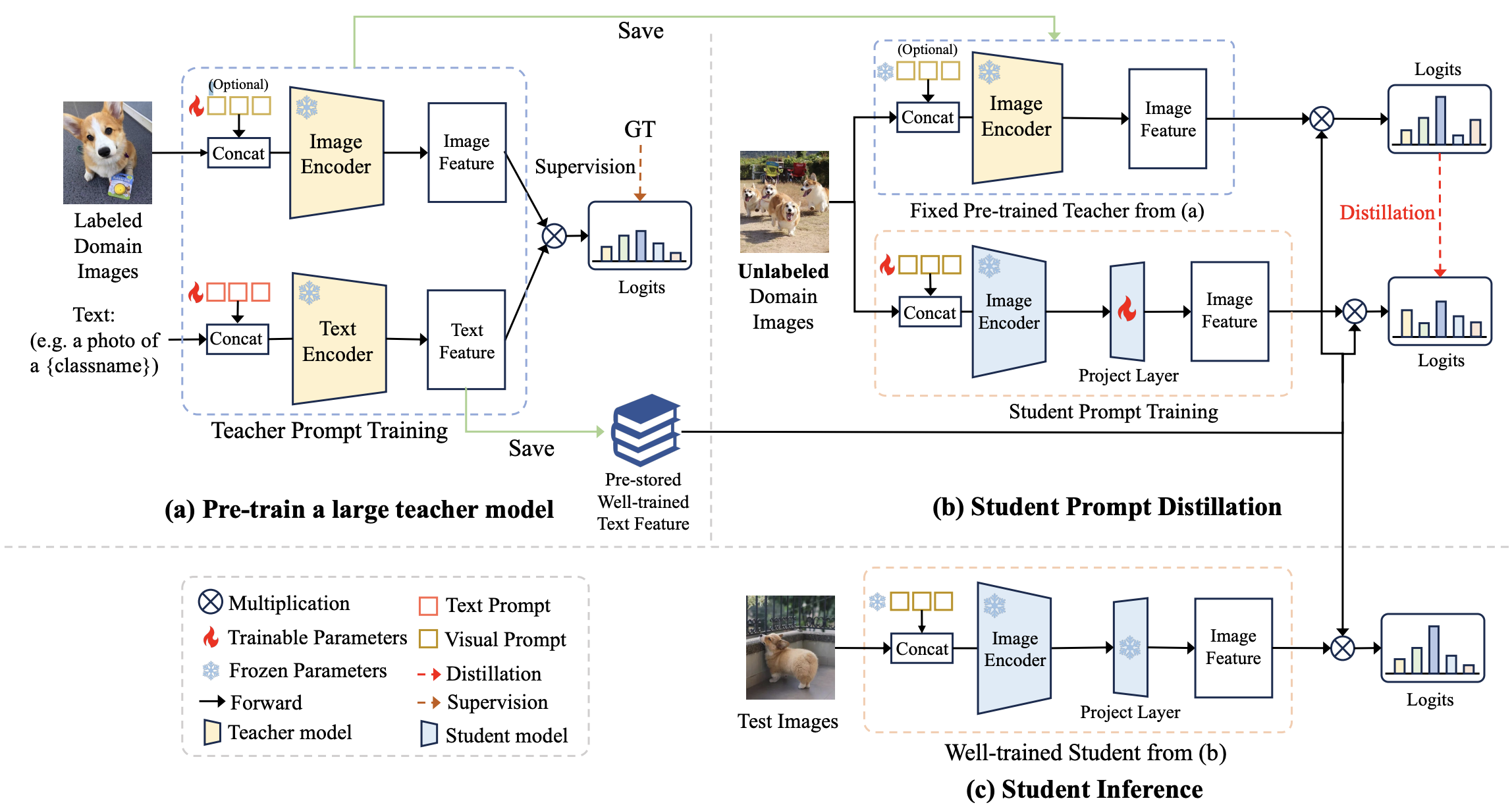

Prompt learning has emerged as a valuable technique for enhancing vision-language models (VLMs) such as CLIP on downstream tasks in specific domains. Existing work mainly focuses on designing various learning forms of prompts, neglecting the potential of prompts as effective distillers for learning from larger teacher models. In this paper, we introduce an unsupervised domain prompt distillation framework, which aims to transfer the knowledge of a larger teacher model to a lightweight target model through prompt-driven imitation using unlabeled domain images. Specifically, our framework consists of two distinct stages. In the initial stage, we pre-train a large CLIP teacher model using domain (few-shot) labels. After pre-training, we leverage the unique decoupled-modality characteristics of CLIP by pre-computing the text features once through the teacher text encoder and storing them as class vectors. In the subsequent stage, the stored class vectors are shared across the teacher and student image encoders for calculating the predicted logits. Further, we align the logits of the teacher and student models via KL divergence, encouraging the student image encoder to generate probability distributions similar to the teacher's through the learnable prompts. The proposed prompt distillation process eliminates the reliance on labeled data, enabling the algorithm to leverage a vast amount of unlabeled images within the domain. Finally, the well-trained student image encoder and the pre-stored text features (class vectors) are used for inference. To the best of our knowledge, we are the first to (1) perform unsupervised domain-specific prompt-driven knowledge distillation for CLIP, and (2) establish a practical pre-storing mechanism of text features as shared class vectors between teacher and student. Extensive experiments on 11 datasets demonstrate the effectiveness of our method.
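The second stage described in the abstract (shared class vectors, logits from both image encoders, KL alignment) can be illustrated with a small sketch. This is not the authors' implementation: the function names, the logit scale of 100, the temperature `tau`, and the toy feature vectors are all assumptions made for illustration, and the sketch assumes teacher and student image features already live in the same space as the stored class vectors.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def class_logits(image_feature, class_vectors, scale=100.0):
    """Scaled cosine similarity between one image feature and each stored class vector.

    `scale` is an assumed value, not taken from the paper.
    """
    f = l2_normalize(image_feature)
    return [scale * sum(a * b for a, b in zip(f, l2_normalize(c)))
            for c in class_vectors]

def softmax(logits, tau=1.0):
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    m = max(logits)
    exps = [math.exp((z - m) / tau) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))

def distillation_loss(teacher_feature, student_feature, class_vectors, tau=1.0):
    """KL divergence from the teacher's to the student's class distribution.

    Both distributions are computed against the SAME pre-stored class vectors,
    so no labels and no text encoder are needed at this stage.
    """
    p_teacher = softmax(class_logits(teacher_feature, class_vectors), tau)
    p_student = softmax(class_logits(student_feature, class_vectors), tau)
    return kl_divergence(p_teacher, p_student)

# Toy data (hypothetical): three classes, three-dimensional features.
stored_class_vectors = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
teacher_feat = [0.9, 0.1, 0.0]
student_feat = [0.1, 0.9, 0.0]

loss_same = distillation_loss(teacher_feat, teacher_feat, stored_class_vectors)
loss_diff = distillation_loss(teacher_feat, student_feat, stored_class_vectors)
```

In this sketch the loss is zero when the student reproduces the teacher's features and grows as their predicted distributions diverge. In a real training loop only the student's learnable prompts would receive gradients from this loss, while the class vectors stay fixed.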


Results from the Paper

| Task | Dataset | Model | Metric Name | Metric Value | Global Rank | Benchmark |
|---|---|---|---|---|---|---|
| Prompt Engineering | Caltech-101 | PromptKD | Harmonic mean | 97.77 | #1 | |
| Prompt Engineering | DTD | PromptKD | Harmonic mean | 77.94 | #1 | |
| Prompt Engineering | EuroSAT | PromptKD | Harmonic mean | 89.14 | #1 | |
| Prompt Engineering | FGVC-Aircraft | PromptKD | Harmonic mean | 45.17 | #1 | |
| Prompt Engineering | Food-101 | PromptKD | Harmonic mean | 93.05 | #1 | |
| Prompt Engineering | ImageNet | PromptKD | Harmonic mean | 77.62 | #1 | |
| Prompt Engineering | Oxford 102 Flower | PromptKD | Harmonic mean | 90.24 | #1 | |
| Prompt Engineering | Oxford-IIIT Pet Dataset | PromptKD | Harmonic mean | 97.15 | #1 | |
| Prompt Engineering | Stanford Cars | PromptKD | Harmonic mean | 83.13 | #1 | |
| Prompt Engineering | SUN397 | PromptKD | Harmonic mean | 82.60 | #1 | |
| Prompt Engineering | UCF101 | PromptKD | Harmonic mean | 86.10 | #1 | |
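The table reports a harmonic mean but does not say which quantities it combines. In prompt-learning benchmarks of this kind it is typically the harmonic mean of accuracy on base (seen) and novel (unseen) classes; assuming that convention applies here, the metric can be computed as in the sketch below. The function name and the input values are hypothetical and are not results from the paper.

```python
def harmonic_mean(base_acc, novel_acc):
    """Harmonic mean of two accuracies (in percent).

    Assumption: the two inputs are base-class and novel-class accuracy.
    Returns 0.0 when both are zero to avoid division by zero.
    """
    if base_acc + novel_acc == 0:
        return 0.0
    return 2 * base_acc * novel_acc / (base_acc + novel_acc)

# Illustrative values only (not taken from the paper).
hm = harmonic_mean(80.0, 60.0)
```

The harmonic mean penalizes imbalance: it is always at most the arithmetic mean, so a model cannot score well by excelling on base classes while failing on novel ones.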
