Individual Privacy Accounting for Differentially Private Stochastic Gradient Descent

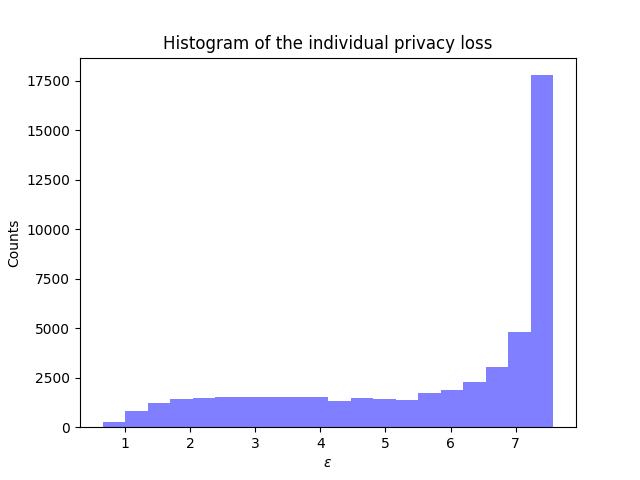

Differentially private stochastic gradient descent (DP-SGD) is the workhorse algorithm for recent advances in private deep learning. It provides a single privacy guarantee to all datapoints in the dataset. We propose output-specific $(\varepsilon,\delta)$-DP to characterize privacy guarantees for individual examples when releasing models trained by DP-SGD. We also design an efficient algorithm to investigate individual privacy across a number of datasets. We find that most examples enjoy stronger privacy guarantees than the worst-case bound. We further discover that the training loss and the privacy parameter of an example are well-correlated. This implies groups that are underserved in terms of model utility simultaneously experience weaker privacy guarantees. For example, on CIFAR-10, the average $\varepsilon$ of the class with the lowest test accuracy is 44.2\% higher than that of the class with the highest accuracy.

PDF Abstract

CIFAR-10

CIFAR-10

MNIST

MNIST

UTKFace

UTKFace