GuessWhat?! Visual object discovery through multi-modal dialogue

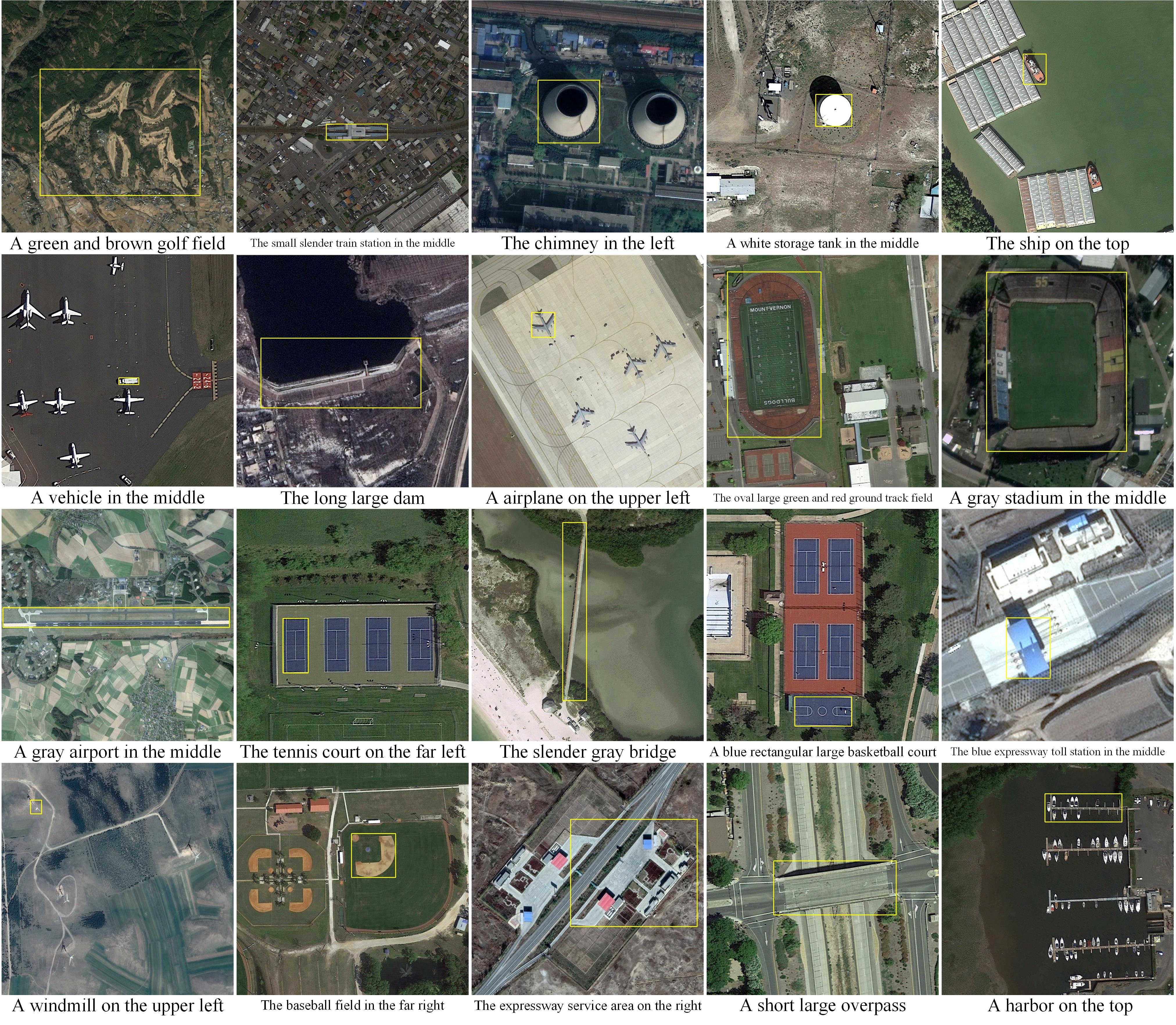

We introduce GuessWhat?!, a two-player guessing game as a testbed for research on the interplay of computer vision and dialogue systems. The goal of the game is to locate an unknown object in a rich image scene by asking a sequence of questions. Higher-level image understanding, such as spatial reasoning and language grounding, is required to solve the proposed task. Our key contribution is the collection of a large-scale dataset consisting of 150K human-played games with a total of 800K visual question-answer pairs on 66K images. We explain our design decisions in collecting the dataset and introduce the oracle and questioner tasks that are associated with the two players of the game. We prototype deep learning models to establish initial baselines for the introduced tasks.

CVPR 2017

Datasets

Introduced in the Paper:

GuessWhat?!

Used in the Paper:

MS COCO

Visual Question Answering

ReferItGame
