Graph Inference Acceleration by Learning MLPs on Graphs without Supervision

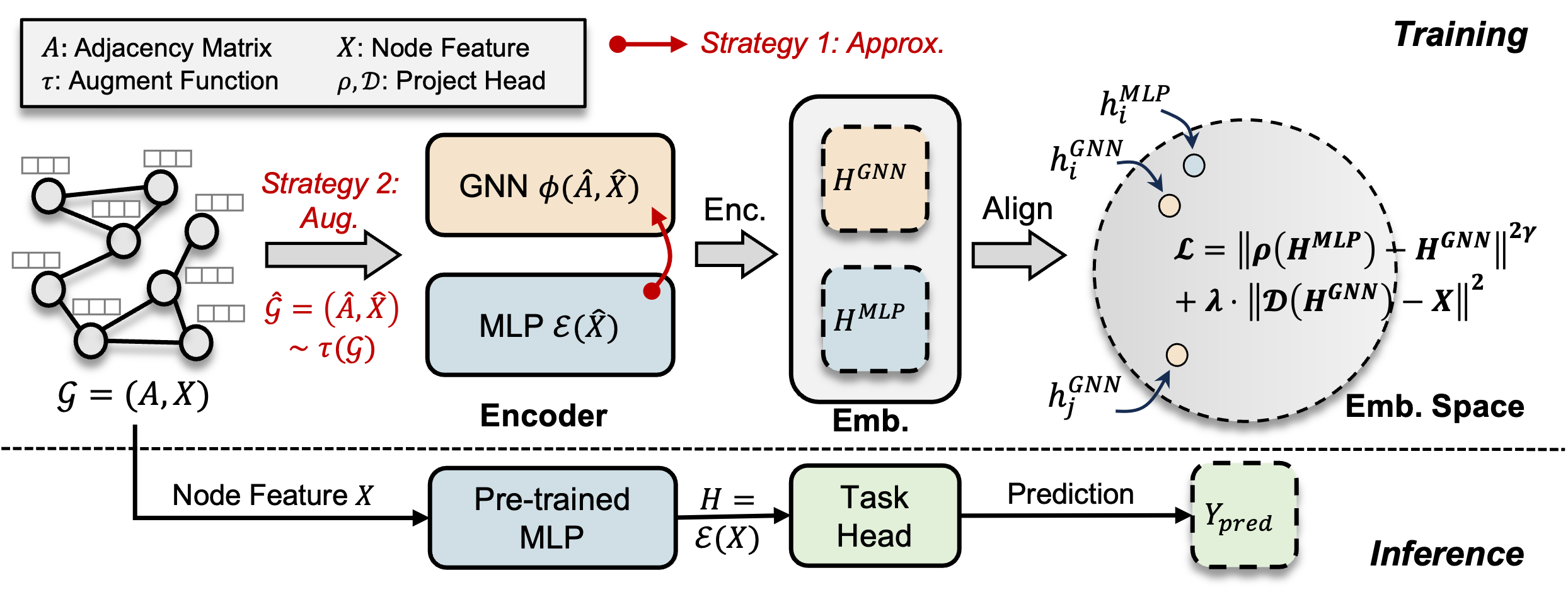

Graph Neural Networks (GNNs) have demonstrated effectiveness in various graph learning tasks, yet their reliance on message-passing constraints their deployment in latency-sensitive applications such as financial fraud detection. Recent works have explored distilling knowledge from GNNs to Multi-Layer Perceptrons (MLPs) to accelerate inference. However, this task-specific supervised distillation limits generalization to unseen nodes, which are prevalent in latency-sensitive applications. To this end, we present \textbf{\textsc{SimMLP}}, a \textbf{\textsc{Sim}}ple yet effective framework for learning \textbf{\textsc{MLP}}s on graphs without supervision, to enhance generalization. \textsc{SimMLP} employs self-supervised alignment between GNNs and MLPs to capture the fine-grained and generalizable correlation between node features and graph structures, and proposes two strategies to alleviate the risk of trivial solutions. Theoretically, we comprehensively analyze \textsc{SimMLP} to demonstrate its equivalence to GNNs in the optimal case and its generalization capability. Empirically, \textsc{SimMLP} outperforms state-of-the-art baselines, especially in settings with unseen nodes. In particular, it obtains significant performance gains {\bf (7$\sim$26\%)} over MLPs and inference acceleration over GNNs {\bf (90$\sim$126$\times$)} on large-scale graph datasets. Our codes are available at: \url{https://github.com/Zehong-Wang/SimMLP}.

PDF Abstract

Wiki-CS

Wiki-CS