Forgedit: Text Guided Image Editing via Learning and Forgetting

Text-guided image editing on real or synthetic images, given only the original image itself and the target text prompt as inputs, is a very general and challenging task. It requires an editing model to estimate by itself which part of the image should be edited, and then perform either rigid or non-rigid editing while preserving the characteristics of original image. In this paper, we design a novel text-guided image editing method, named as Forgedit. First, we propose a vision-language joint optimization framework capable of reconstructing the original image in 30 seconds, much faster than previous SOTA and much less overfitting. Then we propose a novel vector projection mechanism in text embedding space of Diffusion Models, which is capable to control the identity similarity and editing strength seperately. Finally, we discovered a general property of UNet in Diffusion Models, i.e., Unet encoder learns space and structure, Unet decoder learns appearance and identity. With such a property, we design forgetting mechanisms to successfully tackle the fatal and inevitable overfitting issues when fine-tuning Diffusion Models on one image, thus significantly boosting the editing capability of Diffusion Models. Our method, Forgedit, built on Stable Diffusion, achieves new state-of-the-art results on the challenging text-guided image editing benchmark: TEdBench, surpassing the previous SOTA methods such as Imagic with Imagen, in terms of both CLIP score and LPIPS score. Codes are available at https://github.com/witcherofresearch/Forgedit

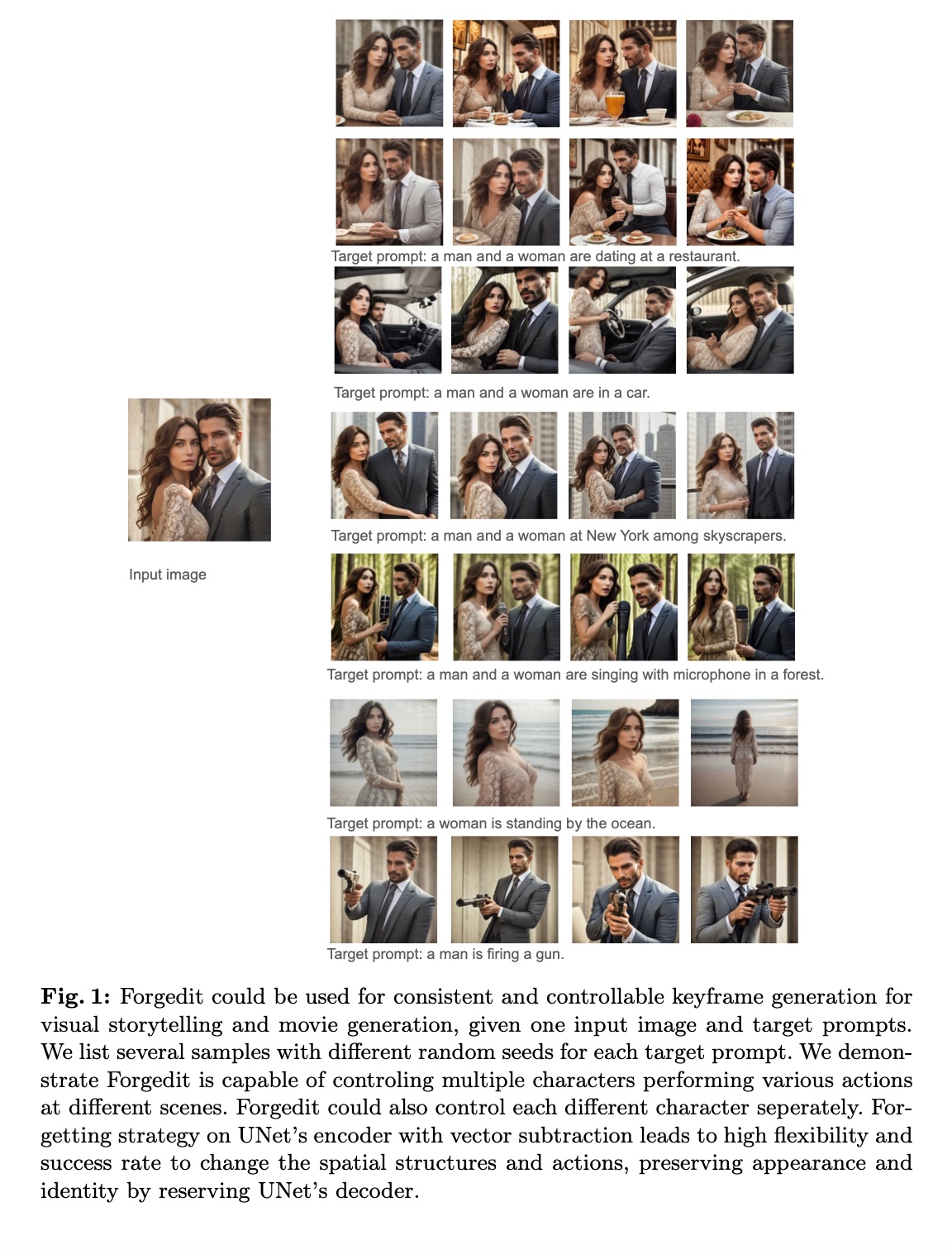

PDF Abstract