Exploring Iterative Refinement with Diffusion Models for Video Grounding

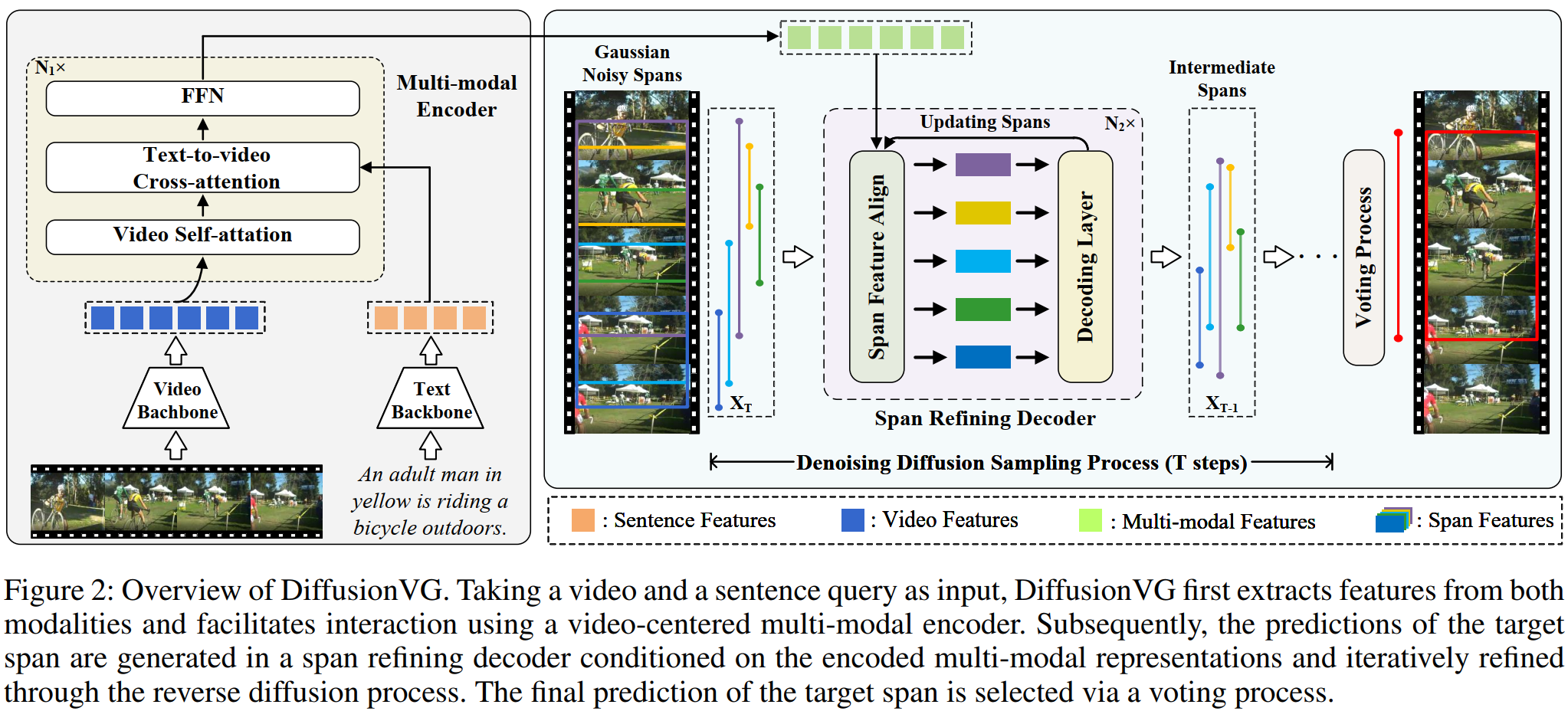

Video grounding aims to localize the target moment in an untrimmed video corresponding to a given sentence query. Existing methods typically select the best prediction from a set of predefined proposals or directly regress the target span in a single-shot manner, and therefore lack a systematic process for refining their predictions. In this paper, we propose DiffusionVG, a novel framework based on diffusion models that formulates video grounding as a conditional generation task, where the target span is generated from Gaussian noise inputs and iteratively refined in the reverse diffusion process. During training, DiffusionVG progressively adds noise to the target span with a fixed forward diffusion process and learns to recover the target span in the reverse diffusion process. During inference, DiffusionVG generates the target span from Gaussian noise inputs through the learned reverse diffusion process, conditioned on the video-sentence representations. Without bells and whistles, DiffusionVG achieves superior performance compared to existing well-crafted models on the mainstream Charades-STA, ActivityNet Captions, and TACoS benchmarks.
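The training and inference procedure described above can be illustrated with a minimal, stdlib-only sketch. It rests on several assumptions that the abstract does not state: the span is parameterized as a normalized (center, width) pair, noise follows a cosine schedule with `T = 1000` steps, the span is scaled by a hypothetical `SCALE` factor, and sampling uses deterministic DDIM updates with a denoiser that predicts the clean span (a design borrowed from diffusion-based detectors such as DiffusionDet). The `Denoiser` callable is a hypothetical stand-in for the learned network conditioned on video-sentence representations, and all function names here are illustrative, not DiffusionVG's actual code.

```python
import math
import random
from typing import Callable, List, Tuple

Span = Tuple[float, float]  # (center, width), both normalized to [0, 1]
# Hypothetical denoiser signature: (noisy span, timestep) -> predicted clean span.
Denoiser = Callable[[List[float], int], List[float]]

T = 1000     # number of forward diffusion steps (assumed value)
SCALE = 2.0  # scaling of the span signal relative to the noise (assumed value)


def alpha_bar(t: int) -> float:
    """Cumulative signal fraction at step t under a cosine noise schedule."""
    s = 0.008

    def f(k: int) -> float:
        return math.cos((k / T + s) / (1 + s) * math.pi / 2) ** 2

    return f(t) / f(0)  # equals 1.0 at t = 0 and is close to 0 at t = T


def to_signal(span: Span) -> List[float]:
    """Map a normalized span from [0, 1] to [-SCALE, SCALE]."""
    return [(v * 2.0 - 1.0) * SCALE for v in span]


def from_signal(x: List[float]) -> Span:
    """Map back to [0, 1] and clip, yielding a valid (center, width) span."""
    c, w = ((v / SCALE + 1.0) / 2.0 for v in x)
    return (min(max(c, 0.0), 1.0), min(max(w, 0.0), 1.0))


def training_pair(span: Span, rng: random.Random):
    """Forward process: corrupt the ground-truth span at a random step t.

    A denoiser would be trained to recover x0 from (x_t, t) plus the
    video-sentence features, e.g. with a regression loss on the span.
    """
    x0 = to_signal(span)
    t = rng.randint(1, T)
    ab = alpha_bar(t)
    x_t = [math.sqrt(ab) * v + math.sqrt(1.0 - ab) * rng.gauss(0.0, 1.0)
           for v in x0]
    return x_t, t, x0


def sample(denoiser: Denoiser, steps: int, rng: random.Random) -> Span:
    """Reverse process: start from Gaussian noise and refine with DDIM steps."""
    x = [rng.gauss(0.0, 1.0) for _ in range(2)]
    ts = [T - i * T // steps for i in range(steps)] + [0]  # e.g. 1000, 750, ..., 0
    for t, t_prev in zip(ts[:-1], ts[1:]):
        # The denoiser predicts the clean span; clamp it to the valid range.
        x0_pred = [min(max(v, -SCALE), SCALE) for v in denoiser(x, t)]
        ab, ab_prev = alpha_bar(t), alpha_bar(t_prev)
        # Noise implied by the current sample and the predicted clean span.
        eps = [(xi - math.sqrt(ab) * x0i) / math.sqrt(1.0 - ab)
               for xi, x0i in zip(x, x0_pred)]
        # Deterministic DDIM update (eta = 0) toward the earlier timestep.
        x = [math.sqrt(ab_prev) * x0i + math.sqrt(1.0 - ab_prev) * ei
             for x0i, ei in zip(x0_pred, eps)]
    return from_signal(x)


if __name__ == "__main__":
    rng = random.Random(0)
    target = (0.4, 0.3)
    # Hypothetical oracle denoiser that always predicts the true span.
    oracle = lambda x, t: to_signal(target)
    print(sample(oracle, steps=4, rng=rng))  # close to (0.4, 0.3)
```

With a perfect (oracle) denoiser, the final step (where `alpha_bar(0) == 1`) sets the sample to the predicted clean span, so the sketch simply returns the target. A learned denoiser would instead revise its estimate at each step, which is the iterative refinement the abstract contrasts with single-shot regression.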

ActivityNet Captions

Charades-STA