Explainable Deep One-Class Classification

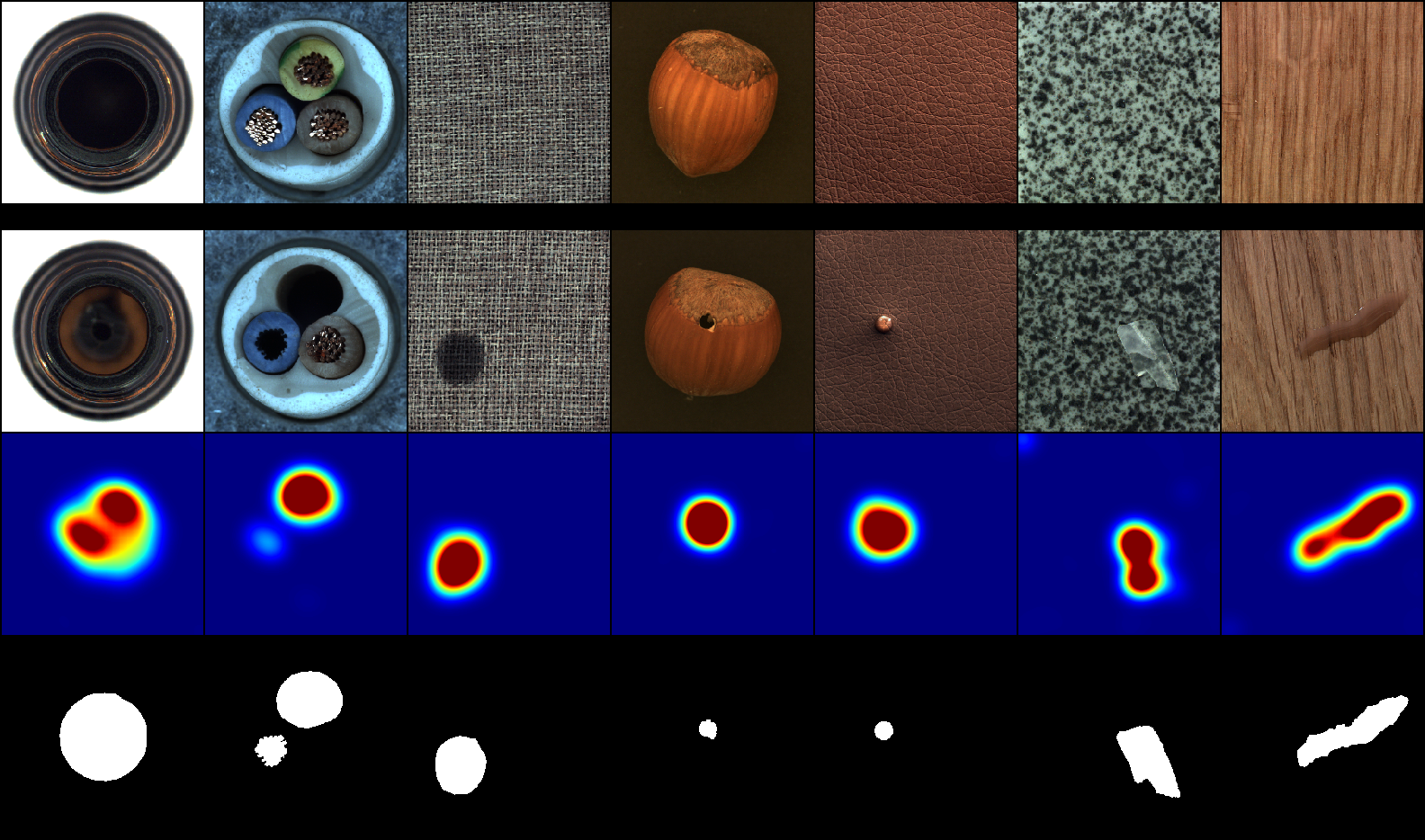

Deep one-class classification variants for anomaly detection learn a mapping that concentrates nominal samples in feature space, causing anomalies to be mapped away. Because this transformation is highly non-linear, finding interpretations poses a significant challenge. In this paper, we present an explainable deep one-class classification method, Fully Convolutional Data Description (FCDD), in which the mapped samples themselves serve as an explanation heatmap. FCDD yields competitive detection performance and provides reasonable explanations on common anomaly detection benchmarks with CIFAR-10 and ImageNet. On MVTec-AD, a recent manufacturing dataset offering ground-truth anomaly maps, FCDD sets a new state of the art in the unsupervised setting. Our method can incorporate ground-truth anomaly maps during training, and using even a few of these (~5) improves performance significantly. Finally, using FCDD's explanations, we demonstrate the vulnerability of deep one-class classification models to spurious image features such as image watermarks.
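To make the idea of "mapped samples as heatmap" concrete, here is a minimal sketch in plain Python. It assumes the network's output is a 2D feature map, that each entry is passed through a pseudo-Huber transform to obtain a non-negative heatmap, and that the anomaly score is the heatmap's mean. These choices, along with the function names `heatmap`, `anomaly_score`, and `fcdd_style_loss`, are illustrative assumptions and are not stated in the abstract; the fully convolutional network itself is omitted.

```python
import math

def heatmap(features):
    """Assumed pseudo-Huber transform per entry: sqrt(v^2 + 1) - 1 (always >= 0)."""
    return [[math.sqrt(v * v + 1.0) - 1.0 for v in row] for row in features]

def anomaly_score(features):
    """Mean heatmap value; a larger value is read as more anomalous."""
    h = heatmap(features)
    n = sum(len(row) for row in h)
    return sum(sum(row) for row in h) / n

def fcdd_style_loss(features, is_anomaly, eps=1e-9):
    """Assumed objective: pull nominal scores toward 0, push anomalous scores away."""
    s = anomaly_score(features)
    if is_anomaly:
        # Penalize anomalies whose score is close to 0 (eps avoids log(0)).
        return -math.log(1.0 - math.exp(-s) + eps)
    # Nominal samples are penalized in proportion to their score.
    return s

# Hypothetical 2x2 feature maps standing in for network outputs.
nominal_map = [[0.0, 0.1], [0.0, 0.0]]
anomalous_map = [[0.0, 4.0], [0.0, 5.0]]
print(anomaly_score(nominal_map) < anomaly_score(anomalous_map))
```

Under these assumptions, the heatmap entries with large values mark the regions that drive the anomaly score, which is what lets the output double as an explanation.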

ICLR 2021

Results from the Paper

Ranked #5 on Anomaly Detection on One-class ImageNet-30 (using extra training data)

CIFAR-10

ImageNet

CIFAR-100

MNIST

Fashion-MNIST

MVTecAD
