Evaluating Language Models for Mathematics through Interactions

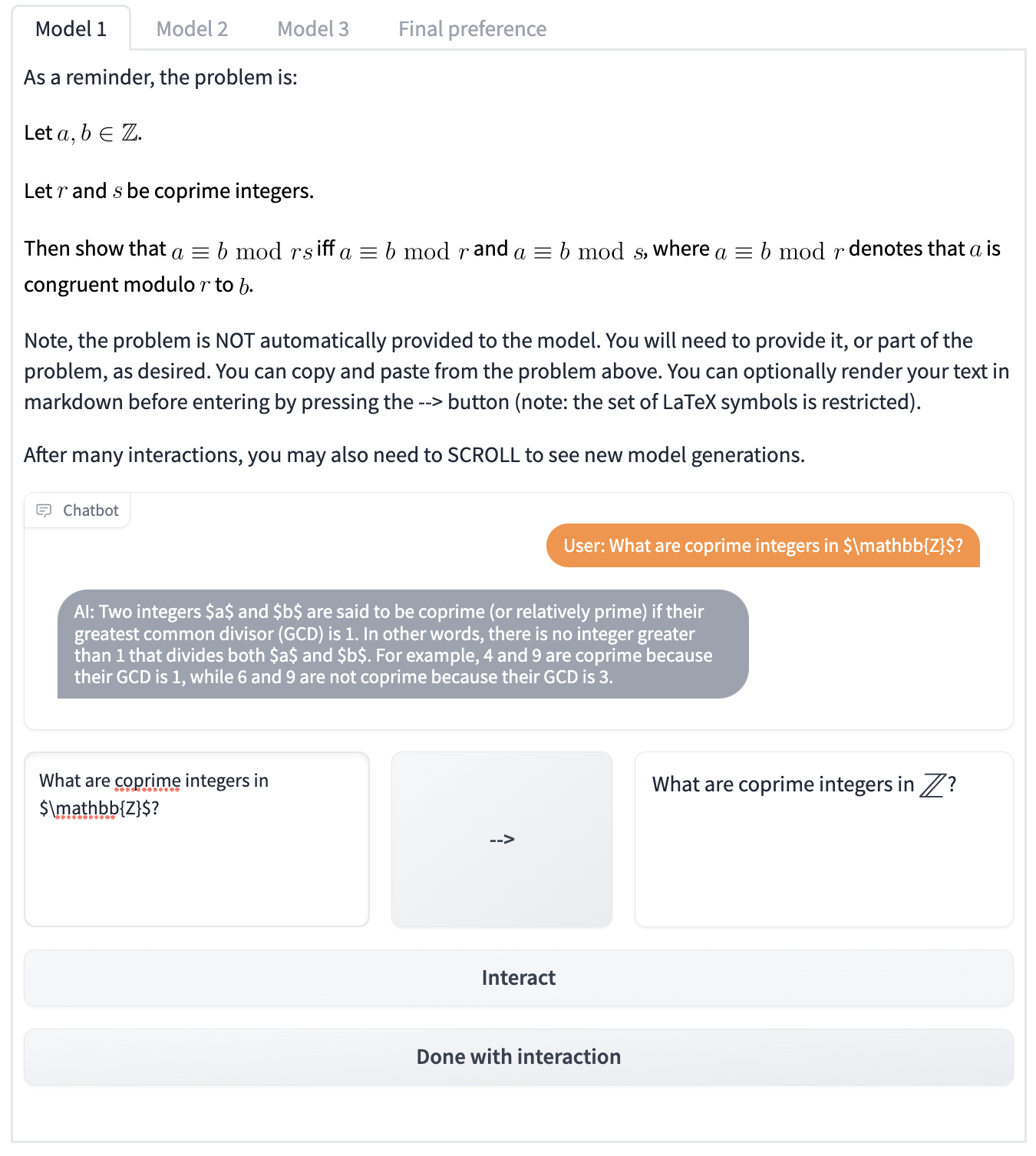

There is much excitement about the opportunity to harness the power of large language models (LLMs) when building problem-solving assistants. However, the standard methodology of evaluating LLMs relies on static pairs of inputs and outputs, and is insufficient for making an informed decision about which LLMs and under which assistive settings can they be sensibly used. Static assessment fails to account for the essential interactive element in LLM deployment, and therefore limits how we understand language model capabilities. We introduce CheckMate, an adaptable prototype platform for humans to interact with and evaluate LLMs. We conduct a study with CheckMate to evaluate three language models (InstructGPT, ChatGPT, and GPT-4) as assistants in proving undergraduate-level mathematics, with a mixed cohort of participants from undergraduate students to professors of mathematics. We release the resulting interaction and rating dataset, MathConverse. By analysing MathConverse, we derive a taxonomy of human behaviours and uncover that despite a generally positive correlation, there are notable instances of divergence between correctness and perceived helpfulness in LLM generations, amongst other findings. Further, we garner a more granular understanding of GPT-4 mathematical problem-solving through a series of case studies, contributed by expert mathematicians. We conclude with actionable takeaways for ML practitioners and mathematicians: models that communicate uncertainty respond well to user corrections, and are more interpretable and concise may constitute better assistants. Interactive evaluation is a promising way to navigate the capability of these models; humans should be aware of language models' algebraic fallibility and discern where they are appropriate to use.

PDF Abstract