Effective Approaches to Attention-based Neural Machine Translation

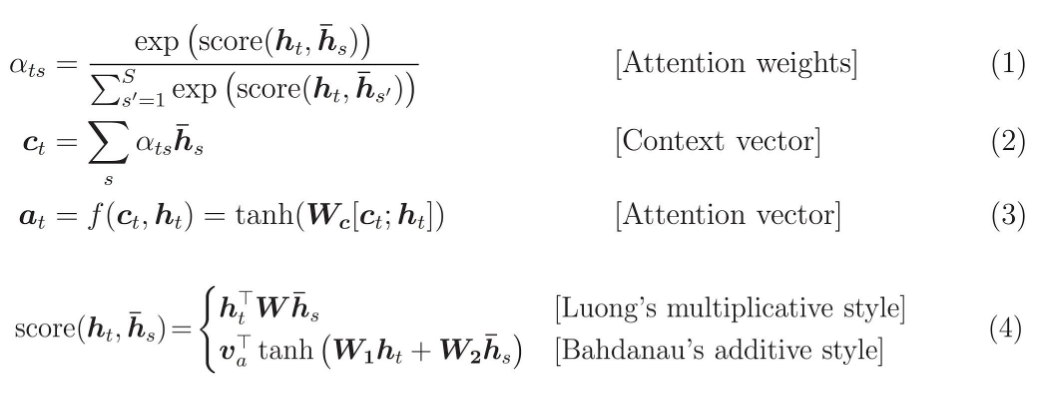

An attentional mechanism has lately been used to improve neural machine translation (NMT) by selectively focusing on parts of the source sentence during translation. However, there has been little work exploring useful architectures for attention-based NMT. This paper examines two simple and effective classes of attentional mechanism: a global approach which always attends to all source words and a local one that only looks at a subset of source words at a time. We demonstrate the effectiveness of both approaches over the WMT translation tasks between English and German in both directions. With local attention, we achieve a significant gain of 5.0 BLEU points over non-attentional systems which already incorporate known techniques such as dropout. Our ensemble model using different attention architectures has established a new state-of-the-art result in the WMT'15 English to German translation task with 25.9 BLEU points, an improvement of 1.0 BLEU points over the existing best system backed by NMT and an n-gram reranker.
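The contrast between the two classes can be illustrated with a minimal sketch. The abstract does not specify a scoring function or how the subset of source words is chosen, so the dot-product score, the `center` position, and the `half_width` window below are illustrative assumptions rather than the paper's exact formulation. The only point shown is that global attention normalizes weights over all source positions, while local attention normalizes over a subset.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def attend(target_state, source_states, positions):
    """Weight the source states at `positions`; return (weights, context).

    Scoring by dot product is an assumption made for this sketch.
    """
    scores = [dot(target_state, source_states[i]) for i in positions]
    weights = softmax(scores)
    dim = len(target_state)
    context = [
        sum(w * source_states[i][d] for w, i in zip(weights, positions))
        for d in range(dim)
    ]
    return weights, context


def global_attention(target_state, source_states):
    """Attend to every source position."""
    return attend(target_state, source_states, list(range(len(source_states))))


def local_attention(target_state, source_states, center, half_width):
    """Attend only to positions within `half_width` of `center`.

    Both parameters are hypothetical names chosen for illustration.
    """
    lo = max(0, center - half_width)
    hi = min(len(source_states), center + half_width + 1)
    return attend(target_state, source_states, list(range(lo, hi)))


# Toy example: four source words with 2-dimensional hidden states.
source = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
target = [1.0, 0.0]

global_weights, global_context = global_attention(target, source)
local_weights, local_context = local_attention(target, source, center=2, half_width=1)
```

In this toy setup the global call produces one weight per source word, while the local call produces weights only for the positions inside the window, so its cost does not grow with the full sentence length.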

EMNLP 2015

Results from the Paper

Ranked #1 on Machine Translation on 20NEWS (Accuracy metric)

WMT 2014