ConvNet vs Transformer, Supervised vs CLIP: Beyond ImageNet Accuracy

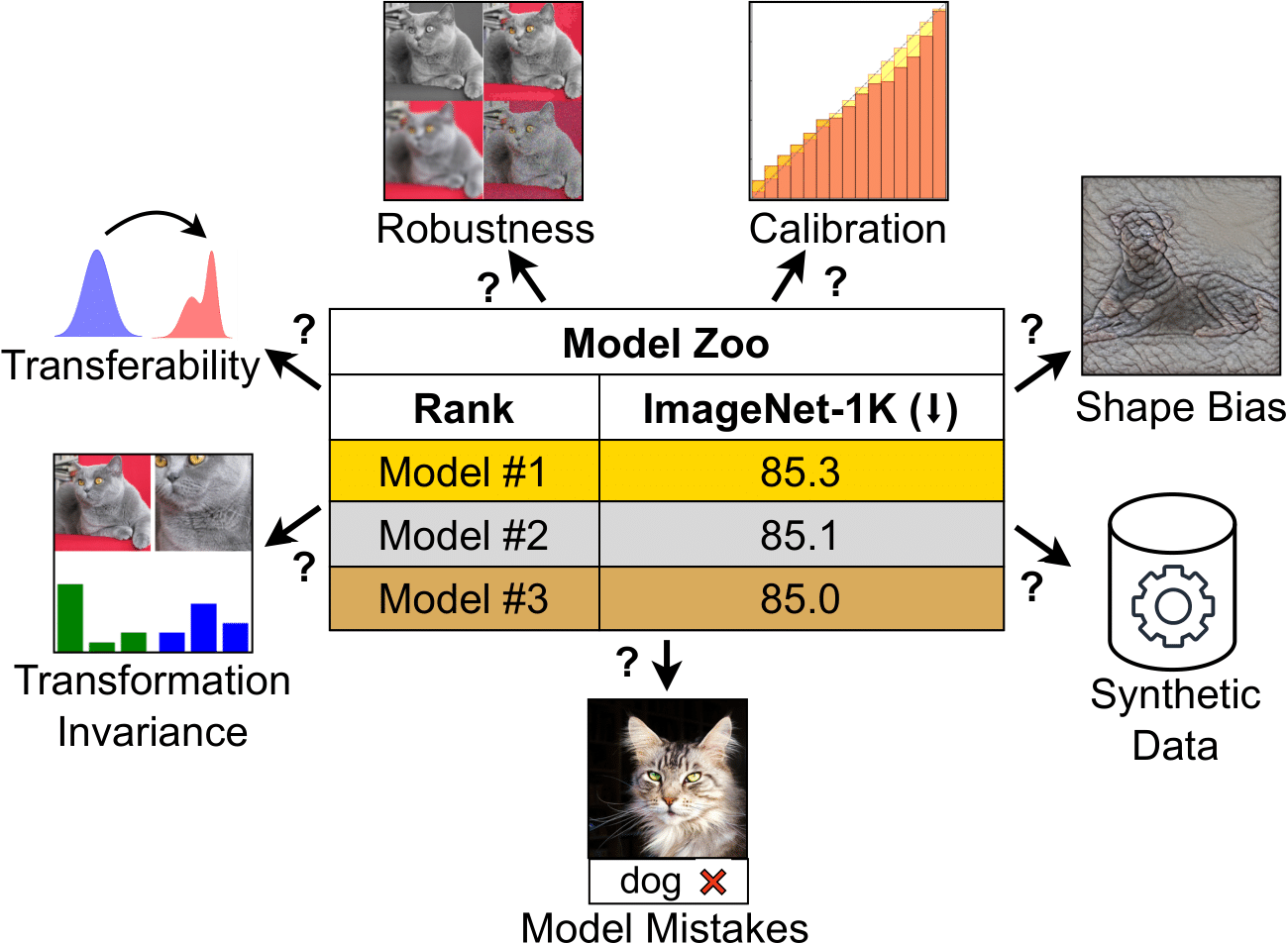

Modern computer vision offers a great variety of models to practitioners, and selecting a model from multiple options for specific applications can be challenging. Conventionally, competing model architectures and training protocols are compared by their classification accuracy on ImageNet. However, this single metric does not fully capture performance nuances critical for specialized tasks. In this work, we conduct an in-depth comparative analysis of model behaviors beyond ImageNet accuracy, for both ConvNet and Vision Transformer architectures, each across supervised and CLIP training paradigms. Although our selected models have similar ImageNet accuracies and compute requirements, we find that they differ in many other aspects: types of mistakes, output calibration, transferability, and feature invariance, among others. This diversity in model characteristics, not captured by traditional metrics, highlights the need for more nuanced analysis when choosing among different models. Our code is available at https://github.com/kirill-vish/Beyond-INet.
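Of the properties listed above, output calibration is straightforward to quantify. The abstract does not say which metric the authors use, so the following is only a minimal sketch of one common choice, expected calibration error (ECE) with equal-width confidence bins. The function name, the default of 10 bins, and the toy inputs are assumptions made for illustration and are not taken from the paper or its repository.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Illustrative ECE: weighted mean of |accuracy - confidence| over bins.

    confidences: top-1 predicted probabilities in [0, 1]
    correct:     1 if the prediction was right, else 0
    n_bins:      number of equal-width bins (assumed default, not from the paper)
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    n = len(confidences)
    if n == 0:
        raise ValueError("at least one prediction is required")

    conf_sum = [0.0] * n_bins   # sum of confidences per bin
    hit_sum = [0.0] * n_bins    # number of correct predictions per bin
    count = [0] * n_bins        # number of predictions per bin

    for c, ok in zip(confidences, correct):
        # Bin k covers [k / n_bins, (k + 1) / n_bins); c == 1.0 goes to the last bin.
        k = min(int(c * n_bins), n_bins - 1)
        conf_sum[k] += c
        hit_sum[k] += ok
        count[k] += 1

    ece = 0.0
    for k in range(n_bins):
        if count[k] == 0:
            continue  # empty bins contribute nothing
        avg_conf = conf_sum[k] / count[k]
        accuracy = hit_sum[k] / count[k]
        ece += (count[k] / n) * abs(accuracy - avg_conf)
    return ece


# Toy example: the model is 90% confident on four images but right on only three.
ece = expected_calibration_error([0.9, 0.9, 0.9, 0.9], [1, 1, 1, 0])
```

Two models with the same accuracy can produce very different values here, which is the kind of difference a single ImageNet accuracy figure cannot show.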

ImageNet

CIFAR-100

SVHN

Oxford 102 Flower

DTD

Caltech-101

CLEVR

ImageNet-C

ImageNet-R

ImageNet-A

ImageNet-Sketch

dSprites

SUN397

ImageNet-X

ImageNet-Hard