Balancing Biases and Preserving Privacy on Balanced Faces in the Wild

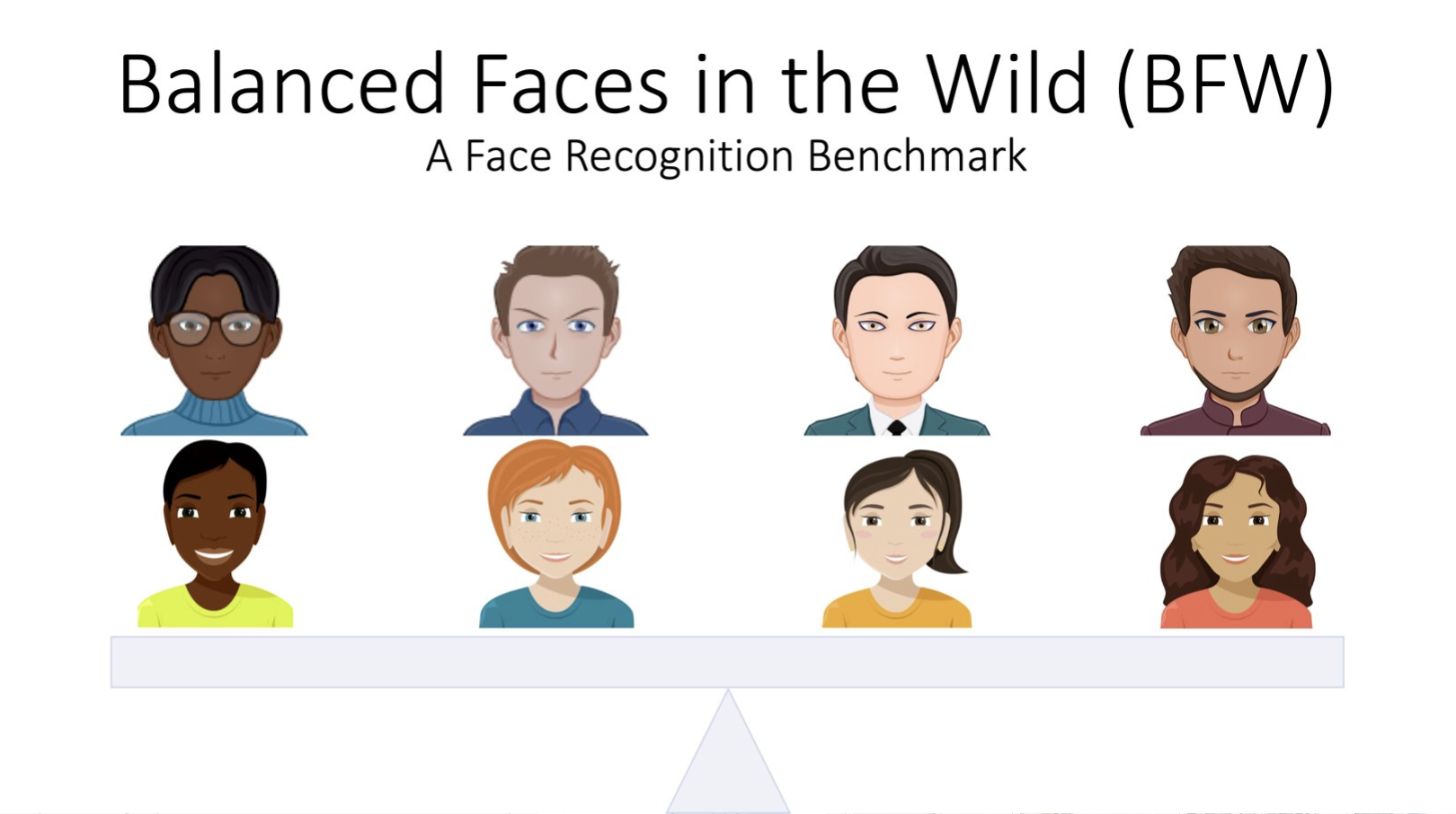

Current facial recognition (FR) models exhibit demographic biases. To measure these biases across ethnic and gender subgroups, we introduce the Balanced Faces in the Wild (BFW) dataset, which enables per-subgroup characterization of FR performance. We find that relying on a single score threshold to separate genuine from imposter sample pairs leads to suboptimal results. Additionally, performance within subgroups often deviates significantly from the global average. Consequently, a specified error rate holds only for populations that match the validation data. To mitigate these performance imbalances, we propose a novel domain adaptation learning scheme applied to facial features extracted from state-of-the-art neural networks. The scheme boosts average performance and preserves identity information while removing demographic knowledge. Removing this knowledge both prevents potential demographic biases from affecting decision-making and protects privacy. We explore the proposed method and demonstrate that subgroup classifiers can no longer learn from features projected with our domain adaptation scheme. The source code and data are available at https://github.com/visionjo/facerec-bias-bfw.
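
The claim that a single global threshold behaves differently per subgroup can be illustrated with a minimal sketch. It assumes synthetic similarity scores (not BFW data), two hypothetical subgroups `group_a` and `group_b`, and a decision rule that accepts a pair as genuine when its score is strictly above the threshold. All function names are hypothetical. The sketch calibrates one threshold on pooled imposter pairs at a target false-positive rate (FPR), then compares each subgroup's FPR under that threshold with its FPR under a threshold calibrated on that subgroup alone. Subgroup-specific thresholds are shown only as one simple way to expose the imbalance; this is not the domain adaptation scheme described above.

```python
import random


def threshold_at_fpr(imposter_scores, target_fpr):
    """Return t such that at most target_fpr of imposter scores are strictly above t."""
    if not 0.0 <= target_fpr < 1.0:
        raise ValueError("target_fpr must be in [0, 1)")
    ranked = sorted(imposter_scores, reverse=True)
    k = int(target_fpr * len(ranked))  # max number of imposter pairs we may accept
    return ranked[k]  # only ranked[0..k-1] can lie strictly above this value


def rate_above(scores, t):
    """Fraction of scores strictly above t (FPR for imposters, TPR for genuine pairs)."""
    return sum(s > t for s in scores) / len(scores)


def make_synthetic_scores(seed=0, n=5000):
    """Hypothetical cosine-similarity scores for two subgroups (NOT BFW data).

    group_b's imposter scores are shifted upward (assumed values) to mimic a
    subgroup whose faces the model finds harder to tell apart.
    """
    rng = random.Random(seed)
    imposter_means = {"group_a": 0.20, "group_b": 0.35}
    data = {}
    for group, mu in imposter_means.items():
        data[group] = {
            "imposter": [rng.gauss(mu, 0.1) for _ in range(n)],
            "genuine": [rng.gauss(0.70, 0.1) for _ in range(n)],
        }
    return data


def compare_thresholds(data, target_fpr):
    """Per subgroup: FPR under the pooled threshold vs. under its own threshold."""
    pooled = [s for g in data.values() for s in g["imposter"]]
    t_global = threshold_at_fpr(pooled, target_fpr)
    report = {}
    for group, g in data.items():
        t_own = threshold_at_fpr(g["imposter"], target_fpr)
        report[group] = {
            "fpr_global": rate_above(g["imposter"], t_global),
            "fpr_own": rate_above(g["imposter"], t_own),
            "tpr_own": rate_above(g["genuine"], t_own),
        }
    return t_global, report


if __name__ == "__main__":
    t_global, report = compare_thresholds(make_synthetic_scores(), target_fpr=0.01)
    print(f"global threshold = {t_global:.3f}")
    for group, r in report.items():
        print(f"{group}: FPR@global={r['fpr_global']:.4f}  "
              f"FPR@own={r['fpr_own']:.4f}  TPR@own={r['tpr_own']:.4f}")
```

With these assumed distributions, the pooled threshold accepts well over 1% of `group_b` imposter pairs and well under 1% of `group_a` pairs, so the nominal 1% FPR holds for neither subgroup individually. Calibrating on each subgroup brings both back to the target.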

LFW

CASIA-WebFace

DemogPairs