AttentionGAN: Unpaired Image-to-Image Translation using Attention-Guided Generative Adversarial Networks

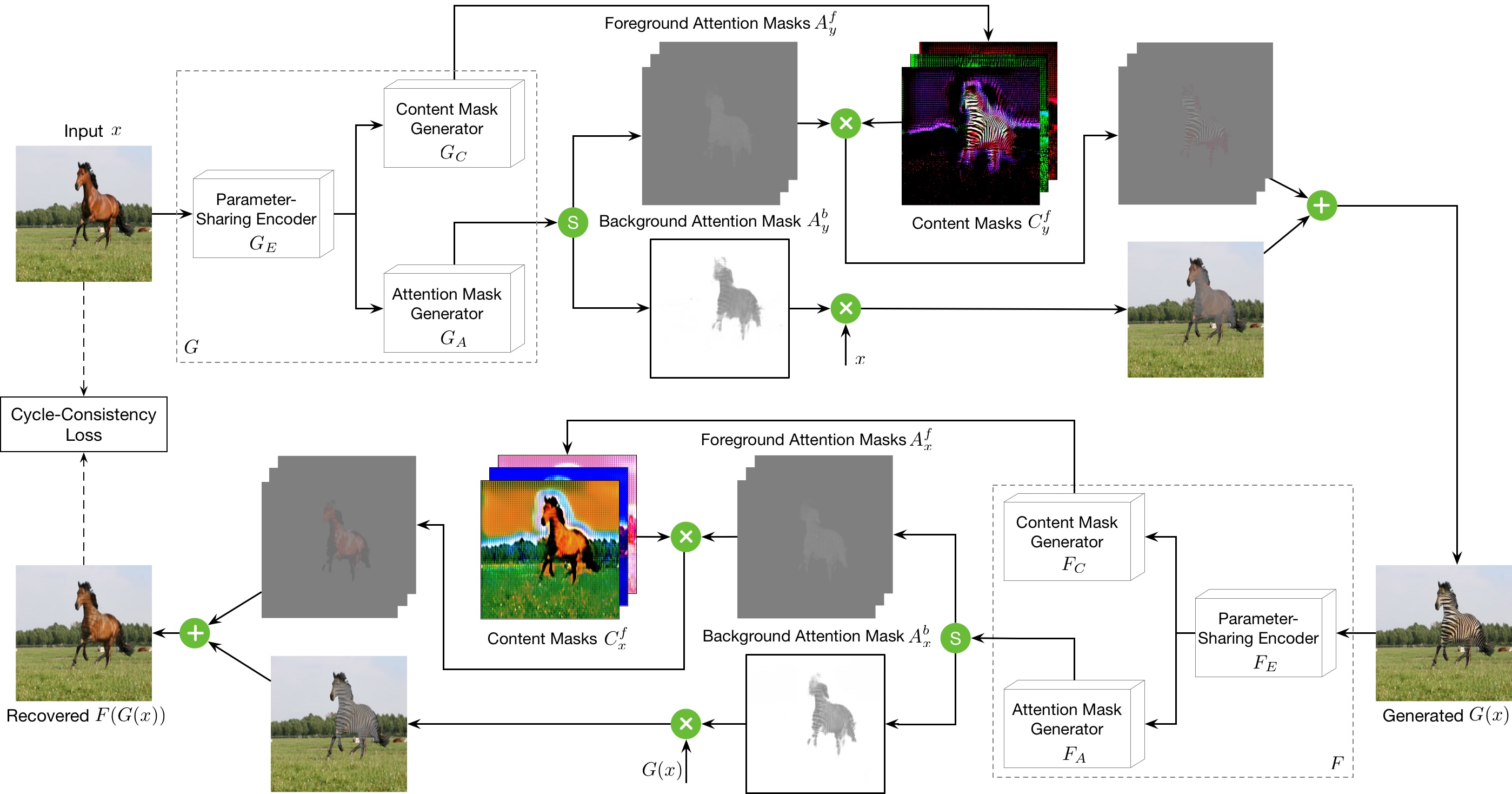

State-of-the-art methods in image-to-image translation are capable of learning a mapping from a source domain to a target domain with unpaired image data. Though the existing methods have achieved promising results, they still produce visual artifacts, being able to translate low-level information but not high-level semantics of input images. One possible reason is that generators do not have the ability to perceive the most discriminative parts between the source and target domains, thus making the generated images low quality. In this paper, we propose a new Attention-Guided Generative Adversarial Networks (AttentionGAN) for the unpaired image-to-image translation task. AttentionGAN can identify the most discriminative foreground objects and minimize the change of the background. The attention-guided generators in AttentionGAN are able to produce attention masks, and then fuse the generation output with the attention masks to obtain high-quality target images. Accordingly, we also design a novel attention-guided discriminator which only considers attended regions. Extensive experiments are conducted on several generative tasks with eight public datasets, demonstrating that the proposed method is effective to generate sharper and more realistic images compared with existing competitive models. The code is available at https://github.com/Ha0Tang/AttentionGAN.

PDF Abstract

CelebA

CelebA

RaFD

RaFD

selfie2anime

selfie2anime