Adapting Segment Anything Model for Change Detection in HR Remote Sensing Images

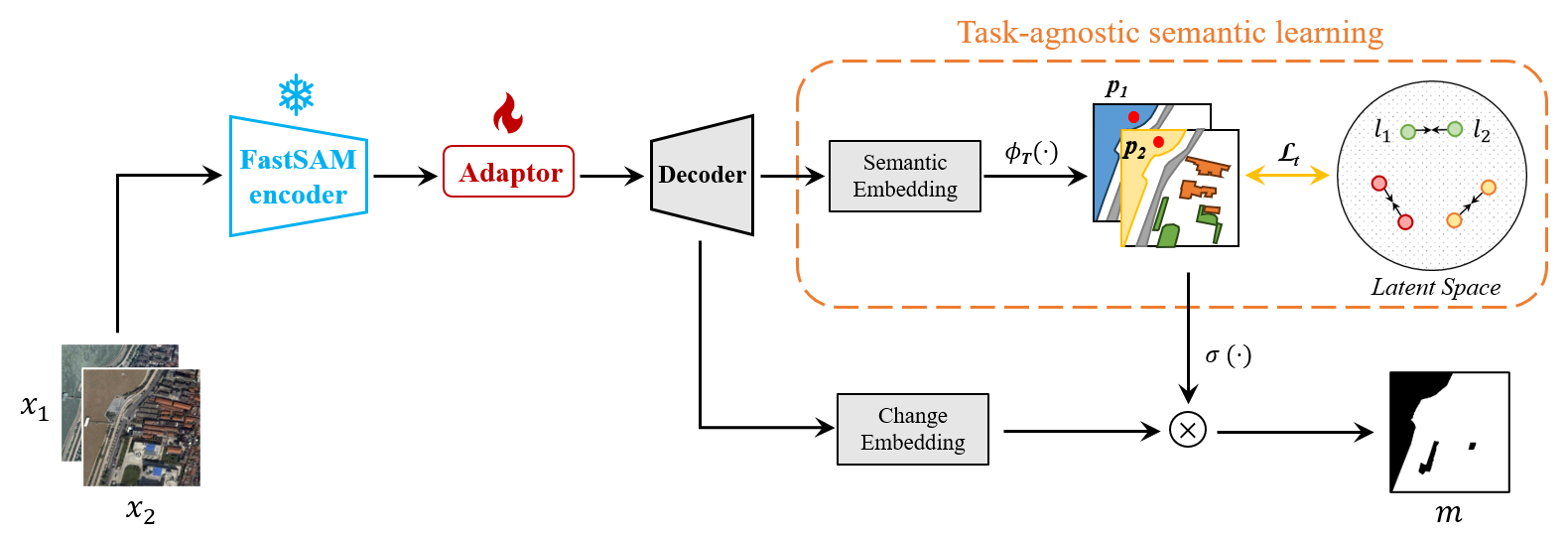

Vision Foundation Models (VFMs) such as the Segment Anything Model (SAM) allow zero-shot or interactive segmentation of visual contents, thus they are quickly applied in a variety of visual scenes. However, their direct use in many Remote Sensing (RS) applications is often unsatisfactory due to the special imaging characteristics of RS images. In this work, we aim to utilize the strong visual recognition capabilities of VFMs to improve the change detection of high-resolution Remote Sensing Images (RSIs). We employ the visual encoder of FastSAM, an efficient variant of the SAM, to extract visual representations in RS scenes. To adapt FastSAM to focus on some specific ground objects in the RS scenes, we propose a convolutional adaptor to aggregate the task-oriented change information. Moreover, to utilize the semantic representations that are inherent to SAM features, we introduce a task-agnostic semantic learning branch to model the semantic latent in bi-temporal RSIs. The resulting method, SAMCD, obtains superior accuracy compared to the SOTA methods and exhibits a sample-efficient learning ability that is comparable to semi-supervised CD methods. To the best of our knowledge, this is the first work that adapts VFMs for the CD of HR RSIs.

PDF Abstract

S2Looking

S2Looking