Search Results for author: Miki Tanaka

Found 7 papers, 0 papers with code

Enhanced Security against Adversarial Examples Using a Random Ensemble of Encrypted Vision Transformer Models

no code implementations • 26 Jul 2023 • Ryota Iijima, Miki Tanaka, Sayaka Shiota, Hitoshi Kiya

Previous studies confirmed that the vision transformer (ViT) is more robust against adversarial transferability than convolutional neural network (CNN) models such as ConvMixer, and that an encrypted ViT is more robust than a ViT without encryption.

On the Adversarial Transferability of ConvMixer Models

no code implementations • 19 Sep 2022 • Ryota Iijima, Miki Tanaka, Isao Echizen, Hitoshi Kiya

Deep neural networks (DNNs) are well known to be vulnerable to adversarial examples (AEs).

On the Transferability of Adversarial Examples between Encrypted Models

no code implementations • 7 Sep 2022 • Miki Tanaka, Isao Echizen, Hitoshi Kiya

Deep neural networks (DNNs) are well known to be vulnerable to adversarial examples (AEs).

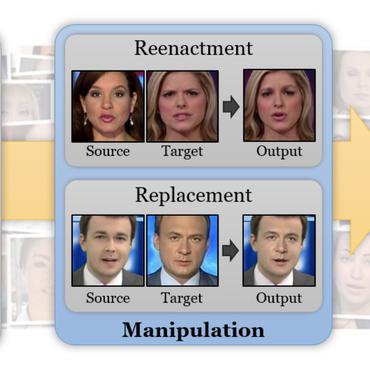

A Detection Method of Temporally Operated Videos Using Robust Hashing

no code implementations • 10 Aug 2022 • Shoko Niwa, Miki Tanaka, Hitoshi Kiya

In addition, videos may be temporally manipulated, for example by inserting new frames or permuting existing frames, and such operations are difficult to detect with conventional methods.

A universal detector of CNN-generated images using properties of checkerboard artifacts in the frequency domain

no code implementations • 4 Aug 2021 • Miki Tanaka, Sayaka Shiota, Hitoshi Kiya

In addition, an ensemble of the proposed detector with emphasized spectra and a conventional detector is proposed to improve the performance of these methods.

Fake-image detection with Robust Hashing

no code implementations • 2 Feb 2021 • Miki Tanaka, Hitoshi Kiya

In this paper, we investigate for the first time whether robust hashing can robustly detect fake images even when multiple manipulation techniques, such as JPEG compression, are applied to them.

CycleGAN without checkerboard artifacts for counter-forensics of fake-image detection

no code implementations • 1 Dec 2020 • Takayuki Osakabe, Miki Tanaka, Yuma Kinoshita, Hitoshi Kiya

In this paper, we propose a novel CycleGAN without checkerboard artifacts for counter-forensics of fake-image detection.