Search Results for author: Boyuan Jiang

Found 12 papers, 6 papers with code

M2-CLIP: A Multimodal, Multi-task Adapting Framework for Video Action Recognition

no code implementations • 22 Jan 2024 • Mengmeng Wang, Jiazheng Xing, Boyuan Jiang, Jun Chen, Jianbiao Mei, Xingxing Zuo, Guang Dai, Jingdong Wang, Yong Liu

In this paper, we introduce a novel Multimodal, Multi-task CLIP adapting framework named M2-CLIP to address these challenges, preserving both high supervised performance and robust transferability.

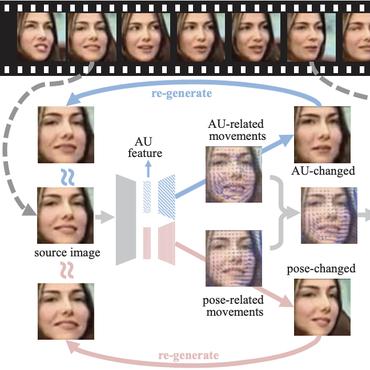

PortraitBooth: A Versatile Portrait Model for Fast Identity-preserved Personalization

no code implementations • 11 Dec 2023 • Xu Peng, Junwei Zhu, Boyuan Jiang, Ying Tai, Donghao Luo, Jiangning Zhang, Wei Lin, Taisong Jin, Chengjie Wang, Rongrong Ji

Moreover, these methods often grapple with identity distortion and limited expression diversity.

Probabilistic Triangulation for Uncalibrated Multi-View 3D Human Pose Estimation

1 code implementation • ICCV 2023 • Boyuan Jiang, Lei Hu, Shihong Xia

The key idea is to use a probability distribution to model the camera pose and iteratively update the distribution from 2D features instead of using camera pose.

Dynamic Frame Interpolation in Wavelet Domain

1 code implementation • 7 Sep 2023 • Lingtong Kong, Boyuan Jiang, Donghao Luo, Wenqing Chu, Ying Tai, Chengjie Wang, Jie Yang

Video frame interpolation is an important low-level vision task, which can increase frame rate for more fluent visual experience.

Pose-aware Attention Network for Flexible Motion Retargeting by Body Part

1 code implementation • 13 Jun 2023 • Lei Hu, Zihao Zhang, Chongyang Zhong, Boyuan Jiang, Shihong Xia

Moreover, we also show that our framework can generate reasonable results even for a more challenging retargeting scenario, like retargeting between bipedal and quadrupedal skeletons because of the body part retargeting strategy and PAN.

IFRNet: Intermediate Feature Refine Network for Efficient Frame Interpolation

2 code implementations • CVPR 2022 • Lingtong Kong, Boyuan Jiang, Donghao Luo, Wenqing Chu, Xiaoming Huang, Ying Tai, Chengjie Wang, Jie Yang

Prevailing video frame interpolation algorithms, that generate the intermediate frames from consecutive inputs, typically rely on complex model architectures with heavy parameters or large delay, hindering them from diverse real-time applications.

Ranked #1 on Video Frame Interpolation on Middlebury

Learning Comprehensive Motion Representation for Action Recognition

no code implementations • 23 Mar 2021 • Mingyu Wu, Boyuan Jiang, Donghao Luo, Junchi Yan, Yabiao Wang, Ying Tai, Chengjie Wang, Jilin Li, Feiyue Huang, Xiaokang Yang

For action recognition learning, 2D CNN-based methods are efficient but may yield redundant features due to applying the same 2D convolution kernel to each frame.

Multi-Level Adaptive Region of Interest and Graph Learning for Facial Action Unit Recognition

no code implementations • 24 Feb 2021 • Jingwei Yan, Boyuan Jiang, Jingjing Wang, Qiang Li, Chunmao Wang, ShiLiang Pu

In order to incorporate the intra-level AU relation and inter-level AU regional relevance simultaneously, a multi-level AU relation graph is constructed and graph convolution is performed to further enhance AU regional features of each level.

STM: SpatioTemporal and Motion Encoding for Action Recognition

no code implementations • ICCV 2019 • Boyuan Jiang, Mengmeng Wang, Weihao Gan, Wei Wu, Junjie Yan

Spatiotemporal and motion features are two complementary and crucial information for video action recognition.

Ranked #1 on Action Recognition In Videos on HMDB-51

Selective Transfer with Reinforced Transfer Network for Partial Domain Adaptation

no code implementations • CVPR 2020 • Zhihong Chen, Chao Chen, Zhaowei Cheng, Boyuan Jiang, Ke Fang, Xinyu Jin

However, since the domain shift between source and target domains, only using the deep features for sample selection is defective.

Ranked #6 on Partial Domain Adaptation on Office-31

Parameter Transfer Extreme Learning Machine based on Projective Model

1 code implementation • 4 Sep 2018 • Chao Chen, Boyuan Jiang, Xinyu Jin

Unlike the existing parameter transfer approaches, which incorporate the source model information into the target by regularizing the difference between the source and target domain parameters, an intuitively appealing projective-model is proposed to bridge the source and target model parameters.

Joint Domain Alignment and Discriminative Feature Learning for Unsupervised Deep Domain Adaptation

1 code implementation • 28 Aug 2018 • Chao Chen, Zhihong Chen, Boyuan Jiang, Xinyu Jin

Recently, considerable effort has been devoted to deep domain adaptation in computer vision and machine learning communities.