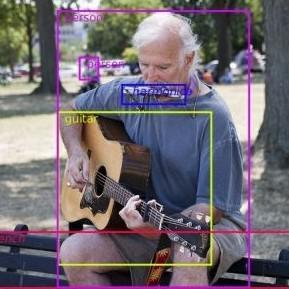

Zero-Shot Object Detection

26 papers with code • 7 benchmarks • 6 datasets

Zero-shot object detection (ZSD) is the task of object detection where no visual training data is available for some of the target object classes.

(Image credit: Zero-Shot Object Detection: Learning to Simultaneously Recognize and Localize Novel Concepts)
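A common way to make ZSD concrete is to score each detected region against a semantic embedding of every class name, including classes that had no training images. Several of the approaches excerpted on this page project visual features into a semantic space in this way. The sketch below assumes that region and class embeddings already live in the same space. The toy vectors and class names are hypothetical stand-ins for the outputs of a trained detector and a word or text encoder.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def classify_region(region_emb, class_embs):
    """Return (best_class, scores) by comparing a projected region embedding
    with the semantic embedding of every class, seen or unseen."""
    scores = {name: cosine(region_emb, emb) for name, emb in class_embs.items()}
    best = max(scores, key=scores.get)
    return best, scores

# Hypothetical 3-d semantic embeddings; "zebra" had no training images.
class_embs = {
    "horse": [0.9, 0.1, 0.0],   # seen class
    "dog":   [0.1, 0.9, 0.0],   # seen class
    "zebra": [0.6, 0.0, 0.8],   # unseen class, known only through its embedding
}

# Hypothetical region embedding produced by a detector's projection head.
region = [0.5, 0.05, 0.85]
label, scores = classify_region(region, class_embs)
print(label)  # zebra
```

The unseen class can win only because its embedding is available at inference time. This is why the quality of the visual-semantic alignment matters so much in ZSD.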

Libraries

Use these libraries to find Zero-Shot Object Detection models and implementations.

Most implemented papers

ELEVATER: A Benchmark and Toolkit for Evaluating Language-Augmented Visual Models

In general, these language-augmented visual models demonstrate strong transferability to a variety of datasets and tasks.

Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection

To effectively fuse language and vision modalities, we conceptually divide a closed-set detector into three phases and propose a tight fusion solution, which includes a feature enhancer, a language-guided query selection, and a cross-modality decoder for cross-modality fusion.
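The language-guided query selection step can be illustrated in a simplified form. Each image token is scored by its highest similarity to any text token, and the top-scoring image tokens are kept to initialize decoder queries. This is a sketch based only on that description. The token values and `num_queries` are toy assumptions, and the real model operates on batched tensors of learned features.

```python
def select_queries(image_tokens, text_tokens, num_queries):
    """Return indices of the image tokens most relevant to the text.

    Each image token's relevance is its maximum dot product with any text token.
    """
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    relevance = [max(dot(img, txt) for txt in text_tokens) for img in image_tokens]
    # Rank token indices by relevance, highest first, and keep the top ones.
    ranked = sorted(range(len(image_tokens)), key=lambda i: relevance[i], reverse=True)
    return ranked[:num_queries]

# Hypothetical 2-d features for four image tokens and two text tokens.
image_tokens = [[0.1, 0.0], [0.9, 0.2], [0.0, 0.8], [0.4, 0.4]]
text_tokens = [[1.0, 0.0], [0.0, 1.0]]
selected = select_queries(image_tokens, text_tokens, num_queries=2)
print(selected)  # [1, 2]
```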

Zero-Shot Instance Segmentation

We follow this motivation and propose a new task set named zero-shot instance segmentation (ZSI).

Open-vocabulary Object Detection via Vision and Language Knowledge Distillation

On COCO, ViLD outperforms the previous state-of-the-art by 4.8 on novel AP and 11.4 on overall AP.
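ViLD distills a pretrained image-text model into a detector. Region embeddings are trained to match the teacher's embeddings of the cropped proposals. Regions are then classified by comparing them with text embeddings of category names. The minimal sketch below covers those two pieces and assumes two things: an L1 distillation loss, and a temperature-scaled softmax over cosine similarities. The vectors, category names, and temperature value are hypothetical. Parts of the full method, such as a learned background embedding, are omitted.

```python
import math

def l1_distill_loss(student, teacher):
    """Mean absolute difference between a region embedding (student)
    and the teacher's embedding of the same cropped region."""
    return sum(abs(s - t) for s, t in zip(student, teacher)) / len(student)

def normalize(v):
    """Scale a vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def text_softmax(region, text_embs, temperature=0.01):
    """Softmax over cosine similarities between a region and class-name embeddings."""
    r = normalize(region)
    logits = [sum(a * b for a, b in zip(r, normalize(t))) / temperature for t in text_embs]
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

region = [0.2, 0.9, 0.1]       # hypothetical detector region embedding
teacher = [0.25, 0.85, 0.1]    # hypothetical teacher embedding of the crop
text_embs = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # hypothetical "person", "umbrella"

loss = l1_distill_loss(region, teacher)
probs = text_softmax(region, text_embs)
print(round(loss, 4), [round(p, 3) for p in probs])
```

Because novel categories enter only through their text embeddings, new classes can be added at inference time by embedding their names.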

Polarity Loss for Zero-shot Object Detection

This setting gives rise to the need for correct alignment between visual and semantic concepts, so that the unseen objects can be identified using only their semantic attributes.

Learning Open-World Object Proposals without Learning to Classify

In this paper, we identify that the problem is that the binary classifiers in existing proposal methods tend to overfit to the training categories.
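One way to avoid that overfitting is to score proposals with class-agnostic localization-quality signals instead of a foreground/background classifier. The paper's Object Localization Network (OLN) takes this route using centerness and IoU estimates. The sketch below only computes such quality values for a box relative to a ground-truth box, using the FCOS-style centerness definition. Three details are illustrative assumptions here rather than a reproduction of the paper's exact head: the (x1, y1, x2, y2) box format, the toy boxes, and combining the two values with a geometric mean.

```python
import math

def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def centerness(x, y, box):
    """FCOS-style centerness of point (x, y) inside box; 1.0 at the box center."""
    l, t = x - box[0], y - box[1]
    r, b = box[2] - x, box[3] - y
    if min(l, t, r, b) <= 0:
        return 0.0  # point lies on or outside the box border
    return math.sqrt((min(l, r) / max(l, r)) * (min(t, b) / max(t, b)))

gt = (0.0, 0.0, 10.0, 10.0)
proposal = (0.0, 0.0, 10.0, 5.0)
iou_score = iou(proposal, gt)          # 50 / 100 = 0.5
ctr_score = centerness(5.0, 5.0, gt)   # exact center -> 1.0
# Class-agnostic objectness: geometric mean of the two quality estimates.
score = math.sqrt(iou_score * ctr_score)
print(iou_score, ctr_score, round(score, 4))
```

Neither value depends on the object's category. This is the property that lets such a scorer generalize beyond the training classes.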

Zero-Shot Object Detection by Hybrid Region Embedding

Object detection is considered as one of the most challenging problems in computer vision, since it requires correct prediction of both classes and locations of objects in images.

Synthesizing the Unseen for Zero-shot Object Detection

The existing zero-shot detection approaches project visual features to the semantic domain for seen objects, hoping to map unseen objects to their corresponding semantics during inference.

Grounded Language-Image Pre-training

The unification brings two benefits: 1) it allows GLIP to learn from both detection and grounding data to improve both tasks and bootstrap a good grounding model; 2) GLIP can leverage massive image-text pairs by generating grounding boxes in a self-training fashion, making the learned representation semantic-rich.
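GLIP's unification treats detection as phrase grounding. Instead of applying a fixed classifier, each region is scored against the words of a text prompt, for example the class names joined into one sentence. The minimal sketch below illustrates this alignment-score idea and assumes that region and word features already share one space. The features, prompt, tokenization, and the choice to average a class's token scores are hypothetical simplifications, not GLIP's exact head.

```python
def alignment_scores(regions, words):
    """Dot-product alignment matrix: one row per region, one column per word token."""
    return [[sum(r_i * w_i for r_i, w_i in zip(r, w)) for w in words] for r in regions]

def class_scores(align_row, phrase_spans):
    """Average a region's token scores over each class phrase's [start, end) span."""
    return {name: sum(align_row[s:e]) / (e - s) for name, (s, e) in phrase_spans.items()}

# Hypothetical prompt "person. bicycle." tokenized as ["person", "bi", "cycle"].
words = [[1.0, 0.0], [0.0, 1.0], [0.1, 0.9]]
phrase_spans = {"person": (0, 1), "bicycle": (1, 3)}
regions = [[0.9, 0.1], [0.2, 0.8]]  # hypothetical region features

align = alignment_scores(regions, words)
labels = []
for row in align:
    scores = class_scores(row, phrase_spans)
    labels.append(max(scores, key=scores.get))
print(labels)  # ['person', 'bicycle']
```

Because the "classifier" is just the prompt, the same scoring applies unchanged to grounding data and to new class names.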

Efficient Feature Distillation for Zero-shot Annotation Object Detection

We propose a new setting for detecting unseen objects called Zero-shot Annotation object Detection (ZAD).

MS COCO

LVIS

PASCAL VOC 2007

MSCOCO

RF100